Abstract

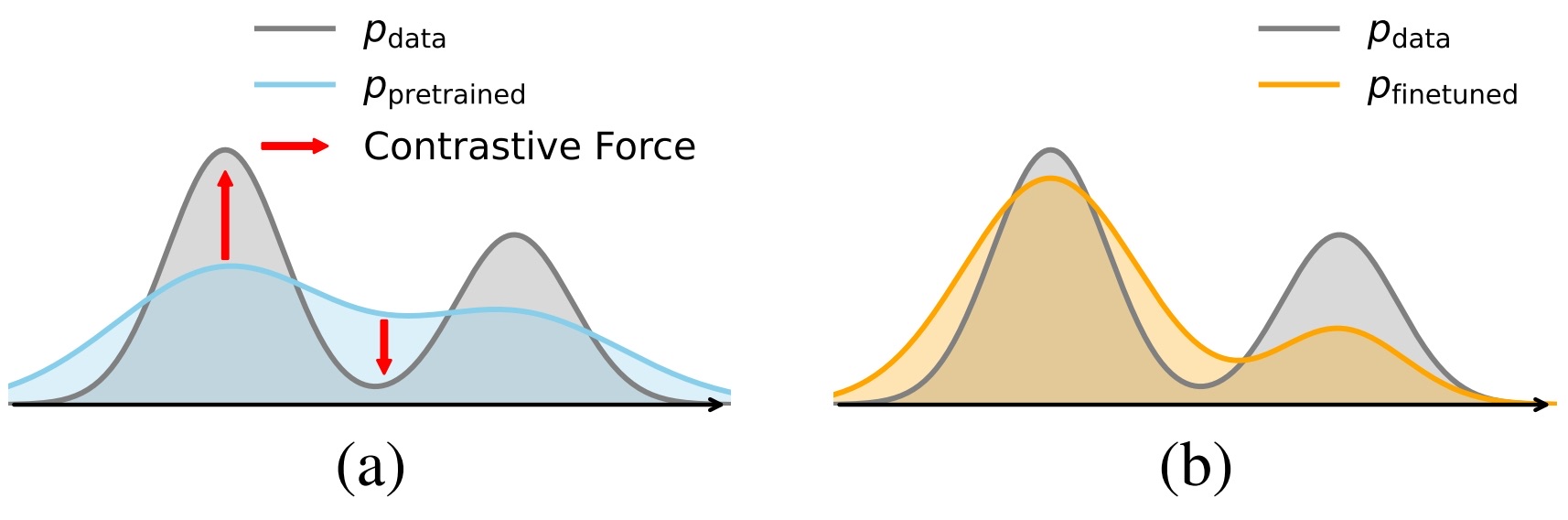

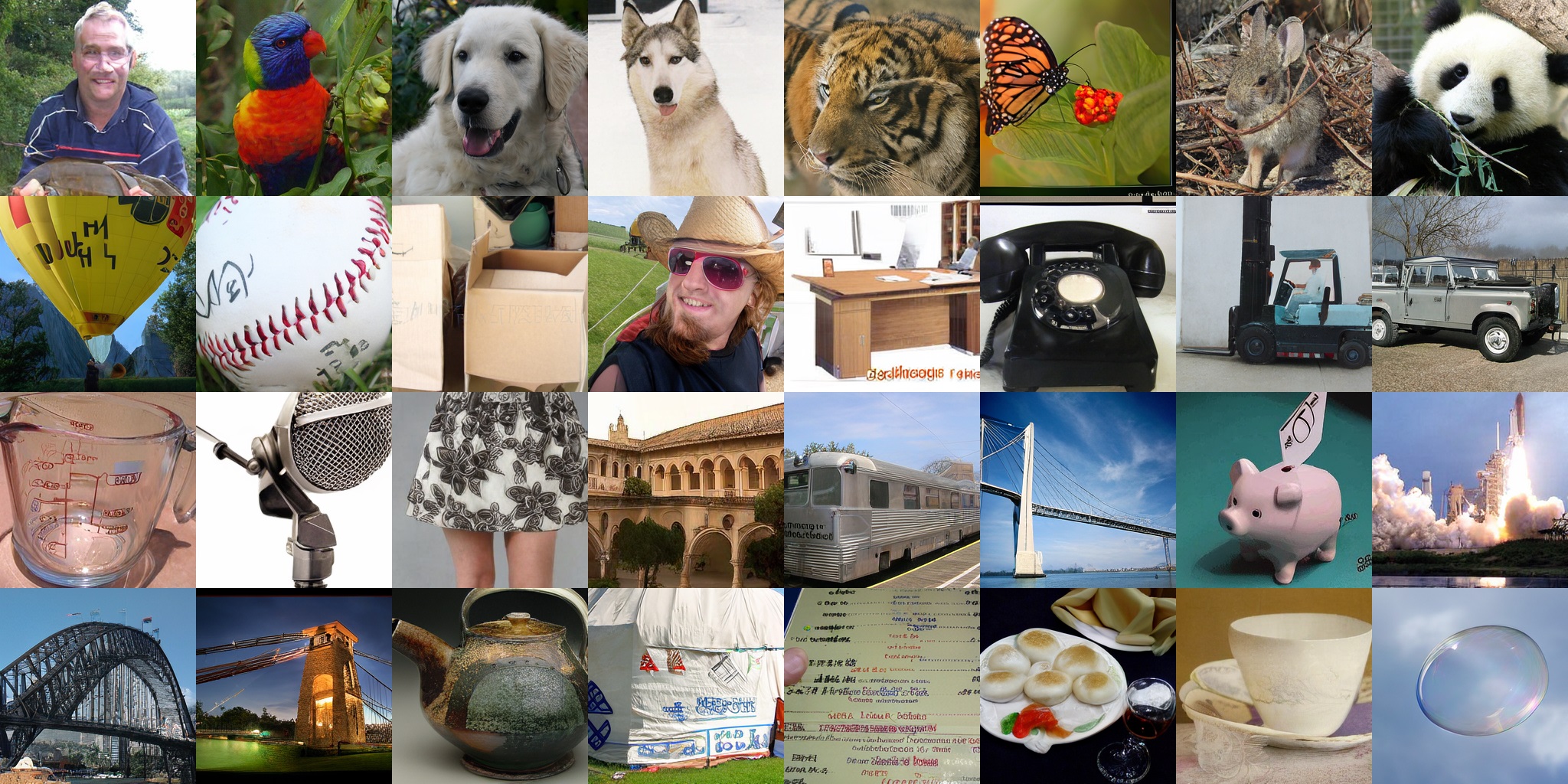

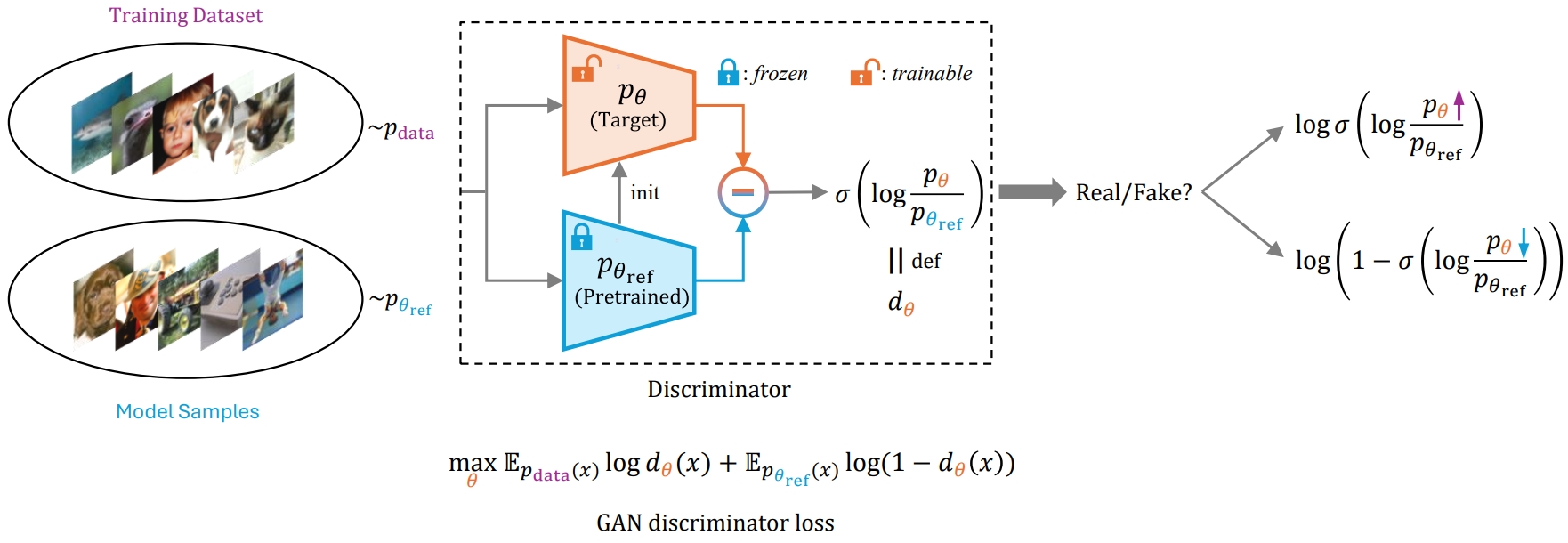

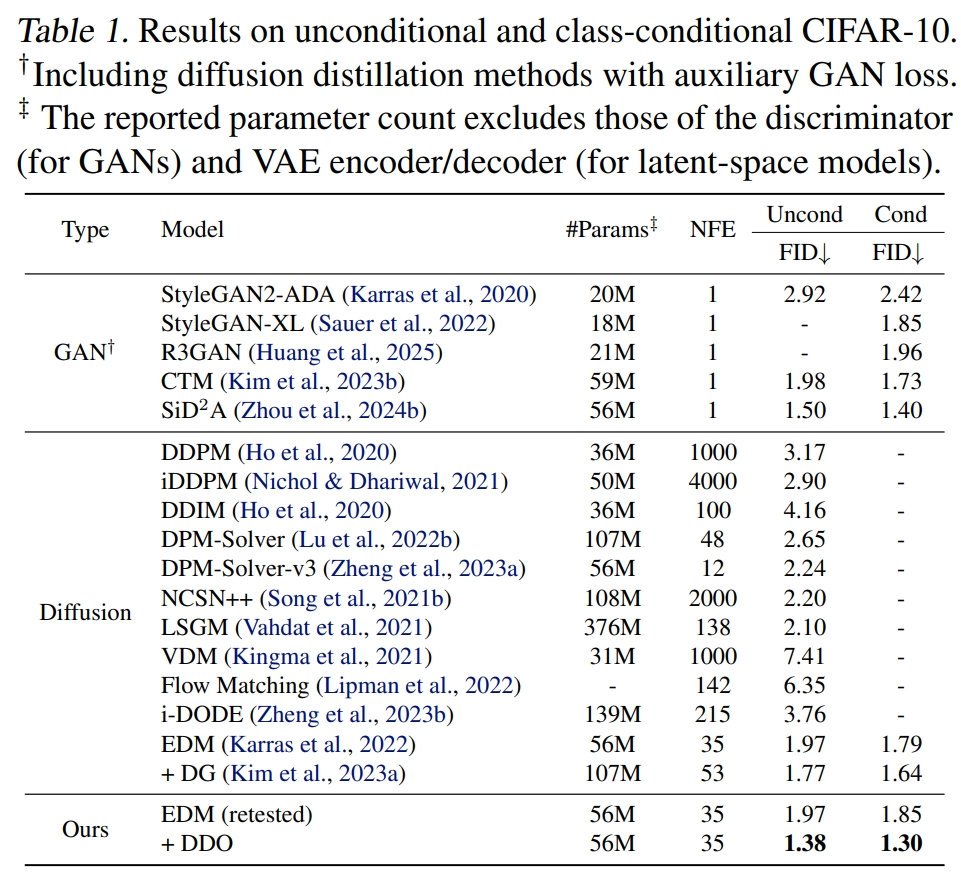

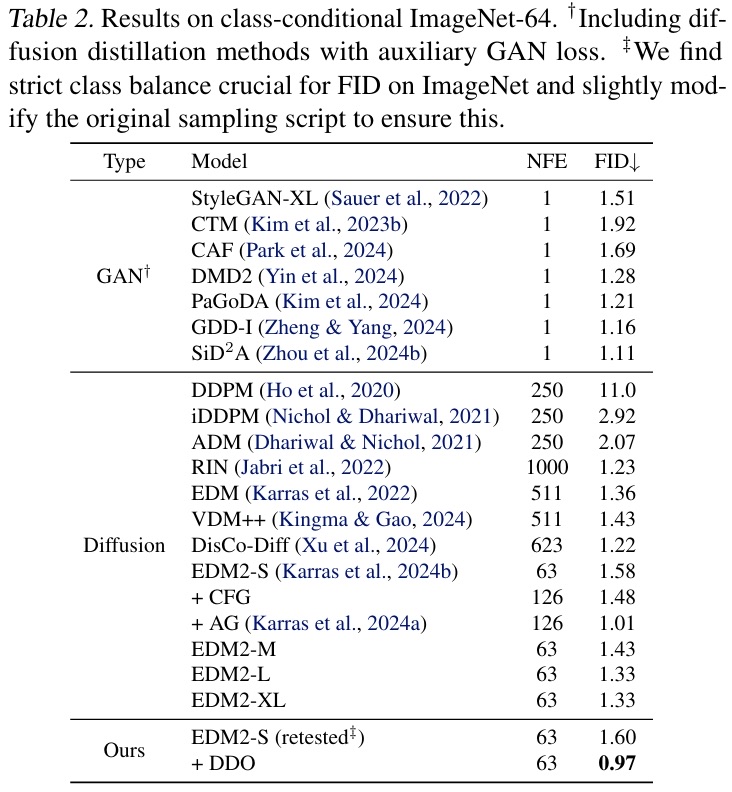

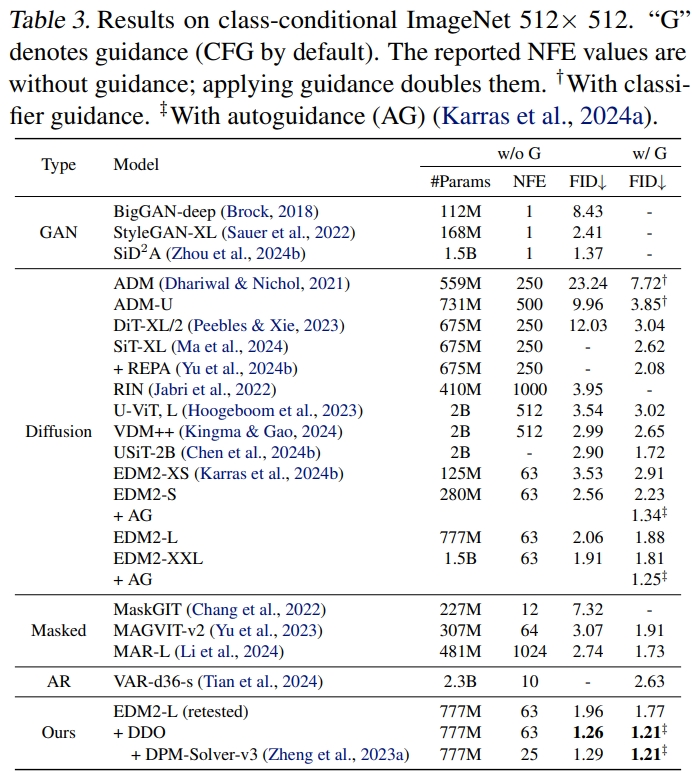

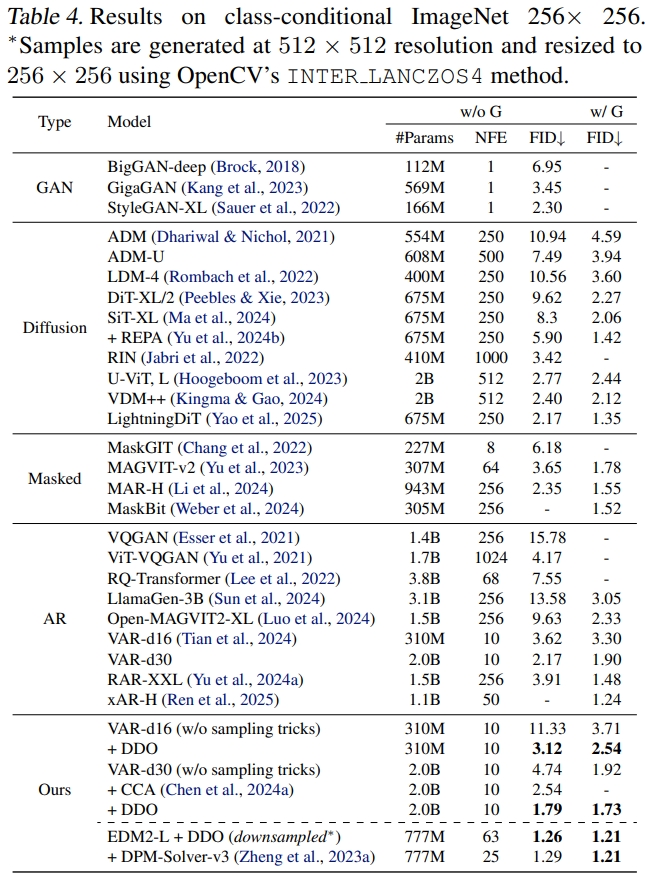

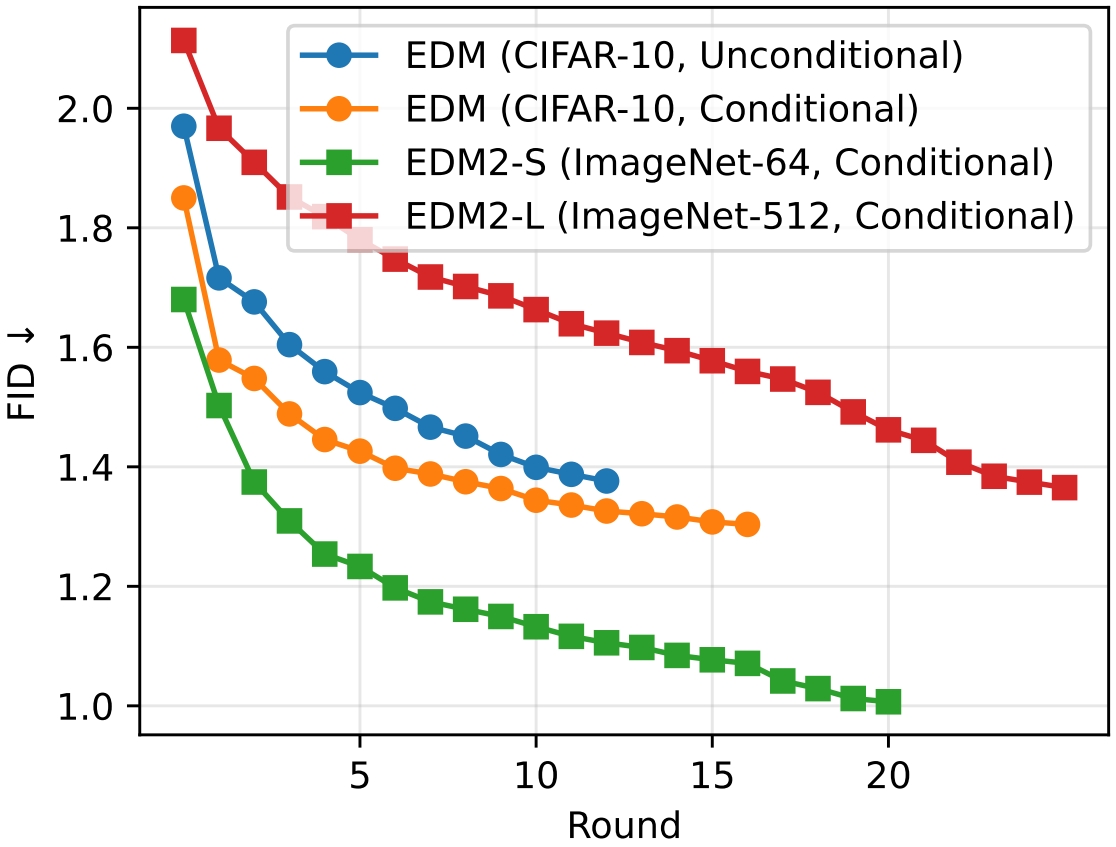

While likelihood-based generative models, particularly diffusion and autoregressive models, have achieved remarkable fidelity in visual generation, the maximum likelihood estimation (MLE) objective, which minimizes the forward KL divergence, inherently suffers from a mode-covering tendency that limits the generation quality under limited model capacity. In this work, we propose Direct Discriminative Optimization (DDO) as a unified framework that integrates likelihood-based generative training and GAN-type discrimination to bypass this fundamental constraint by exploiting reverse KL and self-generated negative signals. Our key insight is to parameterize a discriminator implicitly using the likelihood ratio between a learnable target model and a fixed reference model, drawing parallels with the philosophy of Direct Preference Optimization (DPO). Unlike GANs, this parameterization eliminates the need for joint training of generator and discriminator networks, allowing for direct, efficient, and effective finetuning of a well-trained model to its full potential beyond the limits of MLE. DDO can be performed iteratively in a self-play manner for progressive model refinement, with each round requiring less than 1% of pretraining epochs. Our experiments demonstrate the effectiveness of DDO by significantly advancing the previous SOTA diffusion model EDM, reducing FID scores from 1.79/1.58/1.96 to new records of 1.30/0.97/1.26 on CIFAR-10/ImageNet-64/ImageNet 512x512 datasets without any guidance mechanisms, and by consistently improving both guidance-free and CFG-enhanced FIDs of visual autoregressive models on ImageNet 256x256.
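
To make the key insight concrete, here is a minimal toy sketch, not the authors' implementation. It assumes the simplest form of the objective: binary cross-entropy on the implicit discriminator sigmoid(log p_θ(x) - log p_ref(x)), with data as positives and the frozen reference model's own samples as negatives. The model is a categorical distribution, so log-likelihoods are exact and the expectations can be summed rather than sampled. The names `ddo_loss_and_grad`, `p_data` and `p_ref` and the toy distributions are hypothetical. A real diffusion or autoregressive model would instead use minibatches of data and self-generated samples, and a diffusion model would need a tractable surrogate for log p_θ.

```python
import numpy as np


def log_softmax(logits: np.ndarray) -> np.ndarray:
    """Numerically stable log-softmax over a 1-D array of logits."""
    z = logits - logits.max()
    return z - np.log(np.exp(z).sum())


def ddo_loss_and_grad(theta, log_p_ref, p_data):
    """Toy DDO-style loss on a categorical model, with exact expectations.

    Implicit discriminator: d(x) = sigmoid(log p_theta(x) - log p_ref(x)).
    Positives are weighted by p_data, negatives by the frozen reference p_ref.
    """
    log_p = log_softmax(theta)
    p = np.exp(log_p)
    p_ref = np.exp(log_p_ref)
    r = log_p - log_p_ref  # log-likelihood ratio = discriminator logit
    # -log sigmoid(r) = log(1 + e^{-r});  -log(1 - sigmoid(r)) = log(1 + e^{r})
    loss = np.sum(p_data * np.logaddexp(0.0, -r) + p_ref * np.logaddexp(0.0, r))
    sig = 1.0 / (1.0 + np.exp(-r))
    g = (p_data + p_ref) * sig - p_data  # d(loss)/dr, per category
    grad = g - p * g.sum()               # chain rule through log-softmax
    return loss, grad


# Hypothetical toy setup: an over-dispersed reference that under-weights the dominant mode.
p_data = np.array([0.7, 0.2, 0.1])
p_ref = np.array([0.4, 0.35, 0.25])
log_p_ref = np.log(p_ref)

theta = log_p_ref.copy()  # finetuning: the target model starts as a copy of the reference
for _ in range(5000):
    _, grad = ddo_loss_and_grad(theta, log_p_ref, p_data)
    theta -= 0.5 * grad   # plain gradient descent with step size 0.5

p_theta = np.exp(log_softmax(theta))
print(np.round(p_theta, 3))
```

Starting the target at the reference (so the log-ratio begins at zero) mirrors finetuning a pretrained model. Gradient descent then drives `p_theta` toward `p_data`, because this loss is minimized exactly where the model matches the data distribution.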

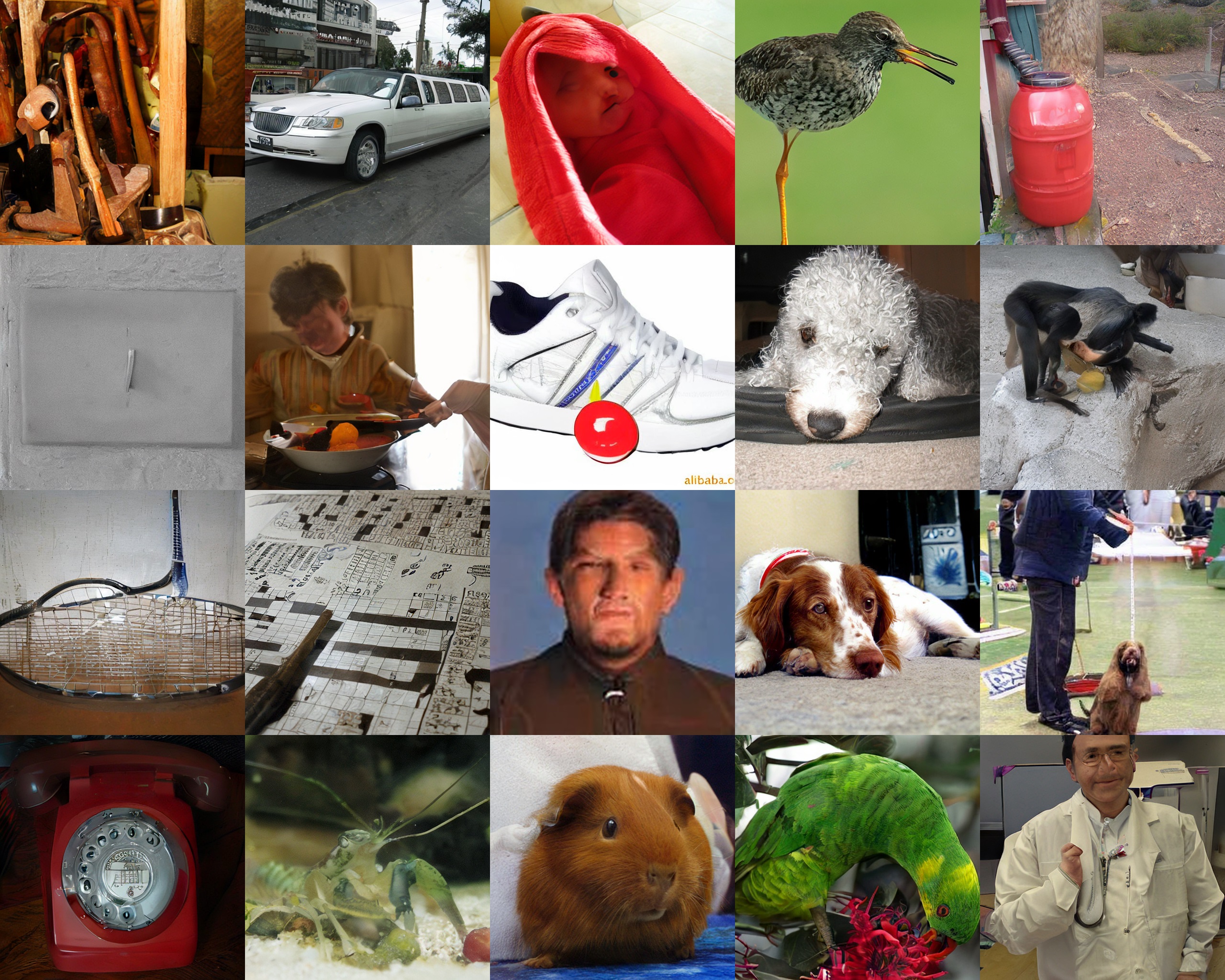

(a) EDM2-L (FID 1.96)

(b) EDM2-L+DDO (FID 1.26); both sampled without CFG

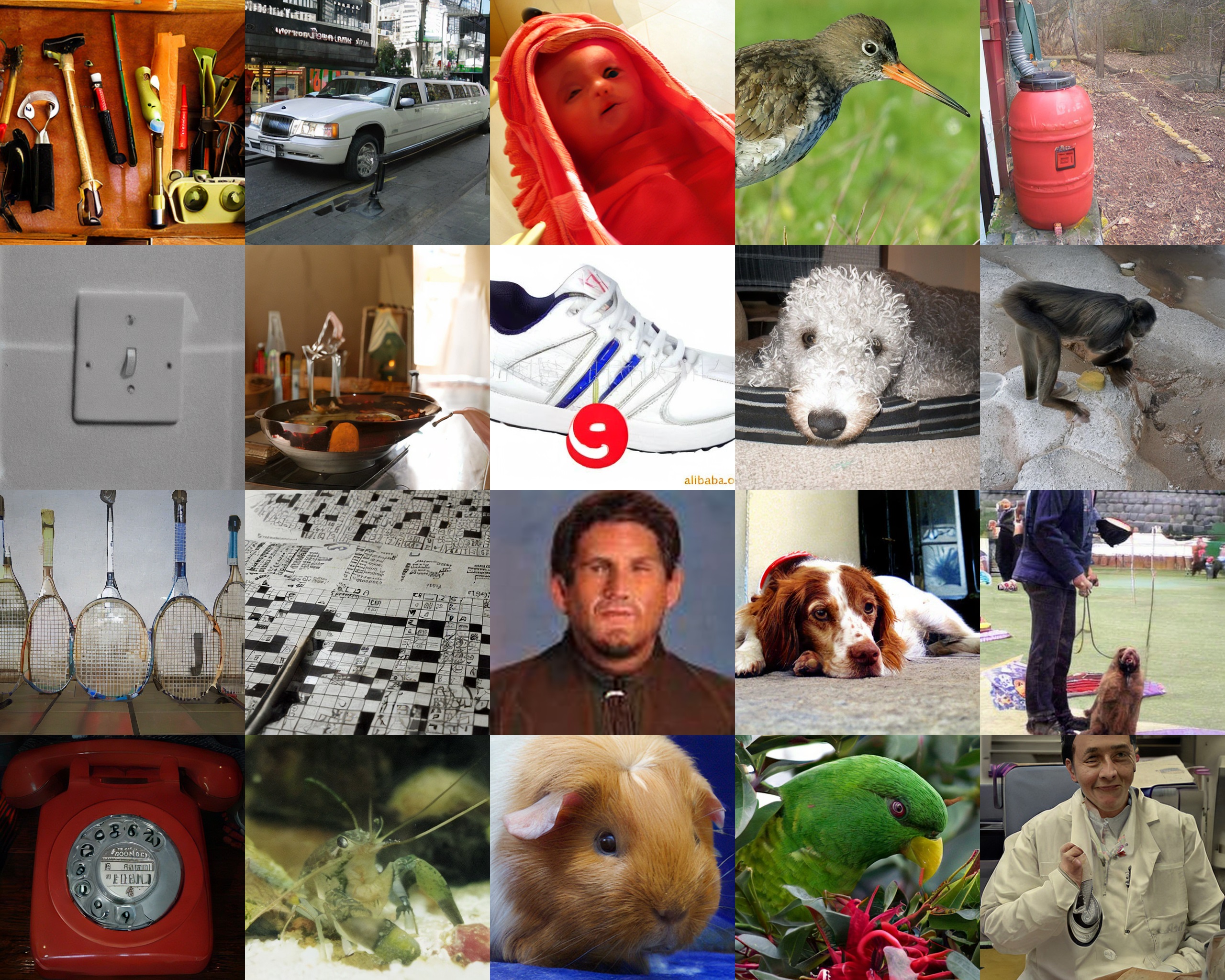

(a) VAR-d30 (FID 4.74)

(b) VAR-d30+DDO (FID 1.79); both sampled without CFG

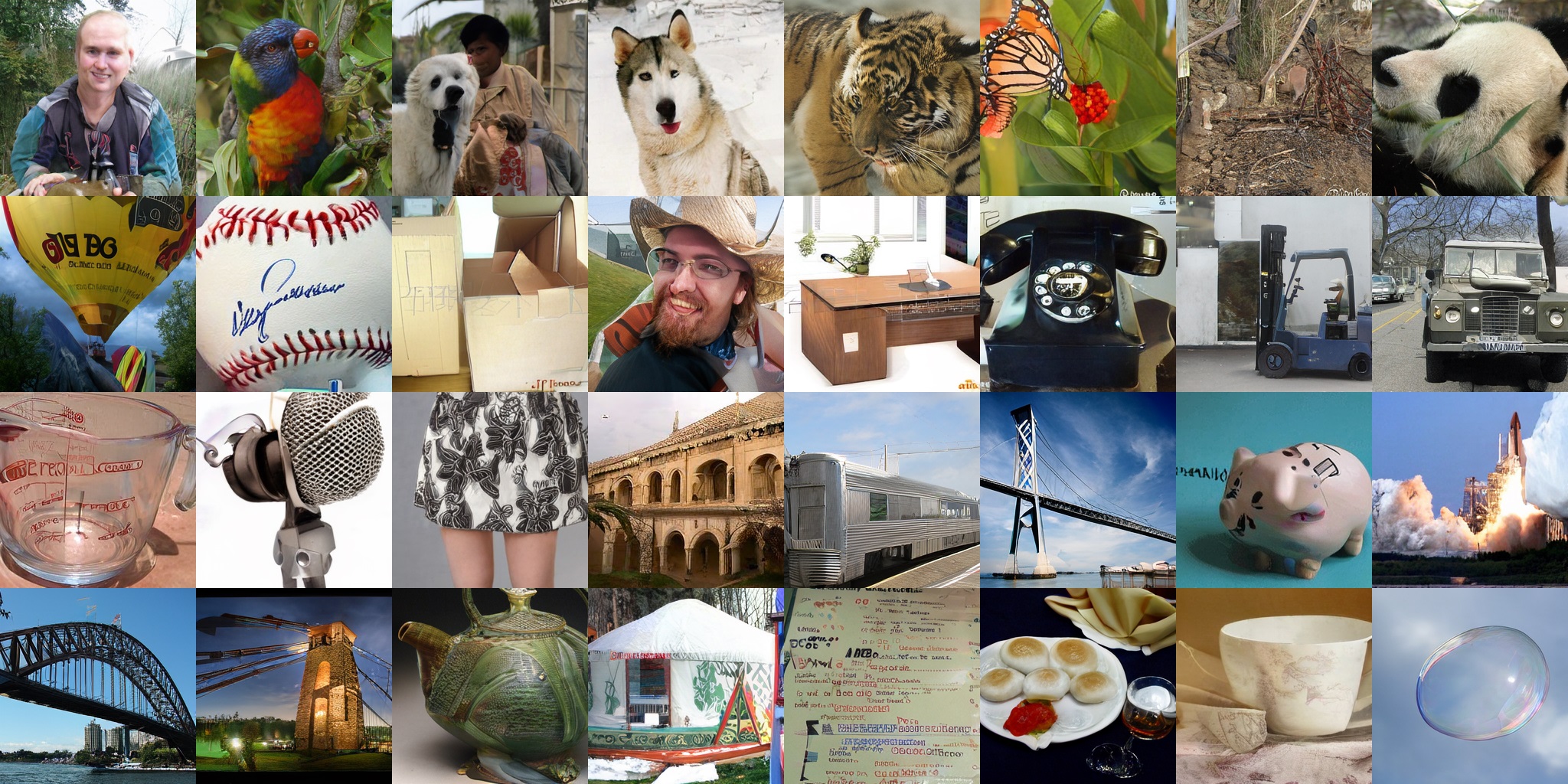

DDO Pipeline

Direct Discriminative Optimization (DDO) is an effective and efficient finetuning method for enhancing visual likelihood-based generative models, which:

- Bridges likelihood-based generative models and GANs.

- Bypasses the mode-covering limitation of the traditional maximum likelihood estimation (MLE) objective.

- Adopts an implicit discriminator parameterization inspired by Direct Preference Optimization (DPO), eliminating the engineering complexity of jointly training separate generator and discriminator networks.
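
The implicit parameterization can be motivated with the standard GAN optimal-discriminator argument. The derivation below is a sketch that assumes the simplest binary cross-entropy form, with real data as positives and samples from the frozen reference model p_ref as negatives. The paper's exact objective may add weighting terms, and for diffusion models the intractable exact log-likelihood must be replaced by a surrogate.

$$
d_\theta(x) = \sigma\!\left(\log \frac{p_\theta(x)}{p_{\mathrm{ref}}(x)}\right) = \frac{p_\theta(x)}{p_\theta(x) + p_{\mathrm{ref}}(x)}
$$

$$
\mathcal{L}(\theta) = -\,\mathbb{E}_{x \sim p_{\mathrm{data}}}\big[\log d_\theta(x)\big] \;-\; \mathbb{E}_{x \sim p_{\mathrm{ref}}}\big[\log\big(1 - d_\theta(x)\big)\big]
$$

For any discriminator, this loss is minimized pointwise at $d^*(x) = \frac{p_{\mathrm{data}}(x)}{p_{\mathrm{data}}(x) + p_{\mathrm{ref}}(x)}$. Matching the implicit discriminator to this optimum gives, wherever $p_{\mathrm{ref}}(x) > 0$,

$$
\frac{p_\theta(x)}{p_\theta(x) + p_{\mathrm{ref}}(x)} = \frac{p_{\mathrm{data}}(x)}{p_{\mathrm{data}}(x) + p_{\mathrm{ref}}(x)}
\;\Longleftrightarrow\;
p_\theta(x)\,p_{\mathrm{ref}}(x) = p_{\mathrm{data}}(x)\,p_{\mathrm{ref}}(x)
\;\Longleftrightarrow\;
p_\theta(x) = p_{\mathrm{data}}(x).
$$

The discriminator's optimum is therefore reached exactly when the target model matches the data distribution, so optimizing the discriminator loss directly trains the generative model, with no separate discriminator network.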

Results

DDO significantly advances the previous state-of-the-art diffusion models EDM (CIFAR-10, ImageNet-64) and EDM2 (ImageNet 512x512), as well as the visual autoregressive model VAR (ImageNet 256x256).

State-of-the-art FIDs

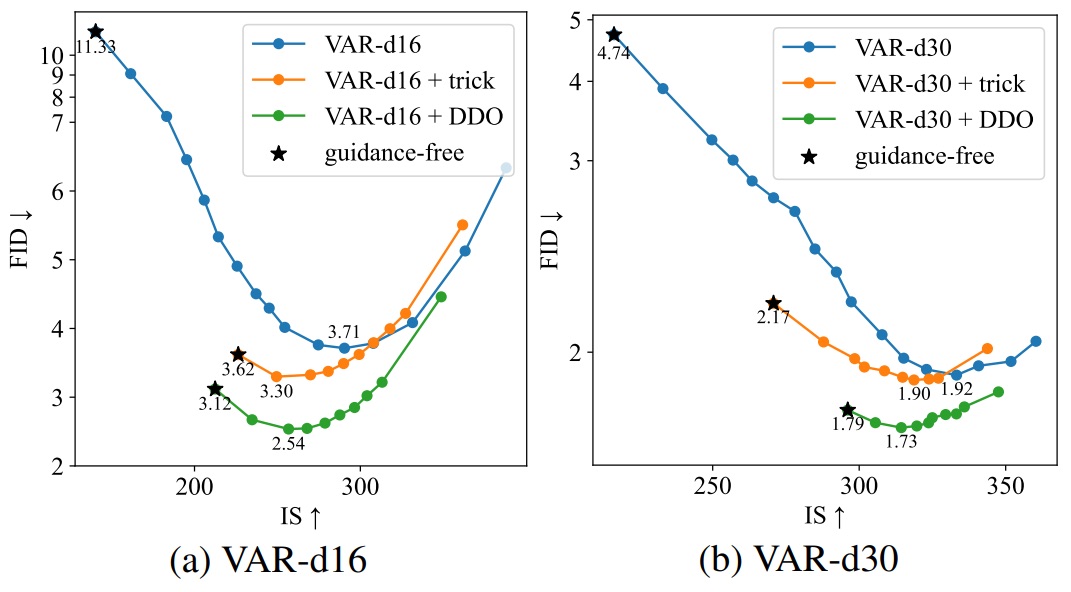

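DDO also yields a superior FID-IS trade-off when combined with classifier-free guidance (CFG). Without any guidance, the DDO-finetuned model surpasses the CFG-enhanced base model, halving inference cost, since CFG requires both a conditional and an unconditional network evaluation per sampling step.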
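
The factor of two follows from how CFG is evaluated: each guided step combines a conditional and an unconditional prediction, so it needs two network evaluations, while guidance-free sampling needs one. The sketch below assumes a hypothetical denoiser signature `model(x, t, label)` (with `label=None` meaning unconditional) and the common convention `eps_uncond + w * (eps_cond - eps_uncond)`, where `w = 1` recovers plain conditional sampling. `CountingDenoiser` and the update rule are placeholders, not a real network or sampler.

```python
from typing import Callable, Optional

import numpy as np

# Hypothetical denoiser signature: model(x, t, label) -> noise prediction.
Denoiser = Callable[[np.ndarray, float, Optional[int]], np.ndarray]


def cfg_eps(model: Denoiser, x: np.ndarray, t: float, label: int, w: float) -> np.ndarray:
    """Classifier-free guidance: one conditional AND one unconditional pass."""
    eps_cond = model(x, t, label)
    eps_uncond = model(x, t, None)
    return eps_uncond + w * (eps_cond - eps_uncond)


def guidance_free_eps(model: Denoiser, x: np.ndarray, t: float, label: int) -> np.ndarray:
    """Guidance-free sampling: a single conditional pass per step."""
    return model(x, t, label)


class CountingDenoiser:
    """Toy stand-in for a network that counts how often it is evaluated."""

    def __init__(self) -> None:
        self.calls = 0

    def __call__(self, x: np.ndarray, t: float, label: Optional[int]) -> np.ndarray:
        self.calls += 1
        shift = 0.0 if label is None else 0.1 * (label + 1)
        return 0.5 * x + shift


def count_evals(use_cfg: bool, num_steps: int = 32, w: float = 1.5) -> int:
    """Run a placeholder sampling loop and return the number of network calls."""
    model = CountingDenoiser()
    x = np.zeros(4)
    for i in range(num_steps):
        t = 1.0 - i / num_steps
        if use_cfg:
            eps = cfg_eps(model, x, t, 3, w)
        else:
            eps = guidance_free_eps(model, x, t, 3)
        x = x - 0.01 * eps  # placeholder update, not a real sampler
    return model.calls


print(count_evals(use_cfg=True), count_evals(use_cfg=False))  # prints: 64 32
```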

DDO can be performed iteratively in a self-play manner for progressive model refinement, with each round requiring less than 1% of pretraining epochs. On diffusion models, we observe steady FID improvement over successive rounds.
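
The iterative procedure can be sketched on a categorical toy model, under the assumption (suggested by "self-play") that each round freezes the latest finetuned model as the new reference and finetunes a copy of it. The per-round step budget is deliberately small to mimic a short finetuning round. `self_play`, `ddo_grad` and the toy distributions are hypothetical, and because the toy model has unlimited capacity and uses exact expectations, it does not reproduce the paper's setting.

```python
import numpy as np


def log_softmax(logits: np.ndarray) -> np.ndarray:
    """Numerically stable log-softmax over a 1-D array of logits."""
    z = logits - logits.max()
    return z - np.log(np.exp(z).sum())


def ddo_grad(theta, log_p_ref, p_data):
    """Gradient w.r.t. logits of the exact-expectation toy DDO loss."""
    log_p = log_softmax(theta)
    r = log_p - log_p_ref                 # implicit discriminator logit
    sig = 1.0 / (1.0 + np.exp(-r))
    g = (p_data + np.exp(log_p_ref)) * sig - p_data
    return g - np.exp(log_p) * g.sum()    # chain rule through log-softmax


def kl(p, q) -> float:
    """KL(p || q) for strictly positive categorical distributions."""
    return float(np.sum(p * (np.log(p) - np.log(q))))


def self_play(log_p_init, p_data, rounds=5, steps_per_round=50, lr=0.5):
    log_p_ref = log_p_init.copy()
    kls = [kl(p_data, np.exp(log_p_ref))]
    for _ in range(rounds):
        theta = log_p_ref.copy()          # target starts from the current reference
        for _ in range(steps_per_round):  # short finetuning budget per round
            theta -= lr * ddo_grad(theta, log_p_ref, p_data)
        log_p_ref = log_softmax(theta)    # finetuned model becomes the next reference
        kls.append(kl(p_data, np.exp(log_p_ref)))
    return np.exp(log_p_ref), kls


p_data = np.array([0.7, 0.2, 0.1])
p_final, kls = self_play(np.log(np.array([0.4, 0.35, 0.25])), p_data)
for k, v in enumerate(kls):
    print(f"round {k}: KL(p_data || model) = {v:.5f}")
```

Because every round restarts with the discriminator logit at zero, in this toy the first gradient step of each round equals half of a gradient step on the MLE cross-entropy; subsequent steps follow the discriminative objective.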

Citation

@inproceedings{zheng2025direct,
  title={Direct Discriminative Optimization: Your Likelihood-Based Visual Generative Model is Secretly a GAN Discriminator},
  author={Zheng, Kaiwen and Chen, Yongxin and Chen, Huayu and He, Guande and Liu, Ming-Yu and Zhu, Jun and Zhang, Qinsheng},
  booktitle={International Conference on Machine Learning (ICML)},
  year={2025}
}