Abstract

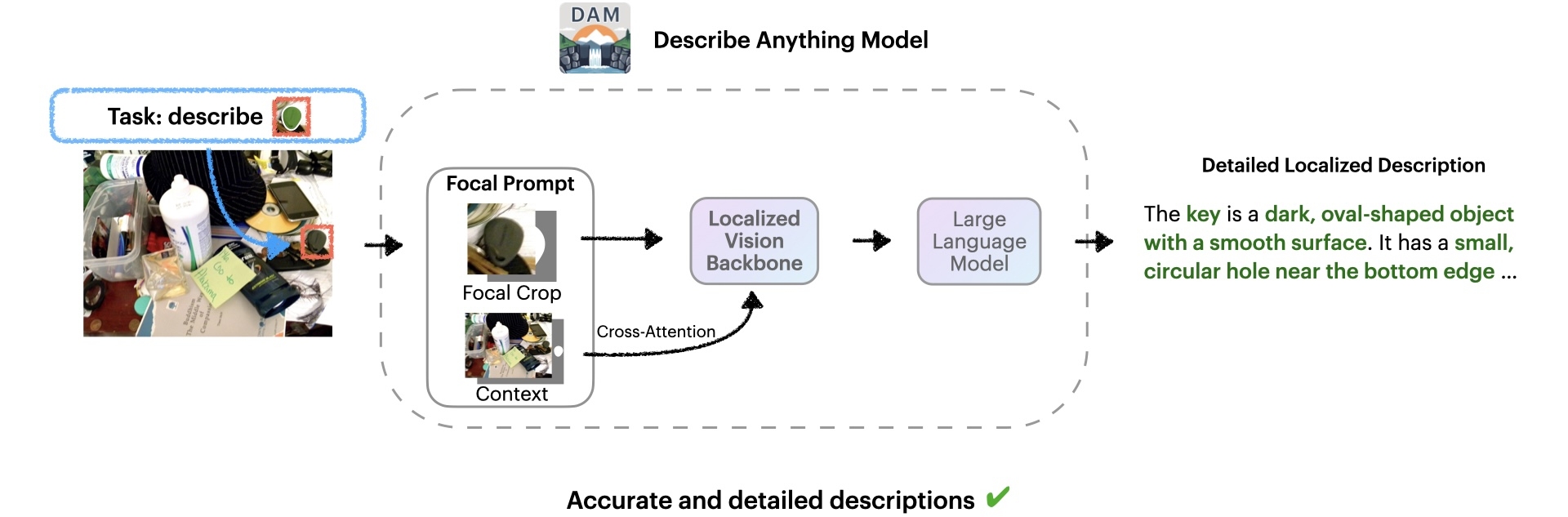

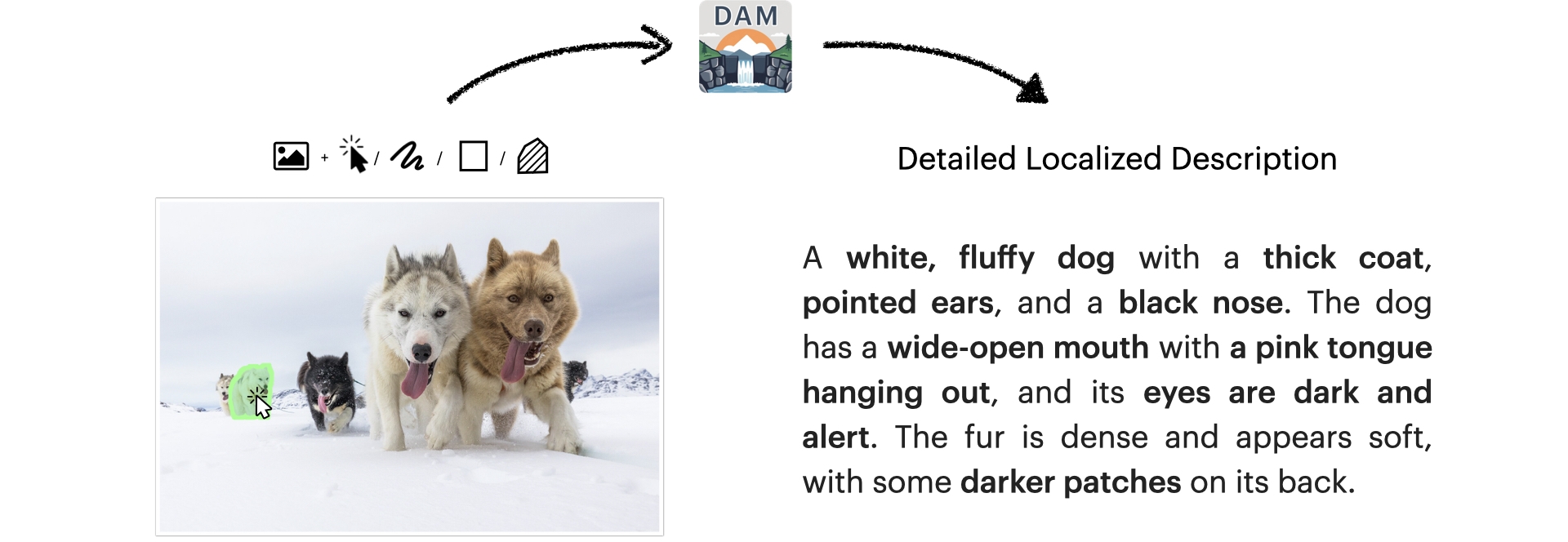

We introduce the Describe Anything Model (DAM), a model that generates detailed localized captions for user-specified regions within images and videos. This task, called Detailed Localized Captioning (DLC), is challenging because it requires both local details and global context.

DAM preserves both local details and global context through two key innovations: a focal prompt, which ensures high-resolution encoding of targeted regions, and a localized vision backbone, which integrates precise localization with its broader context. To tackle the scarcity of high-quality DLC data, we propose a Semi-supervised learning (SSL)-based Data Pipeline (DLC-SDP). DLC-SDP starts with existing segmentation datasets and expands to unlabeled web images using SSL. We also introduce DLC-Bench, a benchmark designed to evaluate DLC without relying on reference captions. DAM sets a new state of the art on 7 benchmarks spanning keyword-level, phrase-level, and detailed multi-sentence localized image and video captioning.

Method & Innovation

Architecture

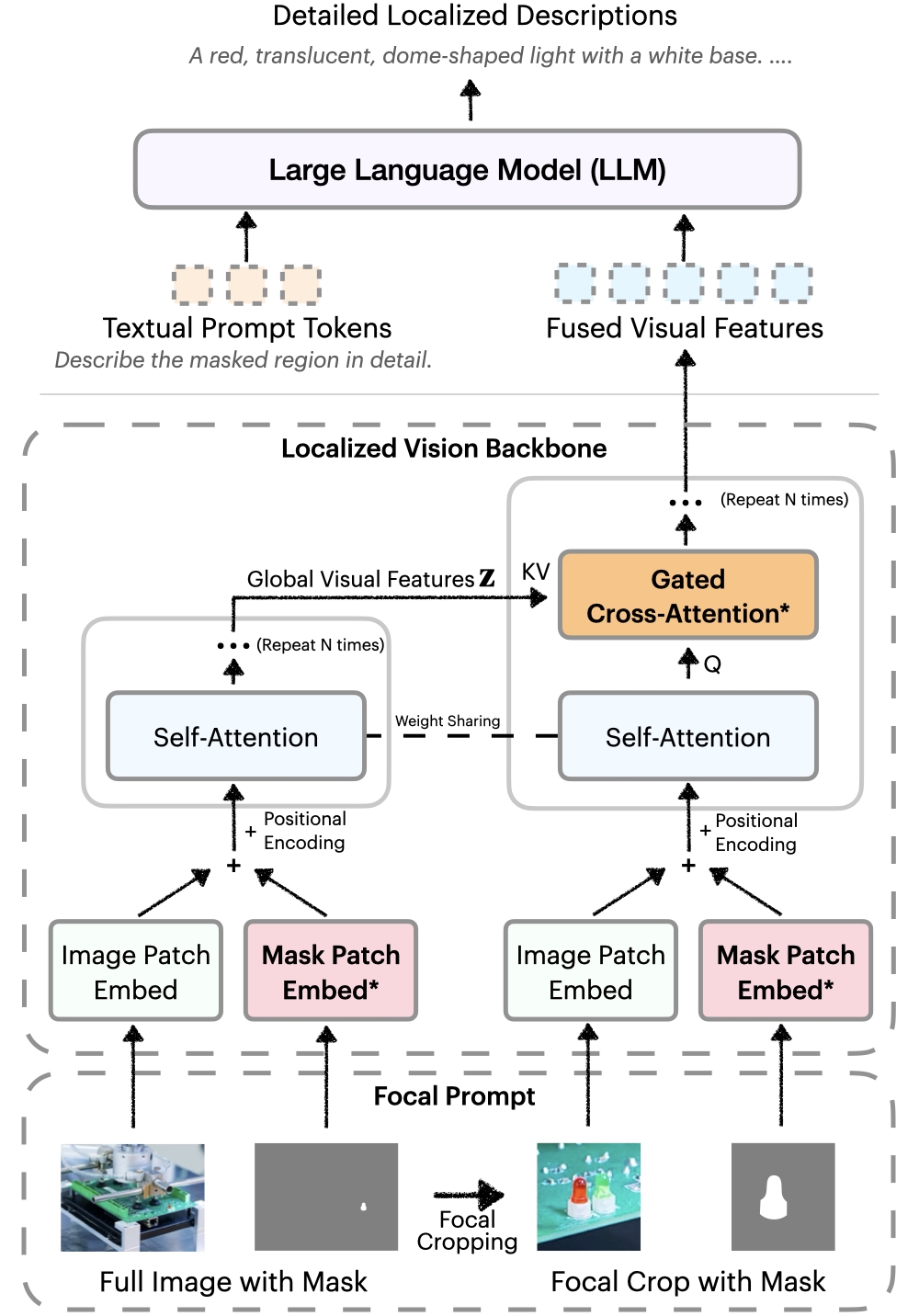

DAM introduces two key innovations:

- Focal Prompt: Ensures high-resolution encoding of targeted regions while maintaining global context

- Localized Vision Backbone: Integrates precise localization with broader scene understanding

Image credit: Objects365 Dataset (CC BY 4.0)
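As a rough illustration of the focal prompt idea, the sketch below takes a binary region mask, finds its bounding box, and expands that box around its center so the crop keeps some surrounding context. The helper names (`mask_bbox`, `focal_crop_box`, `crop`) and the `context_scale=3.0` default are assumptions made for this example, not values stated on this page. The resize of the crop to the encoder's input resolution is omitted.

```python
def mask_bbox(mask):
    """Return (top, left, bottom, right) of the nonzero pixels; bottom/right are exclusive."""
    rows = [r for r, row in enumerate(mask) if any(row)]
    cols = [c for c in range(len(mask[0])) if any(row[c] for row in mask)]
    if not rows:
        raise ValueError("mask is empty")
    return rows[0], cols[0], rows[-1] + 1, cols[-1] + 1


def focal_crop_box(mask, context_scale=3.0):
    """Expand the mask's bounding box around its center, clamped to the image bounds.

    context_scale is a hypothetical knob: 1.0 keeps the tight box, larger
    values include more of the surrounding scene.
    """
    height, width = len(mask), len(mask[0])
    top, left, bottom, right = mask_bbox(mask)
    center_y, center_x = (top + bottom) / 2, (left + right) / 2
    half_h = (bottom - top) * context_scale / 2
    half_w = (right - left) * context_scale / 2
    return (
        max(0, int(center_y - half_h)),
        max(0, int(center_x - half_w)),
        min(height, int(center_y + half_h + 0.5)),
        min(width, int(center_x + half_w + 0.5)),
    )


def crop(grid, box):
    """Cut the same box out of an image or a mask stored as a list of rows."""
    top, left, bottom, right = box
    return [row[left:right] for row in grid[top:bottom]]


# Toy 8x8 mask with a 2x2 region in the middle.
mask = [[0] * 8 for _ in range(8)]
for r in (3, 4):
    for c in (3, 4):
        mask[r][c] = 1

box = focal_crop_box(mask)
focal_mask = crop(mask, box)  # the mask is cropped with the same box as the image
print(box, len(focal_mask), len(focal_mask[0]))
```

Cropping the image and the mask with the same box keeps the two spatially aligned, so the focal crop and the full image can both be passed to the backbone.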

We introduce a localized vision backbone that integrates global and focal features. Images and masks are aligned spatially, and gated cross-attention layers fuse detailed local cues with global context. New parameters are initialized to zero, preserving pre-trained capabilities. This design yields richer, more context-aware descriptions.
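The zero-initialization point can be made concrete with a small sketch. It assumes a Flamingo-style `tanh` gate on a residual cross-attention branch in which focal tokens attend to global tokens. The gate form, the attention direction, and all function names are assumptions for illustration, and the learned query/key/value projections are left out. With the gate at zero, the layer returns its input unchanged, which is how a newly added layer can leave a pre-trained model's behavior intact at the start of training.

```python
import math


def softmax(row):
    """Numerically stable softmax over one list of scores."""
    peak = max(row)
    exps = [math.exp(v - peak) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def cross_attention(queries, keys, values):
    """Scaled dot-product attention: every query row attends over all key rows."""
    scale = 1.0 / math.sqrt(len(queries[0]))
    outputs = []
    for q in queries:
        scores = [scale * sum(a * b for a, b in zip(q, k)) for k in keys]
        weights = softmax(scores)
        # Weighted sum of the value rows, one output column at a time.
        outputs.append([sum(w * v for w, v in zip(weights, col)) for col in zip(*values)])
    return outputs


def gated_cross_attention(focal_tokens, global_tokens, gate):
    """Residual update: focal + tanh(gate) * attention(focal -> global)."""
    attended = cross_attention(focal_tokens, global_tokens, global_tokens)
    g = math.tanh(gate)
    return [
        [f + g * a for f, a in zip(focal_row, attended_row)]
        for focal_row, attended_row in zip(focal_tokens, attended)
    ]


focal = [[1.0, 0.0], [0.0, 1.0]]
scene = [[0.5, 0.5], [2.0, -1.0], [0.0, 3.0]]

# A gate of zero is the identity, so the pre-trained features pass through untouched.
print(gated_cross_attention(focal, scene, gate=0.0) == focal)
# Once the gate moves away from zero, global context starts to mix in.
print(gated_cross_attention(focal, scene, gate=0.5))
```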

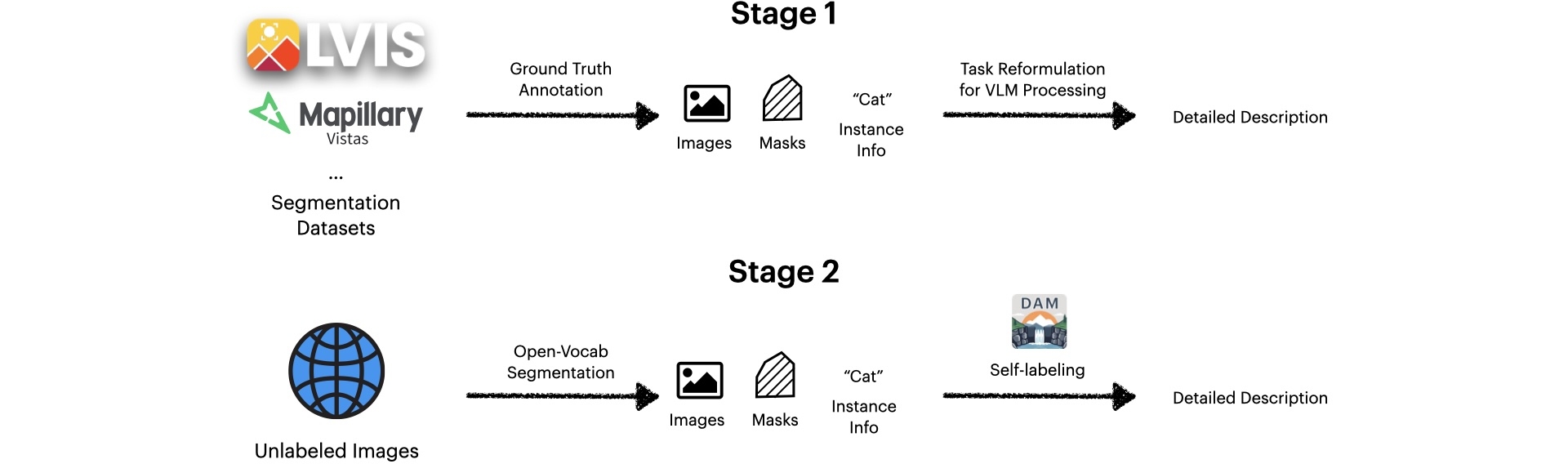

SSL Data Pipeline

Our two-stage pipeline addresses data scarcity:

- Stage 1: Expands keywords from segmentation datasets into detailed captions

- Stage 2: Leverages unlabeled web images through self-training, a form of semi-supervised learning

- Uses an LLM to generate multi-granular descriptions
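The self-training step in Stage 2 follows a generic pseudo-labeling pattern, sketched below in minimal form. Everything specific here is an assumption: `self_training_round`, the toy captioner, and the confidence-threshold filter are placeholders for illustration, and this page does not say how DLC-SDP filters its pseudo-labels.

```python
def self_training_round(caption_model, labeled, unlabeled, keep):
    """Caption unlabeled regions and add the pseudo-labels that pass the filter."""
    pseudo_labeled = []
    for region in unlabeled:
        caption, confidence = caption_model(region)
        if keep(region, caption, confidence):
            pseudo_labeled.append((region, caption))
    return labeled + pseudo_labeled


def toy_model(region):
    """Stand-in for a captioner trained on Stage 1 data; returns (caption, confidence)."""
    confidence = 0.9 if "clear" in region else 0.3
    return f"a detailed caption of {region}", confidence


labeled = [("seg_region_0", "a red mug with a curved handle")]
unlabeled = ["clear_web_region_1", "blurry_web_region_2"]

expanded = self_training_round(
    toy_model,
    labeled,
    unlabeled,
    keep=lambda region, caption, confidence: confidence >= 0.5,  # hypothetical filter
)
print(len(expanded))
```

In a full pipeline the expanded set would be used to retrain the captioner, and the round could be repeated.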

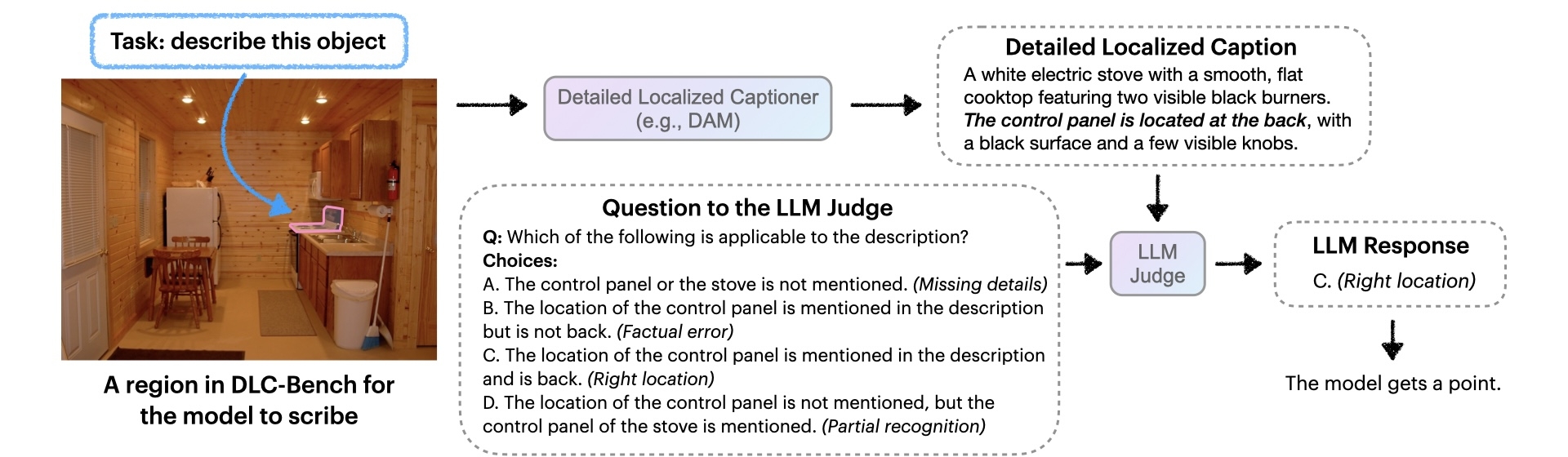

DLC-Bench

DLC-Bench evaluates detailed localized captions based on predefined positive and negative attributes for each region, eliminating the need for comprehensive reference captions. This provides more accurate and flexible evaluation of model performance.
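To show how attribute-based evaluation can work without a reference caption, here is a minimal sketch. It assumes each region comes with a list of positive attributes (which a good caption should mention) and negative attributes (which it should not). The substring-matching judge and the two fraction-based scores are simplifications invented for this example; the actual judging procedure and scoring rules are described in the paper.

```python
def mentions(caption, attribute):
    """Stand-in judge: naive case-insensitive substring match (an assumption)."""
    return attribute.lower() in caption.lower()


def score_caption(caption, positives, negatives, judge=mentions):
    """Score one caption against a region's positive and negative attributes."""
    hits = sum(judge(caption, attribute) for attribute in positives)
    errors = sum(judge(caption, attribute) for attribute in negatives)
    return {
        # Fraction of expected details that the caption covers.
        "positive": hits / len(positives) if positives else 1.0,
        # Fraction of incorrect details that the caption avoids.
        "negative": 1 - errors / len(negatives) if negatives else 1.0,
    }


scores = score_caption(
    "A white ceramic mug on a saucer",
    positives=["white", "chipped handle"],
    negatives=["saucer", "spoon"],
)
print(scores)  # {'positive': 0.5, 'negative': 0.5}
```

Because the caption is only checked attribute by attribute, a model is not penalized for correct details that a single reference caption happened to omit.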

Results & Capabilities

Detailed Localized Captioning

DLC involves generating comprehensive descriptions of specific regions within images and videos. Unlike traditional image captioning, DLC requires:

- Precise localization of target regions

- Detailed descriptions of visual attributes

- Integration of both local details and global context

- For videos: tracking and describing temporal changes

Describe Anything Model (DAM) performs detailed localized captioning (DLC), generating detailed and localized descriptions for user-specified regions within images. DAM accepts various user inputs for region specification, including clicks, scribbles, boxes, and masks. (Image credit: Markus Trienke (CC BY-SA 2.0))
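One simple way to handle several input types is to normalize them all to a binary mask before captioning. The sketch below covers only the box case, by plain rasterization. The function name and the (top, left, bottom, right) convention are assumptions for this example, and clicks or scribbles would need a segmentation model, which is not shown.

```python
def box_to_mask(height, width, box):
    """Rasterize (top, left, bottom, right), bottom/right exclusive, into a 0/1 mask."""
    top, left, bottom, right = box
    return [
        [1 if top <= r < bottom and left <= c < right else 0 for c in range(width)]
        for r in range(height)
    ]


for row in box_to_mask(3, 4, (1, 1, 3, 3)):
    print(row)
```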

DLC extends naturally to videos by describing how a specified region's appearance and context change over time. Models must track the target across frames, capturing evolving attributes, interactions, and subtle transformations.

Describe Anything Model (DAM) can also perform detailed localized video captioning, describing how a specified region changes over time. For localized video descriptions, specifying the region on only a single frame is sufficient. See our paper for full descriptions. (Video credit: MOSE Dataset (CC BY-NC-SA 4.0))

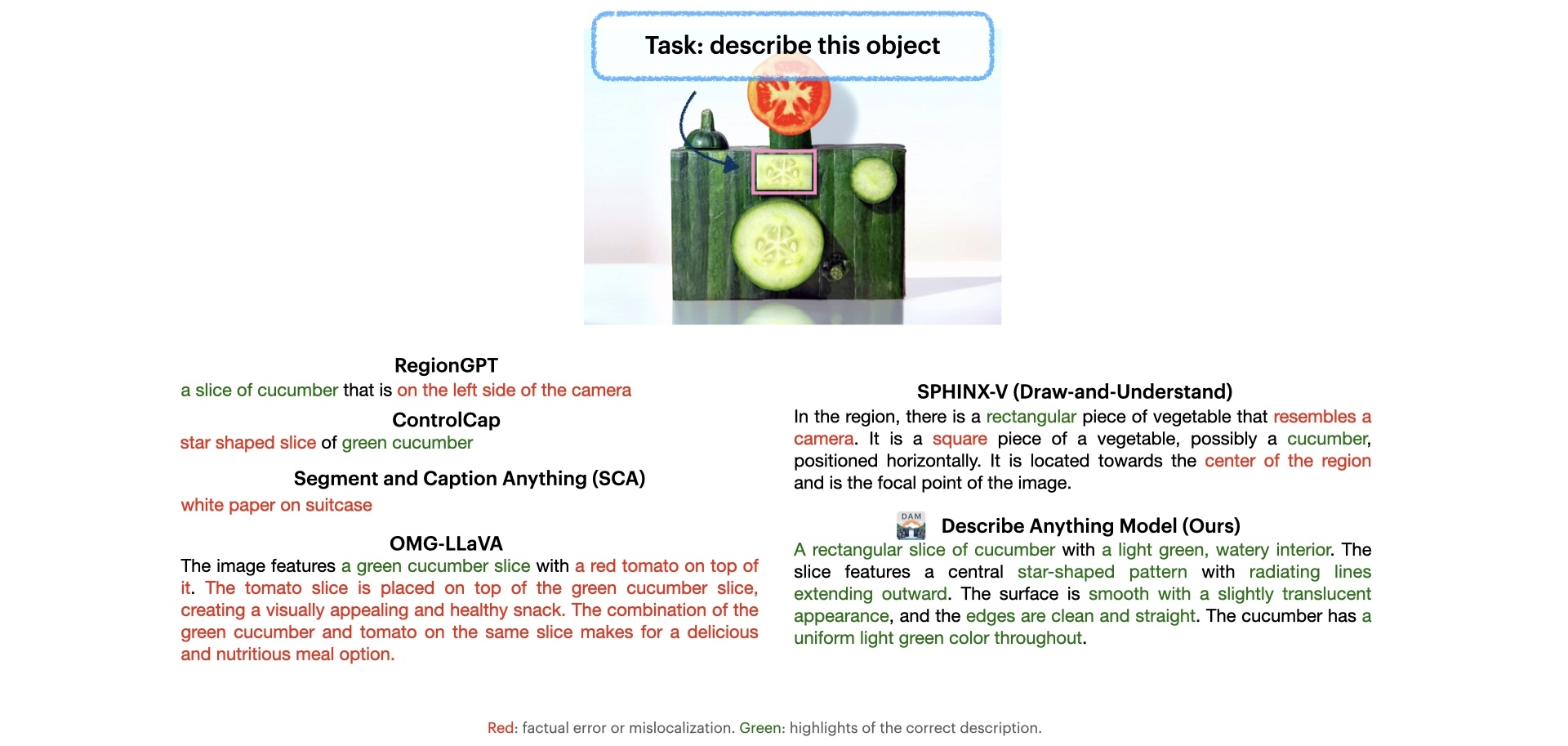

Highly Detailed Captioning

Our method excels at producing detailed descriptions of objects in both images and videos. By balancing the clarity of a focal region with global context, the model can highlight subtle features—like intricate patterns or changing textures—far beyond what general image-level captioning provides.

Compared to prior works, the description from our Describe Anything Model (DAM) is more detailed and accurate. (Image credit: SAM Materials (CC BY-SA 4.0))

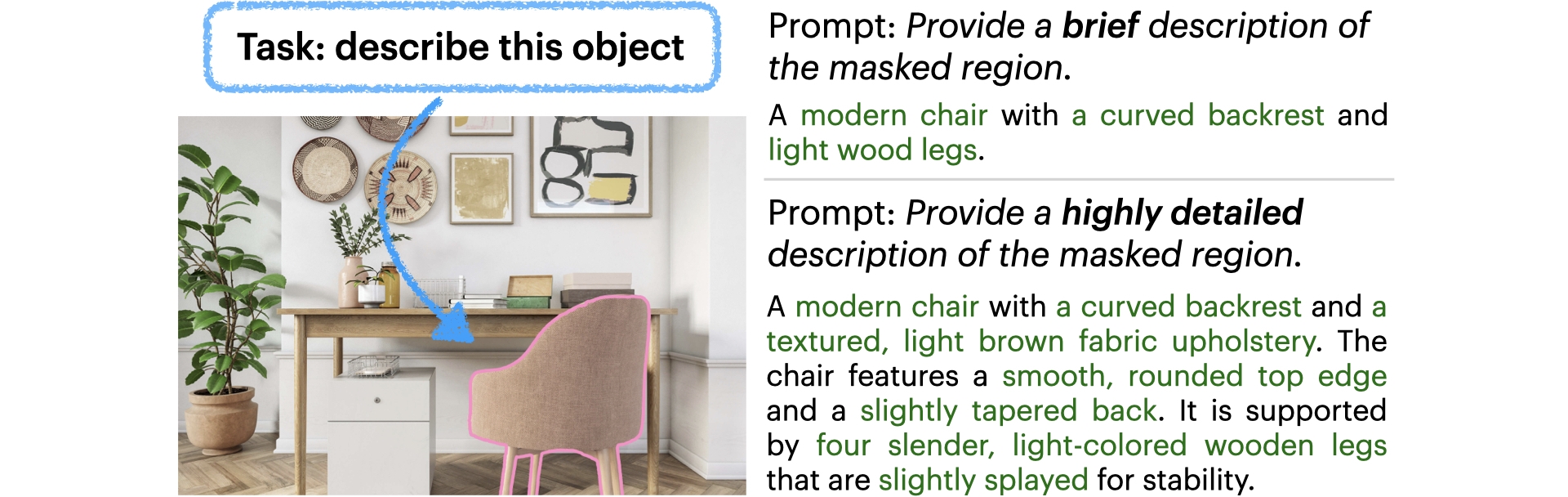

Instruction-controlled Captioning

Users can guide our model to produce descriptions of varying detail and style. Whether a brief summary or a long, intricate narrative is needed, the model can adapt its output. This flexibility benefits diverse use cases, from rapid labeling tasks to in-depth expert analyses.

Describe Anything Model (DAM) can generate descriptions of varying detail and style according to user instructions. (Image credit: SAM Materials (CC BY-SA 4.0))

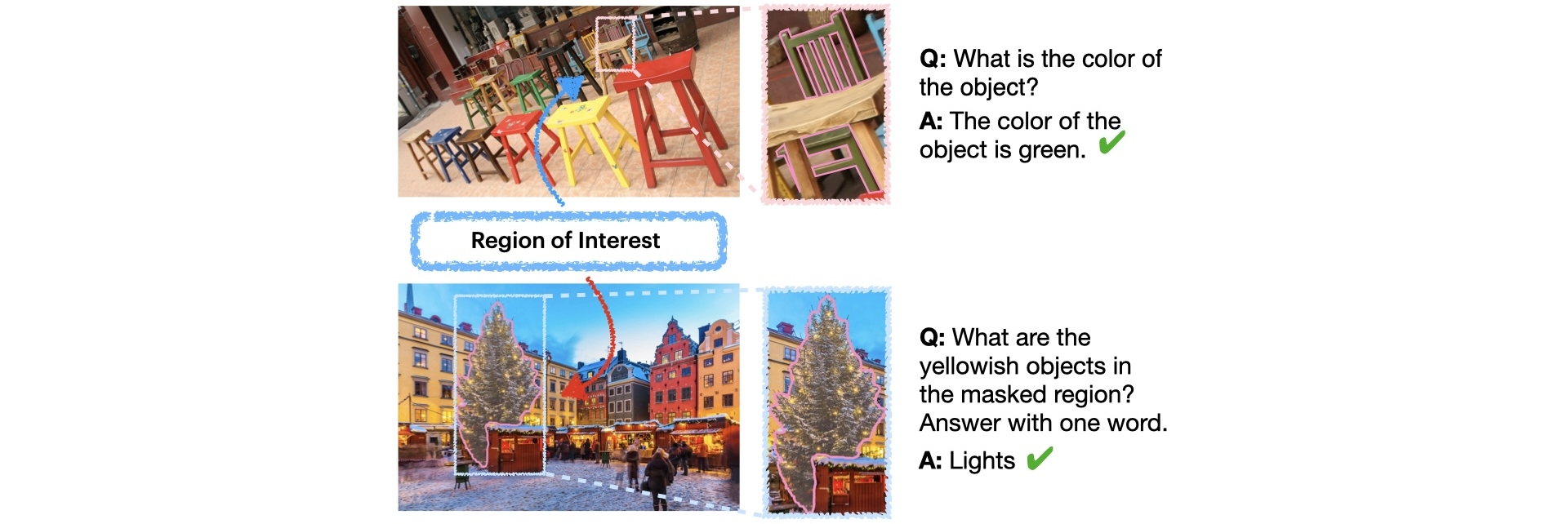

Zero-shot Regional QA

Beyond descriptions, our model can answer questions about a specified region without extra training data. Users can ask about the region's attributes, and the model draws on its localized understanding to provide accurate, context-driven answers. This capability enhances natural, interactive use cases.

Comparison

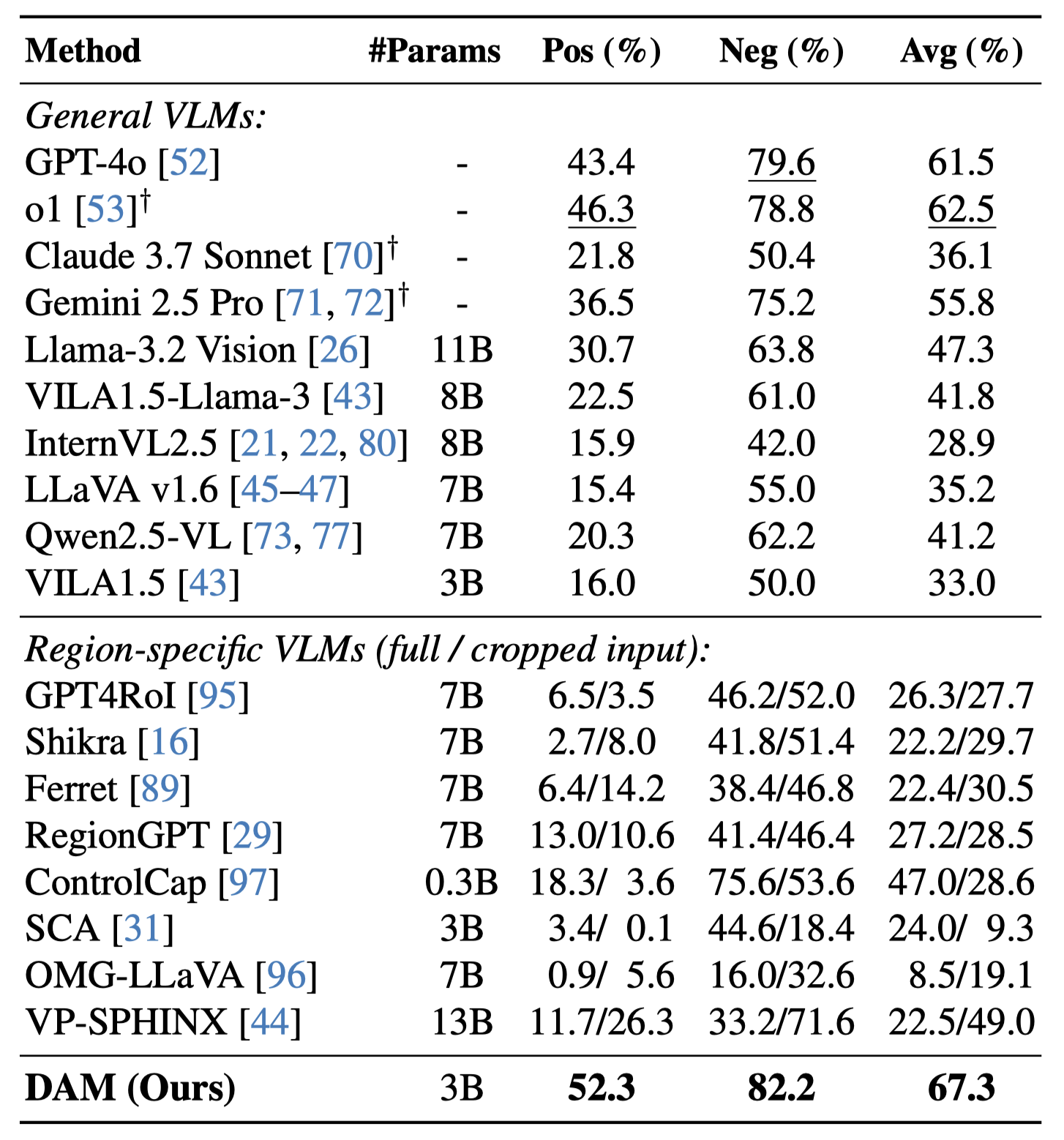

On DLC-Bench, our model outperforms existing solutions by producing more detailed and accurate localized descriptions with less hallucination. It surpasses models trained for general image-level tasks and those designed for localized reasoning, setting a new standard for detailed, context-rich captioning.

Our model outperforms existing solutions on DLC-Bench. See our paper for additional results.

Citation

@inproceedings{lian2025describe,
  title={Describe Anything: Detailed Localized Image and Video Captioning},
  author={Lian, Long and Ding, Yifan and Ge, Yunhao and Liu, Sifei and Mao, Hanzi and Li, Boyi and Pavone, Marco and Liu, Ming-Yu and Darrell, Trevor and Yala, Adam and Cui, Yin},
  booktitle={IEEE/CVF International Conference on Computer Vision (ICCV)},
  year={2025}
}