Abstract

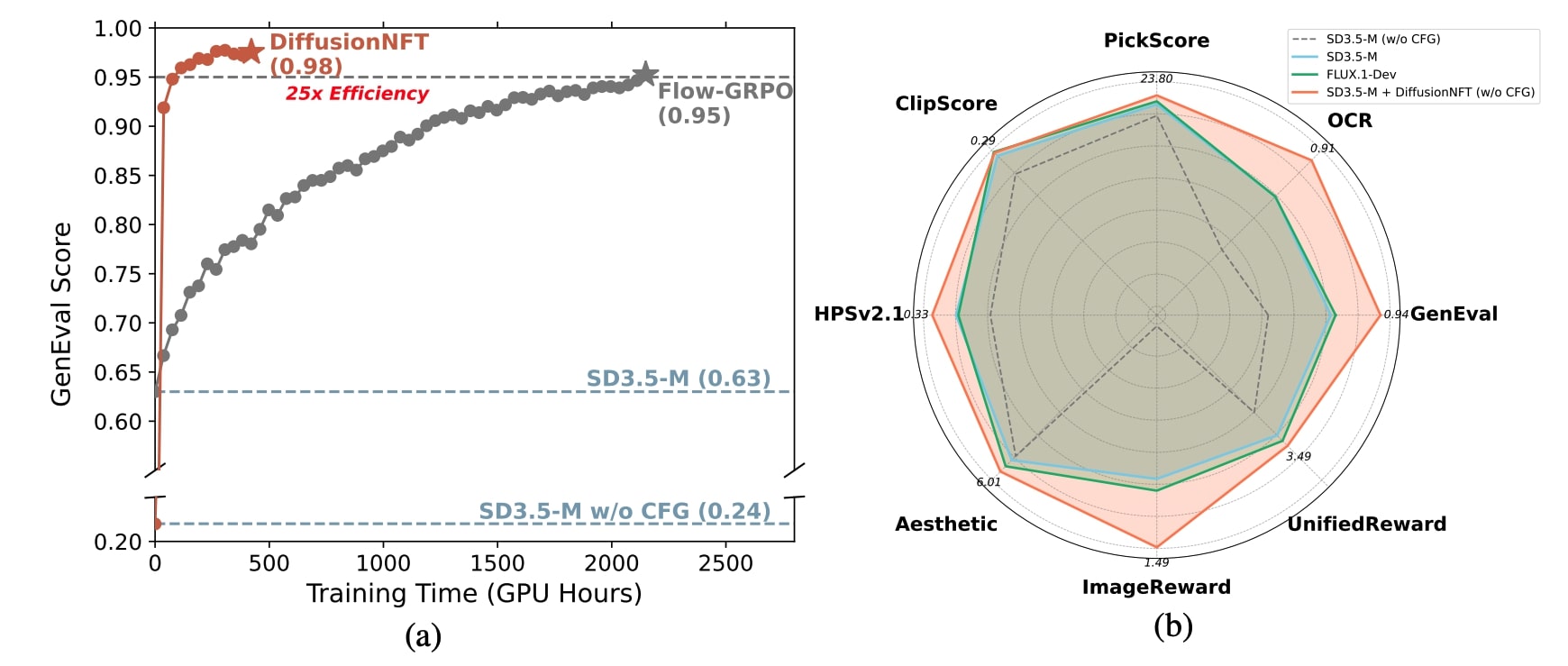

Online reinforcement learning (RL) has been central to post-training language models, but its extension to diffusion models remains challenging due to intractable likelihoods. Recent works discretize the reverse sampling process to enable GRPO-style training, yet they inherit fundamental drawbacks, including solver restrictions, forward–reverse inconsistency, and complicated integration with classifier-free guidance (CFG). We introduce Diffusion Negative-aware FineTuning (DiffusionNFT), a new online RL paradigm that optimizes diffusion models directly on the forward process via flow matching. DiffusionNFT contrasts positive and negative generations to define an implicit policy improvement direction, naturally incorporating reinforcement signals into the supervised learning objective. This formulation enables training with arbitrary black-box solvers, eliminates the need for likelihood estimation, and requires only clean images rather than sampling trajectories for policy optimization. DiffusionNFT is up to 25× more efficient than FlowGRPO in head-to-head comparisons, while being CFG-free. For instance, DiffusionNFT improves the GenEval score from 0.24 to 0.98 within 1k steps, while FlowGRPO reaches 0.95 only after more than 5k steps and additionally relies on CFG. By leveraging multiple reward models, DiffusionNFT significantly boosts the performance of SD3.5-Medium in every benchmark tested.

TL;DR

We propose DiffusionNFT, a new online diffusion RL paradigm that optimizes directly on the forward diffusion process.

- DiffusionNFT is up to 25× more efficient than FlowGRPO, reaching a GenEval score of 0.98 in about 1k steps without applying CFG.

- By leveraging multiple reward models, DiffusionNFT significantly boosts the performance of SD3.5-Medium in every benchmark tested, while being fully CFG-free.

- DiffusionNFT enables training with arbitrary black-box solvers, rather than relying on first-order SDE solvers.

- DiffusionNFT requires only clean images and their rewards for policy optimization. This fully decouples the sampling and training strategies and removes the need to store the entire sampling trajectory.

DiffusionNFT introduces the Negative-aware Fine-Tuning (NFT) paradigm into the diffusion domain, providing a general, highly unified, and natively off-policy RL recipe across various modalities.

Performance of DiffusionNFT. DiffusionNFT does not require CFG at any point during training.

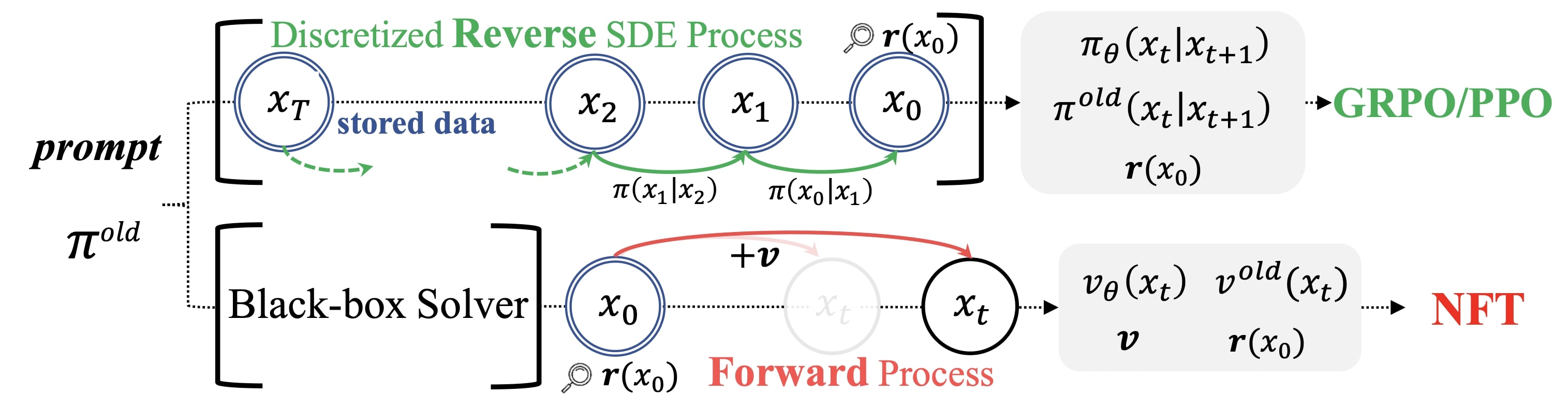

Comparison between Forward-Process RL (NFT) and Reverse-Process RL (GRPO). NFT works with any solver and does not require storing the whole sampling trajectory for optimization.

Method

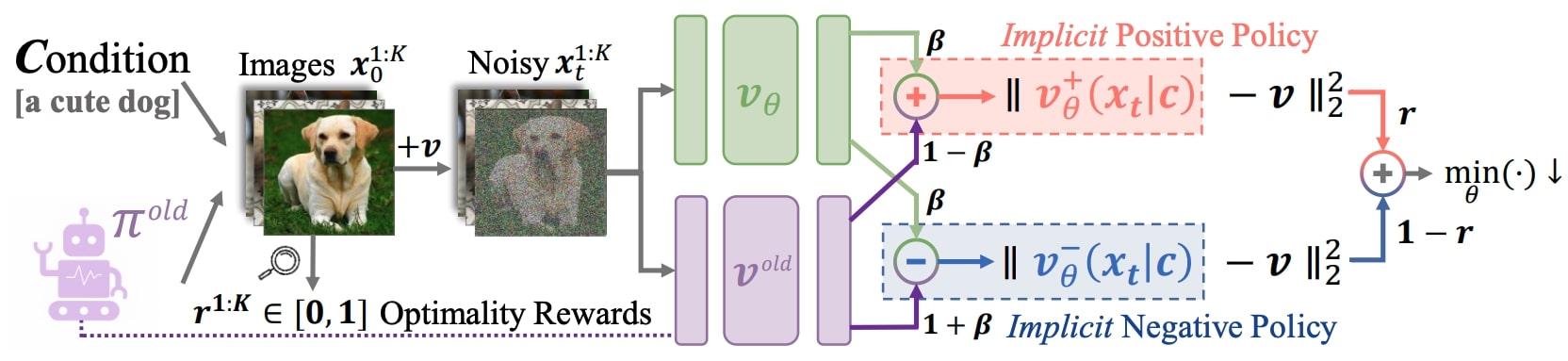

DiffusionNFT workflow.

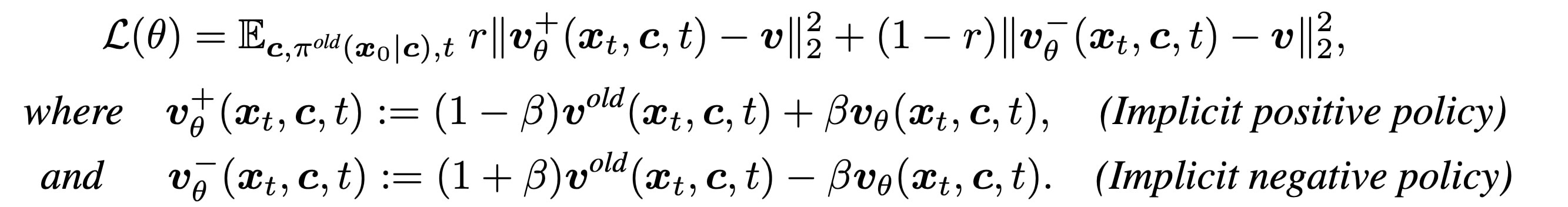

Training objective.

How It Works

Instead of applying policy gradients to the reverse sampling process, DiffusionNFT optimizes on the forward process: it trains on positive and negative online data simultaneously via standard flow matching. Intuitively, DiffusionNFT jointly optimizes two dual diffusion objectives, one on the positive branch (r=1) and one on the negative branch (r=0). Rather than training two separate models, it uses an implicit parameterization technique that directly optimizes a single target policy.
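To make the two-branch idea concrete, here is a minimal per-sample sketch in pure Python. The page gives the exact objective only as a figure, so the linear mixing of the trainable prediction with a frozen reference prediction, and the coefficient `beta`, are assumptions made for illustration. The names `v_theta`, `v_old`, `v_target`, and `nft_loss` are hypothetical, and a real implementation would use a tensor library rather than lists.

```python
def implicit_velocities(v_theta, v_old, beta):
    """Build positive/negative branch velocities from ONE trainable prediction.

    Assumed form (illustrative): both branches are linear combinations of the
    trainable prediction v_theta and a frozen reference prediction v_old.
    """
    v_pos = [(1 - beta) * o + beta * n for n, o in zip(v_theta, v_old)]
    v_neg = [(1 + beta) * o - beta * n for n, o in zip(v_theta, v_old)]
    return v_pos, v_neg


def mse(a, b):
    """Mean squared error between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


def nft_loss(v_theta, v_old, v_target, r, beta=1.0):
    """Flow-matching loss weighted between the two branches.

    r is the reward label in [0, 1]: r=1 trains the positive branch,
    r=0 trains the negative branch, and values in between mix the two.
    """
    v_pos, v_neg = implicit_velocities(v_theta, v_old, beta)
    return r * mse(v_pos, v_target) + (1 - r) * mse(v_neg, v_target)


# A positive sample pulls v_theta toward the target velocity...
print(nft_loss([1.0, 1.0], [0.0, 0.0], [1.0, 1.0], r=1.0))
# ...while a negative sample penalizes the same prediction.
print(nft_loss([1.0, 1.0], [0.0, 0.0], [1.0, 1.0], r=0.0))
```

Under this assumed form, both branches collapse to the reference prediction when `v_theta` equals `v_old`, so only one network carries gradients and no separate negative model is needed.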

Why It Works

- Forward Consistency: Unlike reverse-process RL methods, DiffusionNFT preserves adherence to the forward diffusion process, preventing model degradation into cascaded Gaussians.

- Solver Flexibility: Training and sampling are fully decoupled, so any black-box solver can be used for data collection, not just first-order SDE samplers.

- Likelihood-Free: No costly likelihood approximation is needed, which avoids its systematic estimation bias entirely.

- Memory Efficient: Training requires only clean images and their rewards, so entire sampling trajectories need not be stored.
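The forward-consistency and memory points above can be illustrated with a small sketch of how training inputs might be built from stored samples. It assumes a rectified-flow interpolation, `x_t = (1 - t) * x0 + t * eps` with velocity target `eps - x0`; that convention, the function names, and the buffer layout are illustrative assumptions, and prompt conditioning is omitted for brevity.

```python
import random


def forward_noise(x0, t, rng):
    """Noise a clean sample with the forward process (assumed rectified flow)."""
    eps = [rng.gauss(0.0, 1.0) for _ in x0]
    x_t = [(1 - t) * x + t * e for x, e in zip(x0, eps)]
    v_target = [e - x for x, e in zip(x0, eps)]  # flow-matching velocity target
    return x_t, v_target


def make_training_batch(buffer, rng):
    """Turn stored (clean sample, reward) pairs into flow-matching inputs.

    The buffer holds only final samples and rewards; no intermediate solver
    states are kept, so any sampler could have produced the samples.
    """
    batch = []
    for x0, reward in buffer:
        t = rng.random()  # fresh timestep drawn at training time
        x_t, v_target = forward_noise(x0, t, rng)
        batch.append({"x_t": x_t, "t": t, "v_target": v_target, "r": reward})
    return batch


rng = random.Random(0)
buffer = [([1.0, 2.0], 1.0), ([0.0, -1.0], 0.0)]  # toy 2-D "images"
batch = make_training_batch(buffer, rng)
print(len(batch), sorted(batch[0].keys()))
```

Because the noisy input is produced by the forward process itself, each training pair satisfies the forward relation by construction: `x_t - t * v_target` recovers the stored clean sample.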

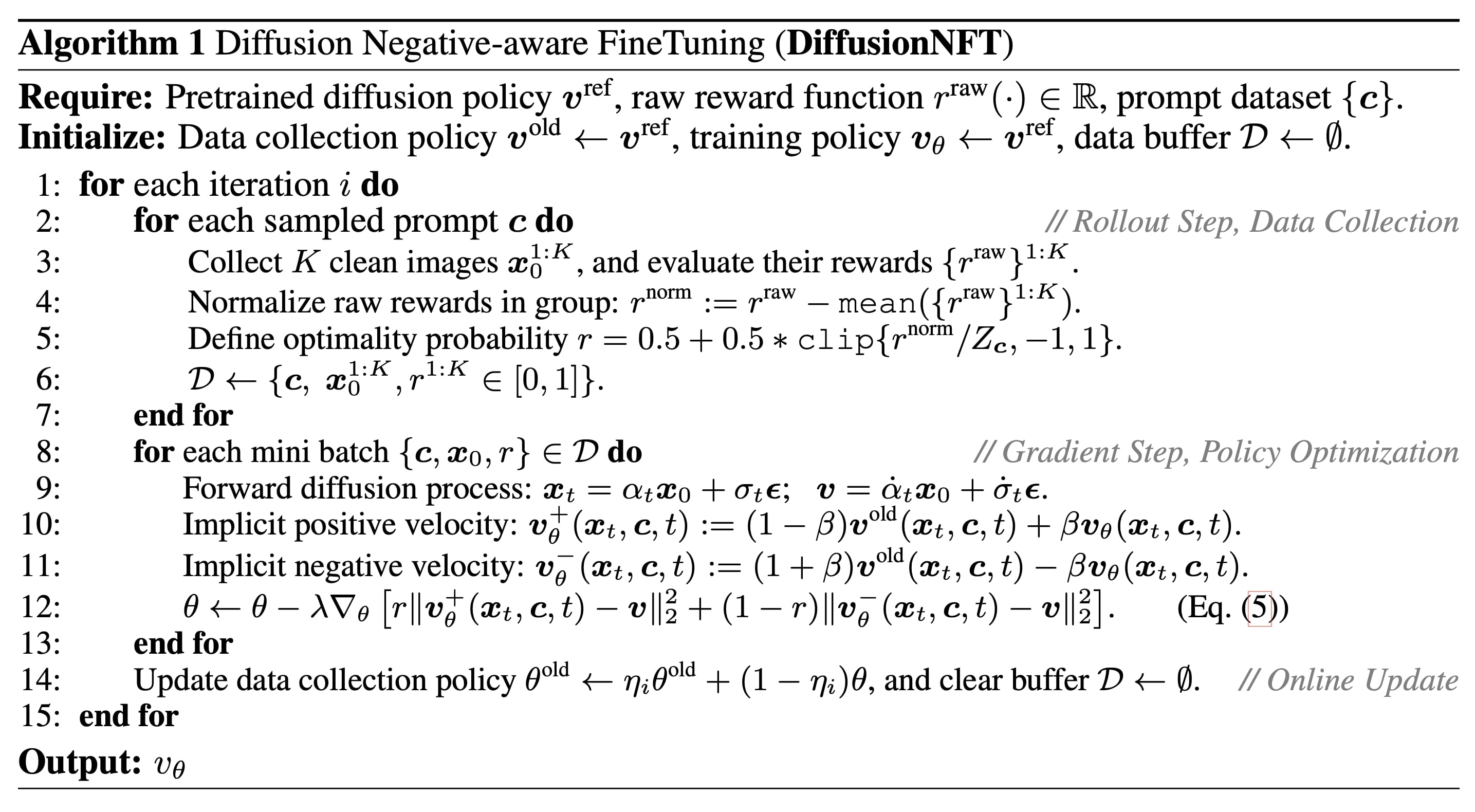

Pseudocode

Results

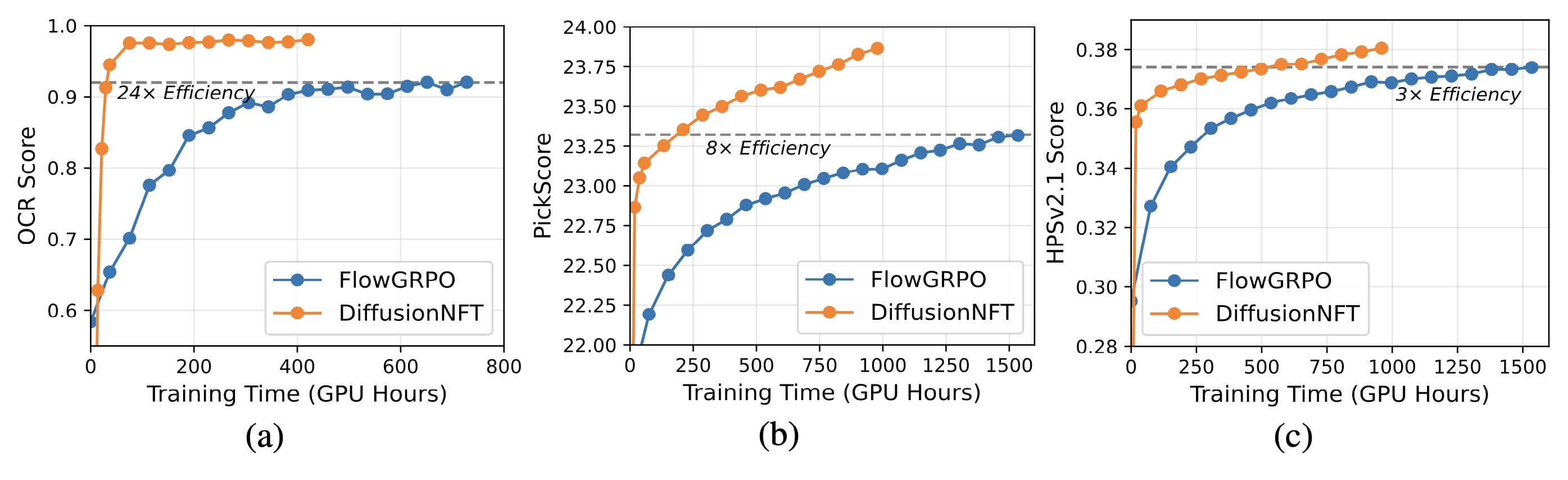

Head-to-head comparison with FlowGRPO on single rewards. DiffusionNFT is 3× to 25× more efficient, reaches higher final scores, and is CFG-free.

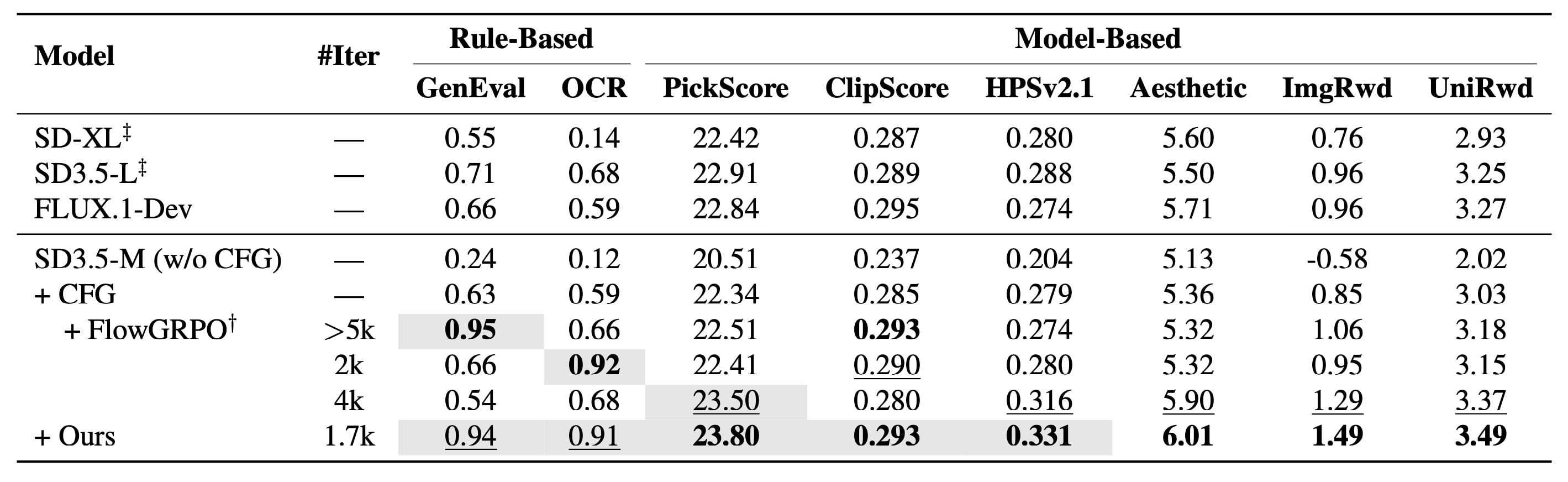

Multi-reward joint training results. Our CFG-free model surpasses CFG-based larger models and matches FlowGRPO across both in-domain and out-of-domain metrics.

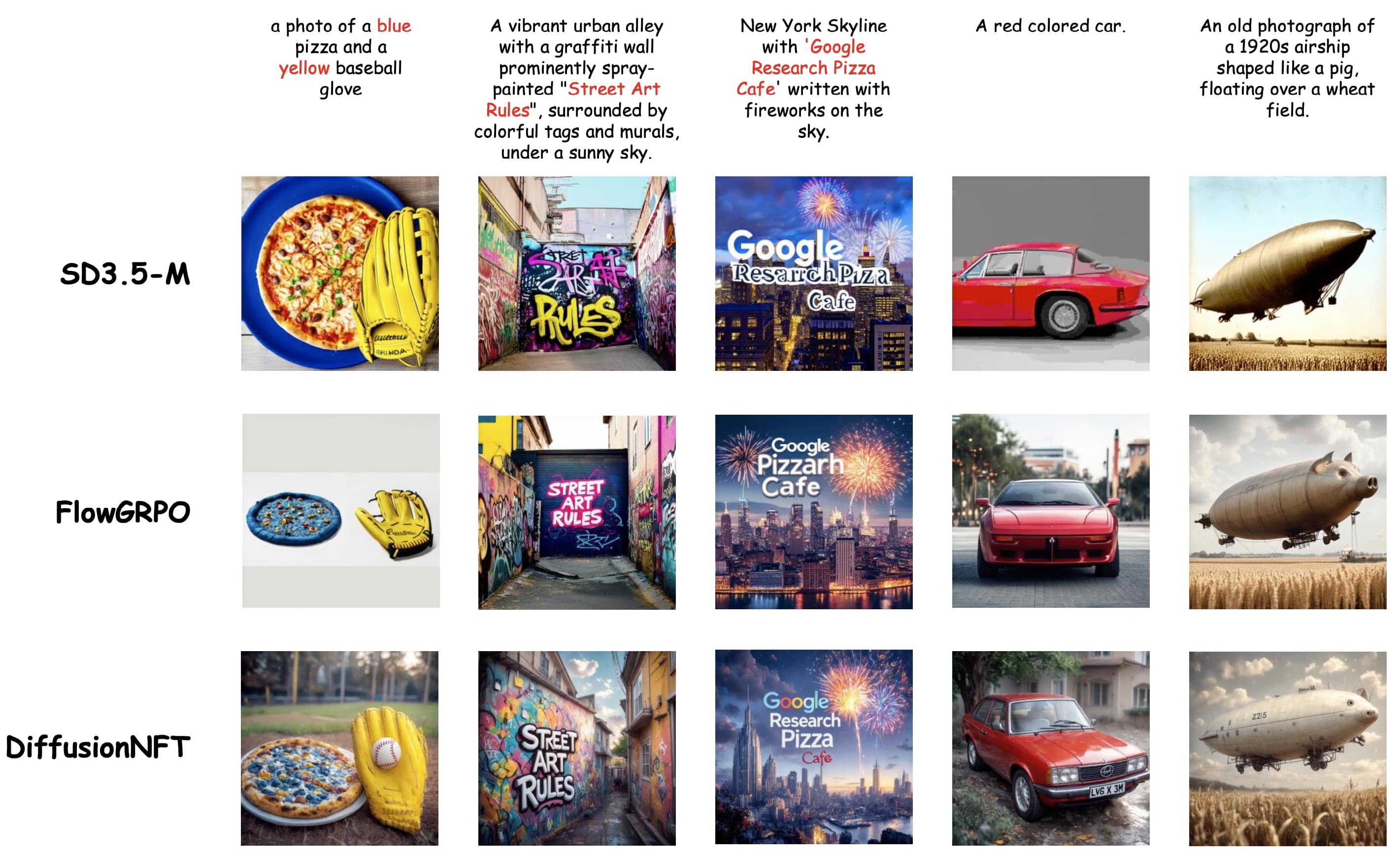

Qualitative comparison between DiffusionNFT and FlowGRPO.

Citation

@inproceedings{zheng2025diffusionnft,
  title={DiffusionNFT: Online Diffusion Reinforcement with Forward Process},
  author={Zheng, Kaiwen and Chen, Huayu and Ye, Haotian and Wang, Haoxiang and Zhang, Qinsheng and Jiang, Kai and Su, Hang and Ermon, Stefano and Zhu, Jun and Liu, Ming-Yu},
  booktitle={International Conference on Learning Representations (ICLR)},
  year={2026}
}