Overview

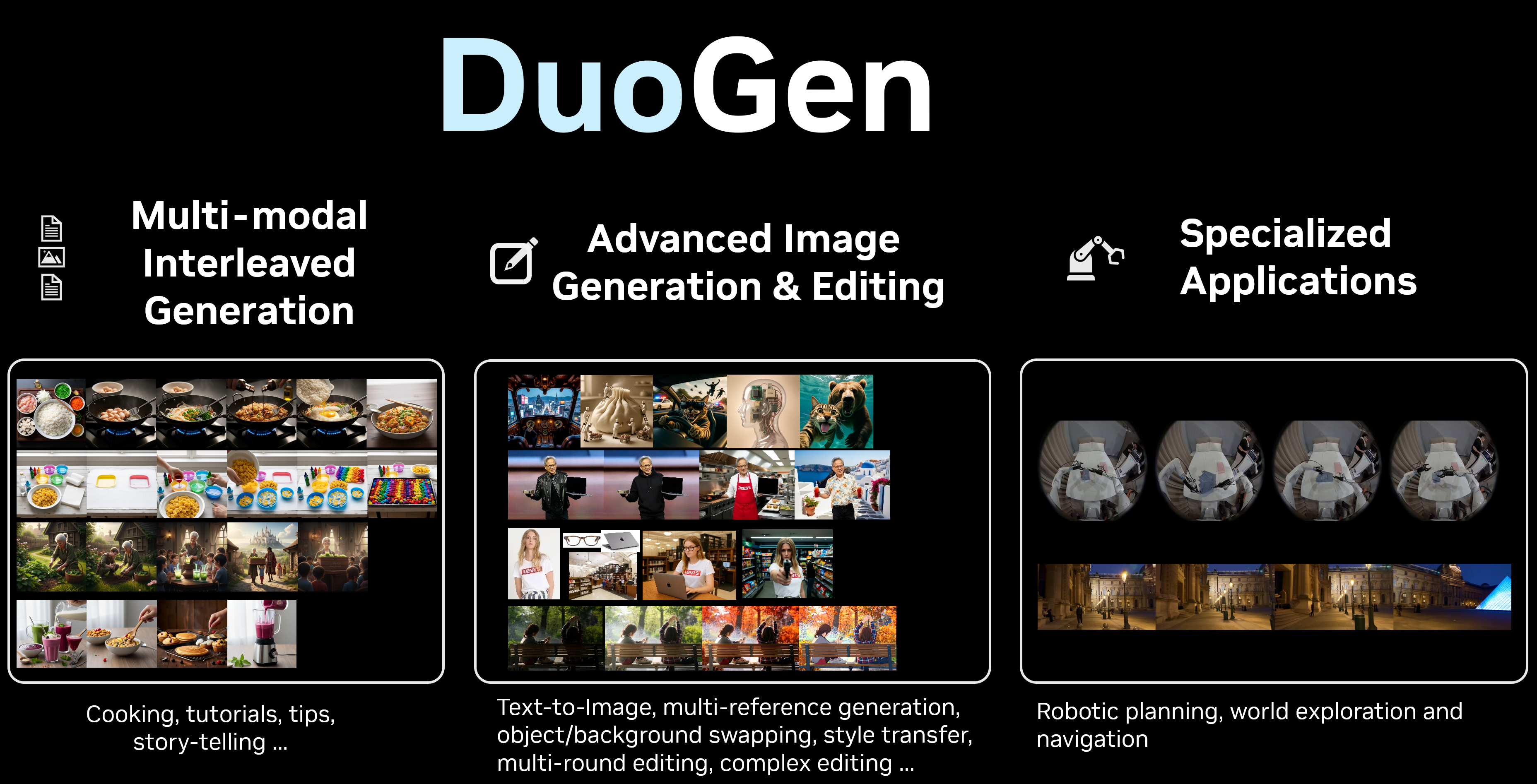

We present DuoGen, a multimodal model designed to automatically switch between modalities to generate coherent, interleaved image-text sequences. DuoGen enables complex tasks that require synchronized text and visuals. These applications range from creating illustrated tutorials and narratives to robotic manipulation and autonomous navigation.

Key Features

- Novel Architecture: DuoGen combines the visual understanding of a pretrained Multimodal LLM (MLLM) with the generative power of a Diffusion Transformer (DiT). This avoids costly unimodal pretraining and allows for flexible base model selection.

- High-Quality Data: We support the model with a large-scale instruction-tuning dataset, curated by combining rewritten multimodal web content with diverse, synthetic examples of everyday scenarios.

- Decoupled Training: Our two-stage strategy first instruction-tunes the MLLM with high-quality interleaved conversation data, then aligns the DiT with the MLLM using large-scale interleaved context from different sources.

Model Capabilities

Citation

@inproceedings{duogen2026duogen,

title={DuoGen: Towards General Purpose Interleaved Multimodal Generation},

author={Shi, Min and Zeng, Xiaohui and Huang, Jiannan and Cui, Yin and Ferroni, Francesco and Li, Jialuo and Pachori, Shubham and Li, Zhaoshuo and Balaji, Yogesh and Wang, Haoxiang and Lin, Tsung-Yi and Fu, Xiao and Zhao, Yue and Chen, Chieh-Yun and Liu, Ming-Yu and Shi, Humphrey},

booktitle={IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)},

year={2026}

}