Abstract

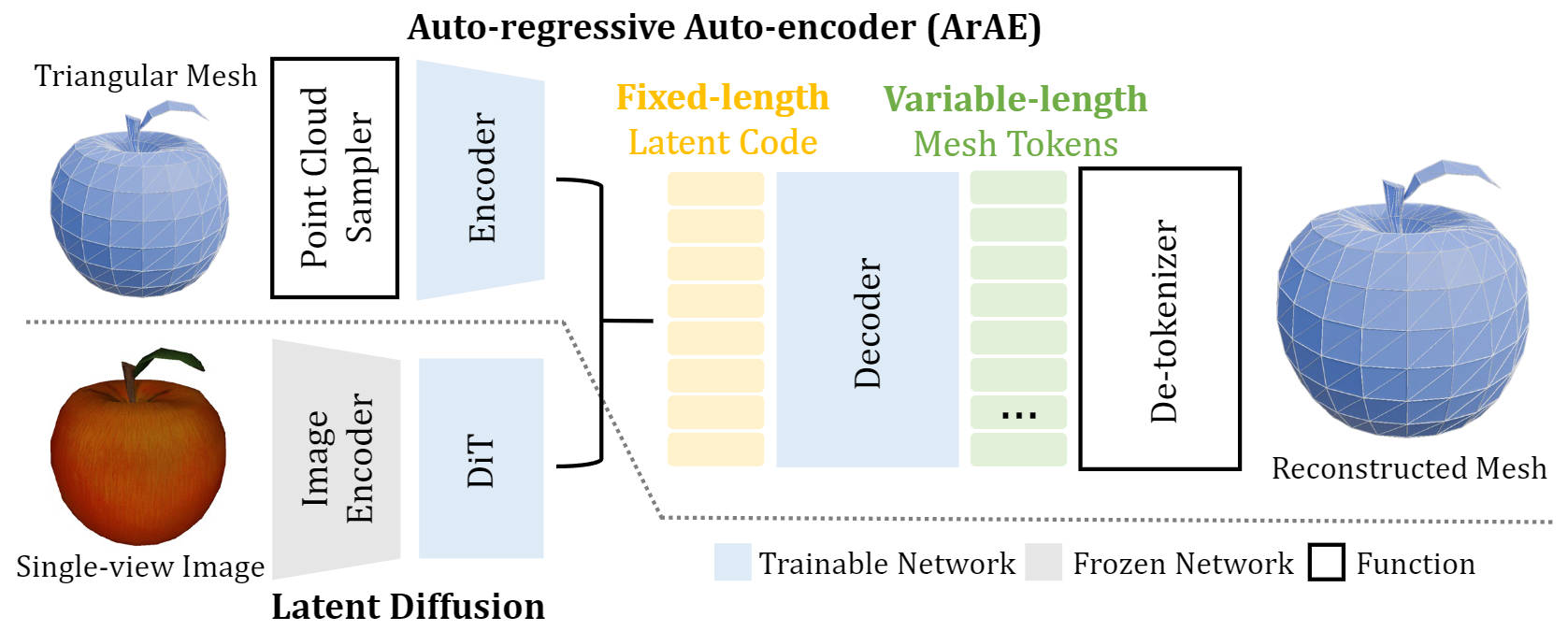

Current auto-regressive mesh generation methods suffer from issues such as incompleteness, insufficient detail, and poor generalization. In this paper, we propose an Auto-regressive Auto-encoder (ArAE) model capable of generating high-quality 3D meshes with up to 4,000 faces at a spatial resolution of 512³. We introduce a novel mesh tokenization algorithm that efficiently compresses triangular meshes into 1D token sequences, significantly enhancing training efficiency. Furthermore, our model compresses variable-length triangular meshes into a fixed-length latent space, which enables training latent diffusion models for better generalization. Extensive experiments demonstrate the superior quality, diversity, and generalization capabilities of our model in both point cloud and image-conditioned mesh generation tasks.
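One plausible reading of the spatial resolution mentioned above is that each vertex coordinate is snapped to one of 512 bins per axis before tokenization. The sketch below illustrates that idea only; the normalization range of [-1, 1] and the function names `quantize` and `dequantize` are assumptions made for illustration, not details taken from the paper.

```python
RESOLUTION = 512  # bins per axis (assumed meaning of the 512-per-axis grid)


def quantize(value, resolution=RESOLUTION):
    """Map a coordinate in [-1, 1] to an integer bin in [0, resolution - 1]."""
    # Assumption: the mesh was normalized to the cube [-1, 1]^3 beforehand.
    scaled = (value + 1.0) / 2.0 * resolution
    # Clamp so that value == 1.0 (or slightly out-of-range input) stays valid.
    return min(resolution - 1, max(0, int(scaled)))


def dequantize(index, resolution=RESOLUTION):
    """Map a bin index back to the center of its bin in [-1, 1]."""
    return (index + 0.5) / resolution * 2.0 - 1.0


def quantize_vertex(vertex):
    """Quantize an (x, y, z) vertex to a triple of integer bin indices."""
    return tuple(quantize(c) for c in vertex)
```

With bin-center reconstruction, the round-trip error per coordinate is at most half a bin width, i.e. 1/512 on the assumed [-1, 1] range.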

Pipeline

Mesh Tokenizer

Tokenization

Our tokenizer uses a modified EdgeBreaker algorithm to traverse a triangular mesh and serialize it into a token sequence. In the figure below, triangle colors denote the different face tokens: L (visit left), R (visit right), E (end of sequence).

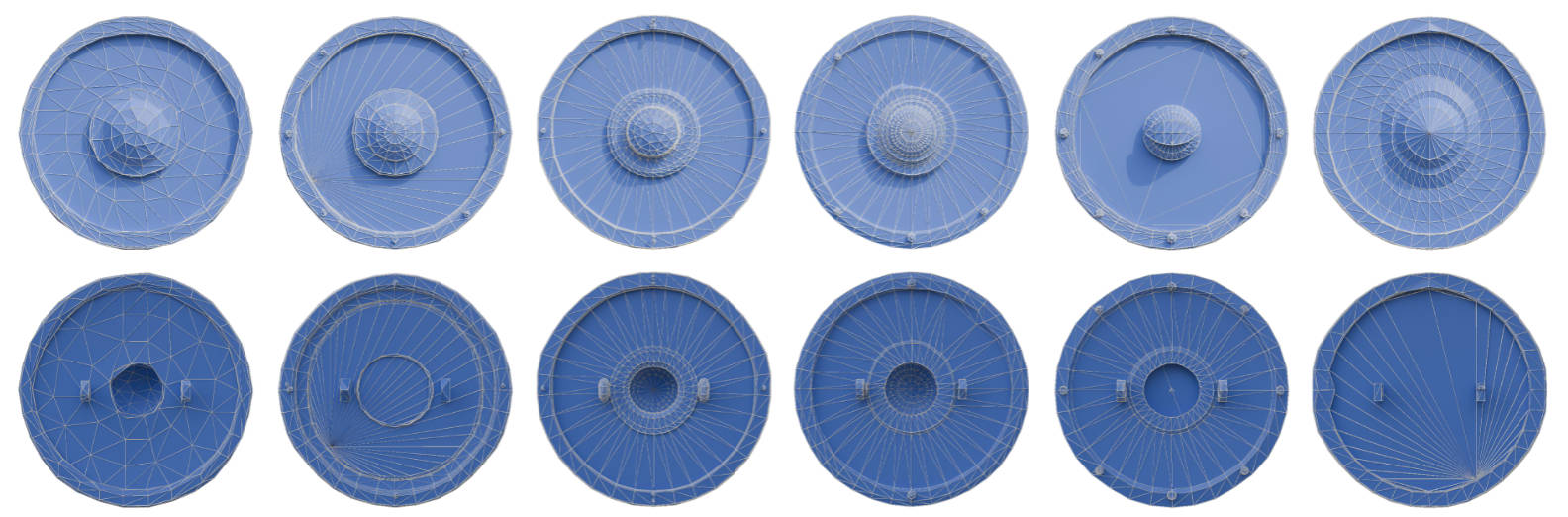
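To make the L/R/E idea concrete, here is a deliberately simplified sketch. It is not the modified EdgeBreaker algorithm used by the tokenizer: it is a greedy walk without backtracking, and it assumes a manifold mesh whose faces are consistently oriented. The function name `tokenize` and the flat output format (three vertex indices for the first face of each sub-sequence, then label and new-vertex pairs, then `"E"`) are hypothetical choices for illustration.

```python
def tokenize(faces):
    """Greedy L/R/E walk over a list of (a, b, c) vertex-index triangles."""
    # Map each directed edge to the face that contains it.
    edge_to_face = {}
    for f, (a, b, c) in enumerate(faces):
        for edge in ((a, b), (b, c), (c, a)):
            edge_to_face[edge] = f

    visited = [False] * len(faces)
    tokens = []
    for start in range(len(faces)):
        if visited[start]:
            continue
        # Begin a new sub-sequence with the full first triangle.
        u, v, w = faces[start]
        visited[start] = True
        tokens.extend([u, v, w])
        while True:
            # The current face has directed edges u->v, v->w, w->u.
            # A neighbor across an edge contains that edge reversed.
            left = edge_to_face.get((u, w))
            right = edge_to_face.get((w, v))
            if left is not None and not visited[left]:
                nxt, entry, label = left, (u, w), "L"
            elif right is not None and not visited[right]:
                nxt, entry, label = right, (w, v), "R"
            else:
                tokens.append("E")  # dead end: close this sub-sequence
                break
            visited[nxt] = True
            # The neighbor shares two vertices; only one vertex is new.
            new_vertex = next(x for x in faces[nxt] if x not in entry)
            tokens.extend([label, new_vertex])
            u, v, w = entry[0], entry[1], new_vertex
    return tokens
```

For example, `tokenize([(0, 1, 2), (0, 2, 3)])` walks from the first triangle to its left neighbor and stops. The point of the sketch is the source of the compression: every face after the first in a sub-sequence costs one label and one vertex instead of three vertices, because it shares an edge with the previous face.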

De-tokenization

Generated mesh token sequences can be de-tokenized back into triangular meshes, so the generation progress can be visualized as the sequence grows.
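As an illustration of de-tokenization, the sketch below decodes a simplified, hypothetical token format rather than the model's actual vocabulary. Each sub-sequence is assumed to start with three vertex indices for its first triangle, followed by pairs of a label (`"L"` or `"R"`) and one new vertex index, and to end with `"E"`. The function name `detokenize` and the edge conventions are assumptions.

```python
def detokenize(tokens):
    """Rebuild triangles from a flat list of vertex indices and L/R/E labels."""
    faces = []
    i = 0
    while i < len(tokens):
        # First triangle of a sub-sequence is stored explicitly.
        u, v, w = tokens[i:i + 3]
        i += 3
        faces.append((u, v, w))
        # Assumption: every sub-sequence is terminated by "E".
        while tokens[i] != "E":
            label, x = tokens[i], tokens[i + 1]
            i += 2
            if label == "L":
                # New face shares edge (w, u) and is entered via (u, w).
                u, v, w = u, w, x
            elif label == "R":
                # New face shares edge (v, w) and is entered via (w, v).
                u, v, w = w, v, x
            else:
                raise ValueError(f"unexpected token: {label!r}")
            faces.append((u, v, w))
        i += 1  # skip the "E" token
    return faces
```

Because each label adds exactly one face, decoding a growing prefix of the sequence yields a growing partial mesh, which is one way the generation progress could be rendered step by step.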

Conditioned Mesh Generation

PointCloud-Conditioned


More Results

Image-Conditioned


More Results

Diversity of Generation

Given the same input condition (a point cloud) but different random seeds, our model can generate diverse meshes.


Citation

@inproceedings{tang2025edgerunner,
  title={EdgeRunner: Auto-regressive Auto-encoder for Artistic Mesh Generation},
  author={Tang, Jiaxiang and Li, Zhaoshuo and Hao, Zekun and Liu, Xian and Zeng, Gang and Liu, Ming-Yu and Zhang, Qinsheng},
  booktitle={International Conference on Learning Representations (ICLR)},
  year={2025}
}