Abstract

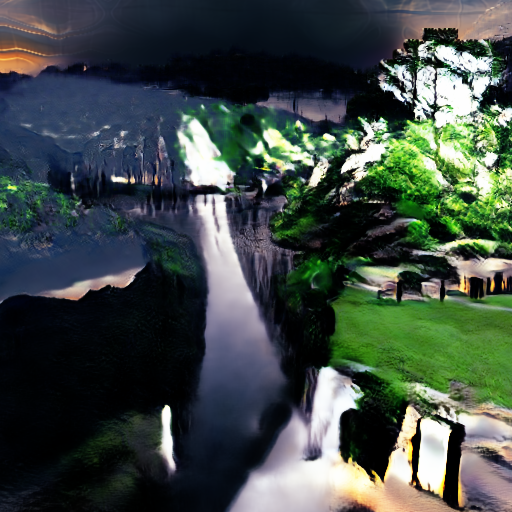

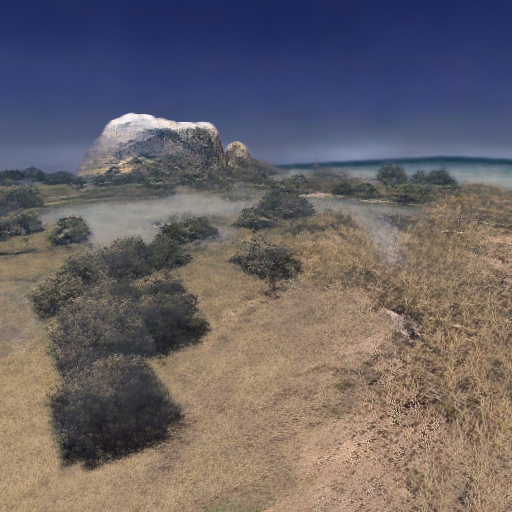

GANcraft aims at solving the world-to-world translation problem. Given a semantically labeled block world such as those from the popular game Minecraft, GANcraft is able to convert it to a new world which shares the same layout but with added photorealism. The new world can then be rendered from arbitrary viewpoints to produce images and videos that are both view-consistent and photorealistic. GANcraft simplifies the process of 3D modeling of complex landscape scenes, which will otherwise require years of expertise. GANcraft essentially turns every Minecraft player into a 3D artist!

Summary Videos

ICCV 2021 Oral Presentation Video

Previous Summary Video

Problem & Approach

The "Why don't you just use im2im translation?" Question

As the ground truth photorealistic renderings for a user-created block world simply doesn't exist, we have to train models with indirect supervision. Some existing approaches are strong candidates. For example, one can use image-to-image translation (im2im) methods such as MUNIT and SPADE, originally trained on 2D data only, to convert per-frame segmentation masks projected from the block world, to realistic looking images. One can also use wc-vid2vid, a 3D-aware method, to generate view-consistent images through 2D inpainting and 3D warping while using the voxel surfaces as the 3D geometry. These models have to be trained on translating real segmentation maps to real images due to paired training data requirements, and then used on Minecraft to real translation. As yet another alternative, one can train a NeRF-W, which learns a 3D radiance field from a non-photometric consistent, but posed and 3D consistent image collection.

Comparing the results from different methods, we can immediately notice a few issues:

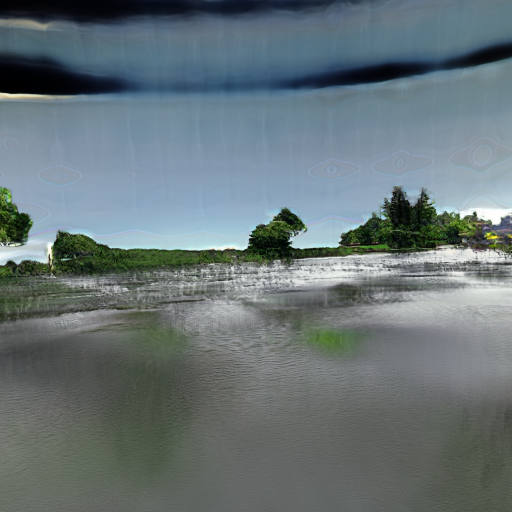

- im2im methods such as MUNIT and SPADE do not preserve viewpoint consistency, as these methods have no knowledge of the 3D geometry, and each frame is generated independently.

- wc-vid2vid produces view-consistent video, but the image quality deterorates quickly with time due to error accumulation from blocky geometry and the train-test domain gap.

- NSVF-W (our implementation of NeRF-W with added NSVF-style voxel conditioning) produces view-consistent output as well, but the result looks dull and lacks fine detail.

In the last column, we present results from GANcraft, which are both view-consistent and high-quality.

Technical Innovations

Distribution Mismatch and Pseudo-Ground Truth

Assume that we have a suitable voxel-conditional neural rendering model which is capable of representing the photorealistic world. We still need a way to train it without any ground truth posed images. Adversarial training has achieved some success in small scale, unconditional neural rendering tasks when the posed images are not available. However, for GANcraft the problem is even more challenging.

No pseudo-ground truth

W/ pseudo-ground truth

As shown in the first row, adversarial training using internet photos leads to unrealistic results, due to the complexity of the task. Producing and using pseudo-ground truths for training is one of the main contributions of our work, and significantly improves the result (second row).

Generating pseudo-ground truths

Hybrid Voxel-Conditional Neural Rendering

In GANcraft, we represent the photorealistic scene with a combination of 3D volumetric renderer and 2D image space renderer. We define a voxel-bounded neural radiance field: given a block world, we assign a learnable feature vector to every corner of the blocks, and use trilinear interpolation to define the location code at arbitrary locations within a voxel.

The complete GANcraft architecture

Capabilities

The generation process of GANcraft is conditional on a style image. During training, we use the pseudo-ground truth as the style image. During evaluation, we can control the output style by providing GANcraft with different style images. In the example below, we linearly interpolate the style code across 6 different style images.

Interpolation between multiple styles

Results

Summary

- GANcraft is a powerful tool for converting semantic block worlds to photorealistic worlds without the need for ground truth data.

- Existing methods perform poorly on the task due to the lack of viewpoint consistency and photorealism.

- GANcraft performs well in this challenging world-to-world setting where the ground truth is unavailable and the distribution mismatch between a Minecraft world and internet photos is significant.

- We introduce a new training scheme which uses pseudo-ground truth. This improves the quality of the results significantly.

- We introduce a hybrid neural rendering pipeline which is able to represent large and complex scenes efficiently.

- We are able to control the appearance of the GANcraft results by using style-conditioning images.

Citation

@inproceedings{hao2021GANcraft,

title={GANcraft: Unsupervised 3D Neural Rendering of Minecraft Worlds},

author={Hao, Zekun and Mallya, Arun and Belongie, Serge and Liu, Ming-Yu},

booktitle={IEEE/CVF International Conference on Computer Vision (ICCV)},

year={2021}

}