Abstract

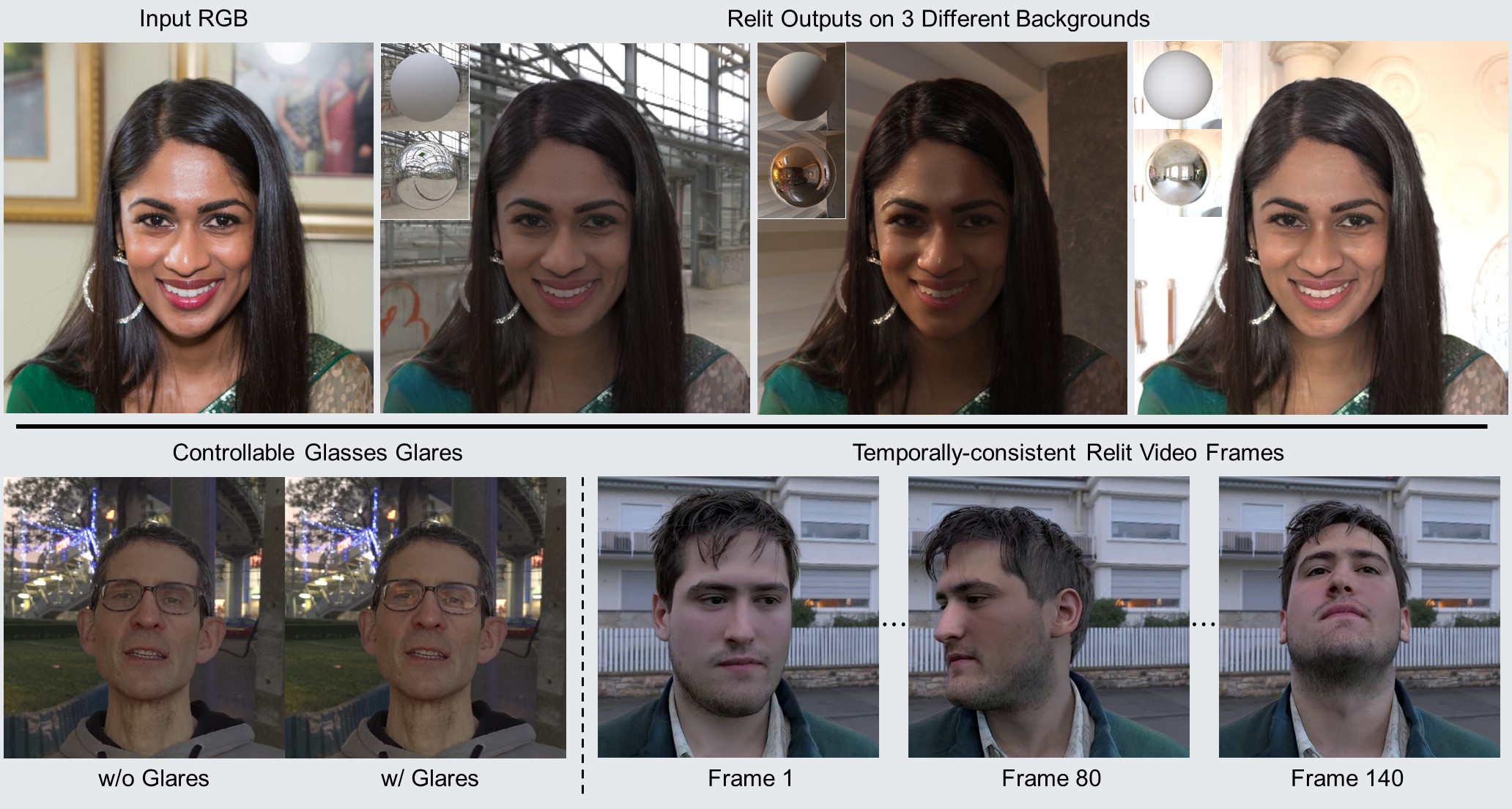

Given a portrait image of a person and an environment map of the target lighting, portrait relighting aims to re-illuminate the person in the image as if the person appeared in an environment with the target lighting. To achieve high-quality results, recent methods rely on deep learning. An effective approach is to supervise the training of deep neural networks with a high-fidelity dataset of desired input-output pairs, captured with a light stage. However, acquiring such data requires an expensive, specialized capture rig and time-consuming effort, which limits access to a few well-resourced laboratories. To address this limitation, we propose a new approach that performs on par with state-of-the-art (SOTA) relighting methods without requiring a light stage. In addition to achieving SOTA results, our approach offers several advantages over prior methods, including controllable glare on eyeglasses and more temporally consistent results when relighting videos.

Video

Dataset

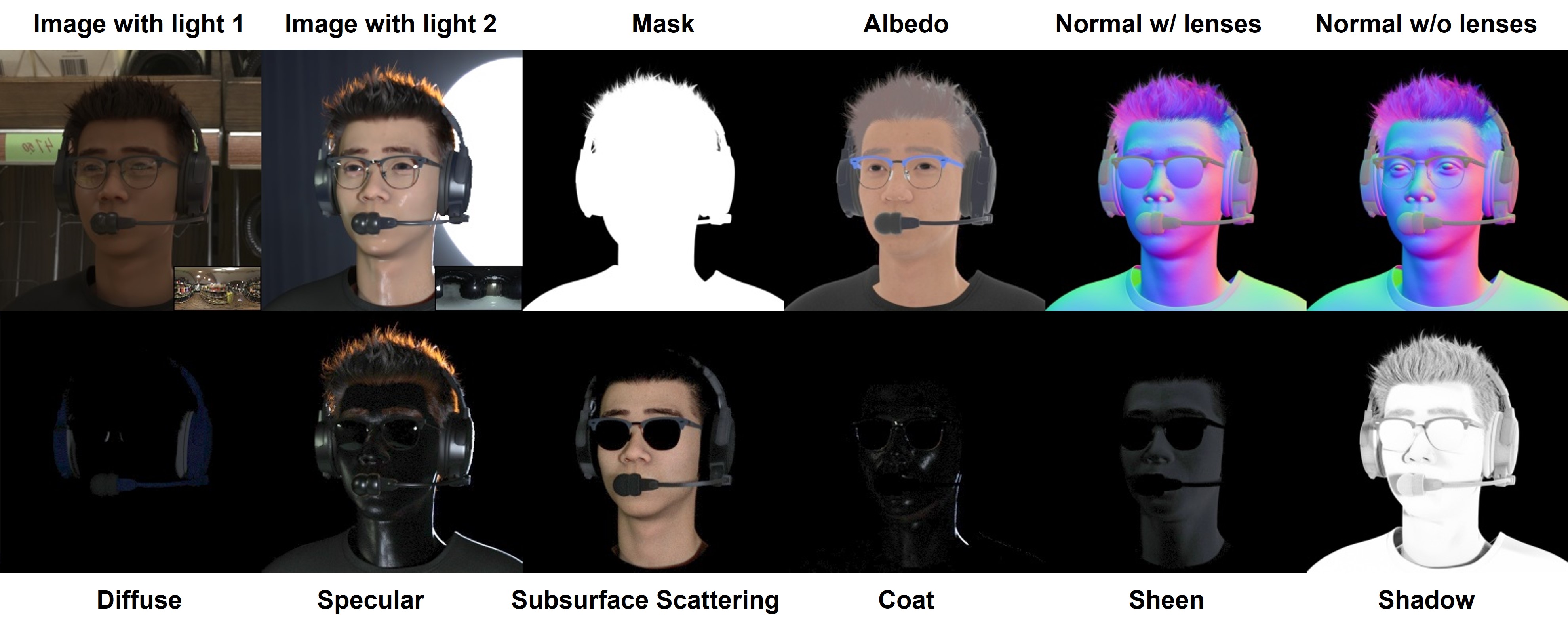

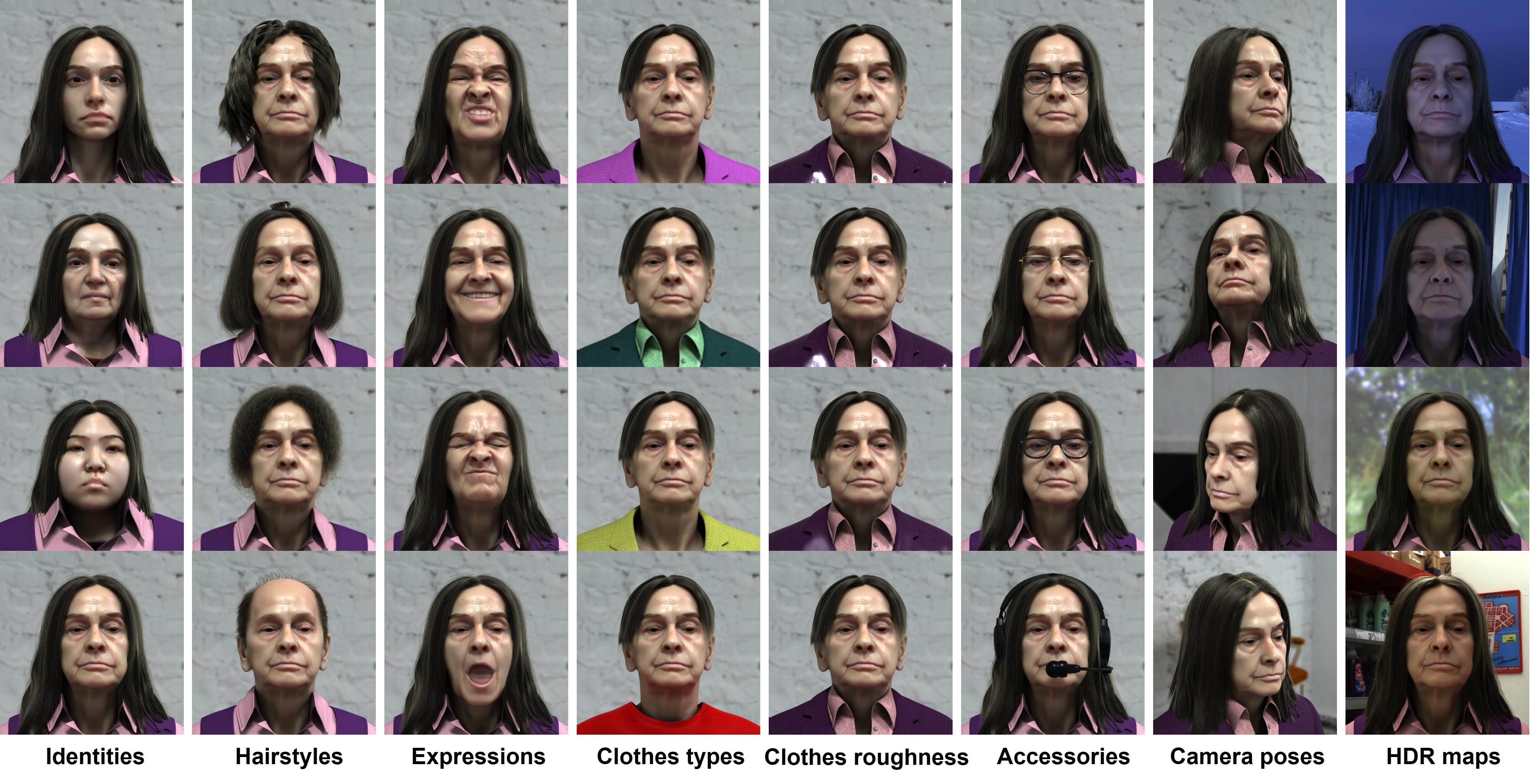

We present a physically-rendered synthetic dataset tailored for portrait relighting. The dataset consists of 300k samples, where each sample is rendered under two different HDR illuminations. Besides the RGB images, we also render a suite of other attributes, such as albedo and normal maps.

An example of the rendered images and AOVs (arbitrary output variables, such as albedo and normal maps) in our dataset.

The dataset covers a wide range of identities, and each rendering is paired with a randomly chosen hairstyle, clothing, and camera pose.

Variations of our dataset.
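To make the dataset description concrete, here is a minimal Python sketch of how one sample (two renders under different HDR illuminations, plus albedo and normal maps) might be represented, and how the albedo and normal attributes could be combined in a simple shading model. The class name, field names, and the single-directional-light Lambertian model are all assumptions for illustration; they are not the dataset's actual file layout or the renderer used to produce it.

```python
from dataclasses import dataclass

import numpy as np


@dataclass
class RelightingSample:
    """Hypothetical container for one sample; field names are assumptions."""
    image_a: np.ndarray  # (H, W, 3) RGB render under the first HDR illumination
    image_b: np.ndarray  # (H, W, 3) RGB render under the second HDR illumination
    albedo: np.ndarray   # (H, W, 3) albedo map
    normal: np.ndarray   # (H, W, 3) unit surface normals


def lambertian_shade(albedo, normal, light_dir, light_rgb):
    """Diffuse shading under one directional light: albedo * max(0, n.l) * color.

    This is a textbook simplification, not a physically based renderer.
    """
    l = np.asarray(light_dir, dtype=np.float64)
    l = l / np.linalg.norm(l)                  # normalize the light direction
    n_dot_l = np.clip(normal @ l, 0.0, None)   # (H, W) clamped cosine term
    return albedo * n_dot_l[..., None] * np.asarray(light_rgb, dtype=np.float64)
```

For example, a flat surface facing the camera (normals along +z) with albedo 0.5, lit by a white light from +z, shades to 0.5 everywhere; the same surface lit from behind shades to 0.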

Results

Close-up Face Comparisons

We first compare with state-of-the-art portrait relighting methods on close-up face crops.

Comparisons with state-of-the-art methods on close crops of FFHQ.

High-Resolution Comparisons

Next, we show high-resolution comparisons with Total Relighting (TR) on larger face crops.

More comparisons with Total Relighting on large crops of FFHQ.

Eyeglass Glare Control

Our network can also add glare to eyeglasses. We show side-by-side results relit by our method without and with glare.

Input

Relit w/o glares

Relit w/ glares

Temporal Video Results

Finally, our temporal smoothing mechanism yields video relighting results that are more temporally stable than those of prior methods.
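This page does not describe how the temporal smoothing mechanism works. Purely as a generic illustration of the idea of smoothing per-frame outputs over time, the sketch below applies an exponential moving average (EMA) to a sequence of frames. EMA is a stand-in technique chosen here for simplicity, not the authors' mechanism; the function name and the `alpha` parameter are hypothetical.

```python
import numpy as np


def ema_smooth(frames, alpha=0.8):
    """Smooth a frame sequence with an exponential moving average.

    alpha is the weight on the running average; higher values smooth more.
    This is a generic stand-in, not the method used in the paper.
    """
    smoothed = []
    running = None
    for frame in frames:
        frame = np.asarray(frame, dtype=np.float64)
        if running is None:
            running = frame  # the first frame initializes the average
        else:
            running = alpha * running + (1.0 - alpha) * frame
        smoothed.append(running)
    return smoothed
```

A higher `alpha` suppresses frame-to-frame flicker more strongly but makes the output lag behind genuine lighting or motion changes, which is the usual trade-off with this kind of filter.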

Citation

@inproceedings{yeh2022learning,
  title={Learning to Relight Portrait Images via a Virtual Light Stage and Synthetic-to-Real Adaptation},
  author={Yeh, Yu-Ying and Nagano, Koki and Khamis, Sameh and Kautz, Jan and Liu, Ming-Yu and Wang, Ting-Chun},
  booktitle={ACM SIGGRAPH Asia},
  year={2022}
}