Abstract

Recent progress in 3D object generation has greatly improved both quality and efficiency. However, most existing methods generate a single mesh with all parts fused together, which limits the ability to edit or manipulate individual parts. A key challenge is that different objects may have a varying number of parts. To address this, we propose a new end-to-end framework for part-level 3D object generation. Given a single input image, our method generates high-quality 3D objects with an arbitrary number of complete and semantically meaningful parts. We introduce a dual volume packing strategy that organizes all parts into two complementary volumes, allowing for the creation of complete and interleaved parts that assemble into the final object. Experiments show that our model achieves better quality, diversity, and generalization than previous image-based part-level generation methods.

Method

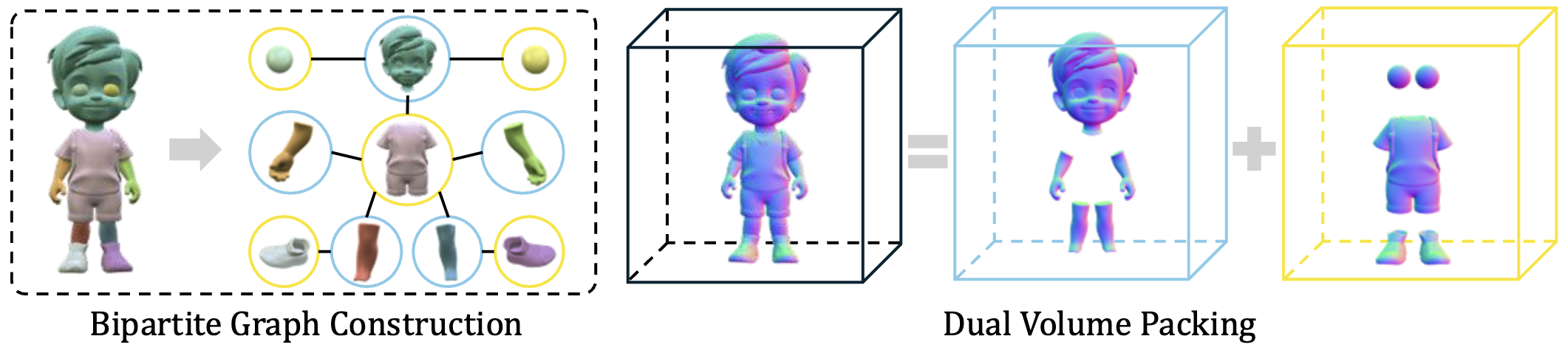

Dual Volume Packing

Instead of generating parts one by one, we propose to pack as many disjoint parts as possible into the same volume to maximize spatial efficiency and minimize computational cost.

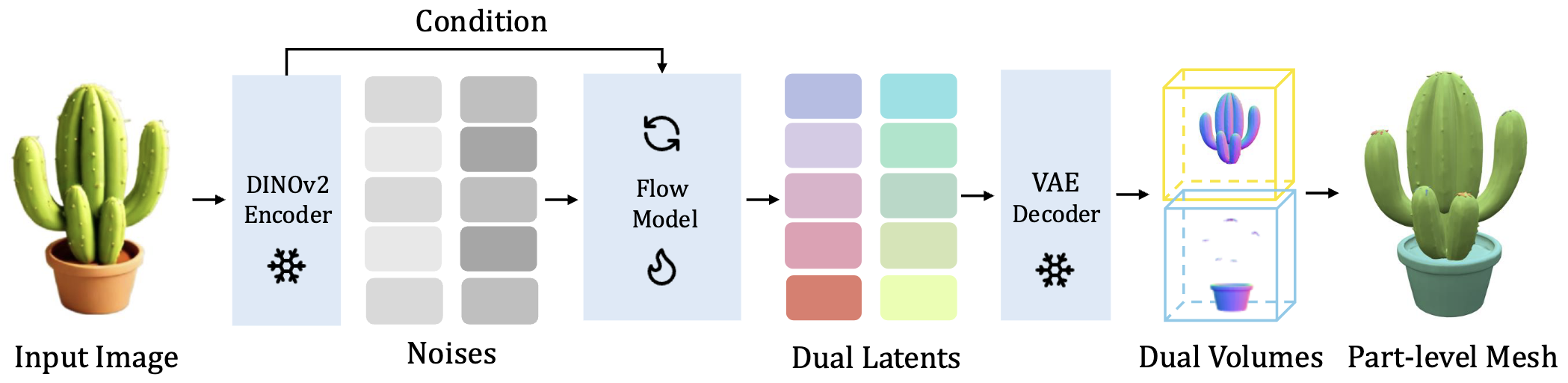
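The packing idea can be illustrated with a small sketch. It assumes a hypothetical list of contact pairs (which parts touch each other) is already available, and it uses plain breadth-first two-coloring to place touching parts in different volumes. This is a generic graph technique chosen for illustration, not necessarily the procedure used in the paper; the function name and inputs are made up for the example.

```python
from collections import deque


def pack_into_two_volumes(num_parts, contacts):
    """Assign each part to volume 0 or 1, separating touching parts where possible.

    contacts: list of (i, j) index pairs for parts that touch (hypothetical input).
    Returns (assignment, conflicts). assignment[i] is 0 or 1; conflicts lists the
    contact pairs that still share a volume (the contact graph had an odd cycle).
    """
    neighbors = [[] for _ in range(num_parts)]
    for i, j in contacts:
        neighbors[i].append(j)
        neighbors[j].append(i)

    assignment = [None] * num_parts
    for start in range(num_parts):
        if assignment[start] is not None:
            continue  # already reached from an earlier component
        assignment[start] = 0
        queue = deque([start])
        while queue:
            part = queue.popleft()
            for other in neighbors[part]:
                if assignment[other] is None:
                    # Put a touching part in the opposite volume.
                    assignment[other] = 1 - assignment[part]
                    queue.append(other)

    conflicts = [(i, j) for i, j in contacts if assignment[i] == assignment[j]]
    return assignment, conflicts


# Toy chair: the seat (0) touches four legs (1-4) and the back (5).
contacts = [(0, 1), (0, 2), (0, 3), (0, 4), (0, 5)]
assignment, conflicts = pack_into_two_volumes(6, contacts)
print(assignment)  # [0, 1, 1, 1, 1, 1]
print(conflicts)   # []
```

In this toy case the legs and back do not touch one another, so they can all share one volume while the seat sits alone in the other. When three parts mutually touch, two volumes cannot separate them all, and the sketch reports the leftover pair in `conflicts` rather than resolving it.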

Network Architecture

Our network consists of a VAE and a rectified flow model that denoises the latent code conditioned on the input image. Unlike a single-latent setup, we generate two latent codes simultaneously rather than one.
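To make the sampling step concrete, here is a minimal sketch of rectified flow sampling over a pair of latent codes, using Euler integration from noise (t = 0) to data (t = 1). The time convention, the tensor shapes, the step count, and the toy velocity function are all assumptions for illustration; in the real model the velocity would come from the trained network conditioned on the input image, which is omitted here.

```python
import numpy as np


def sample_dual_latents(velocity_fn, shape, num_steps=50, seed=0):
    """Euler-integrate dx/dt = v(x, t) from t=0 (noise) to t=1 (data).

    The leading axis of size 2 holds the two latent codes, which are
    denoised jointly in a single pass rather than one after the other.
    """
    rng = np.random.default_rng(seed)
    x = rng.standard_normal((2, *shape))  # start both latents from Gaussian noise
    dt = 1.0 / num_steps
    for k in range(num_steps):
        t = k * dt  # t stays strictly below 1
        x = x + dt * velocity_fn(x, t)
    return x


# Toy stand-in for the learned velocity network (hypothetical): it points
# straight at a fixed target, which is the ideal straight-line velocity
# when the clean latents are known.
target = np.stack([np.full((4, 8), 1.0), np.full((4, 8), -1.0)])


def toy_velocity(x, t):
    return (target - x) / (1.0 - t)


latents = sample_dual_latents(toy_velocity, shape=(4, 8))
print(latents.shape)  # (2, 4, 8)
```

With this idealized velocity the Euler steps land on the target up to floating-point error, which makes the sketch easy to check. A learned velocity field is only approximately straight, so the number of steps becomes a quality versus speed trade-off.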

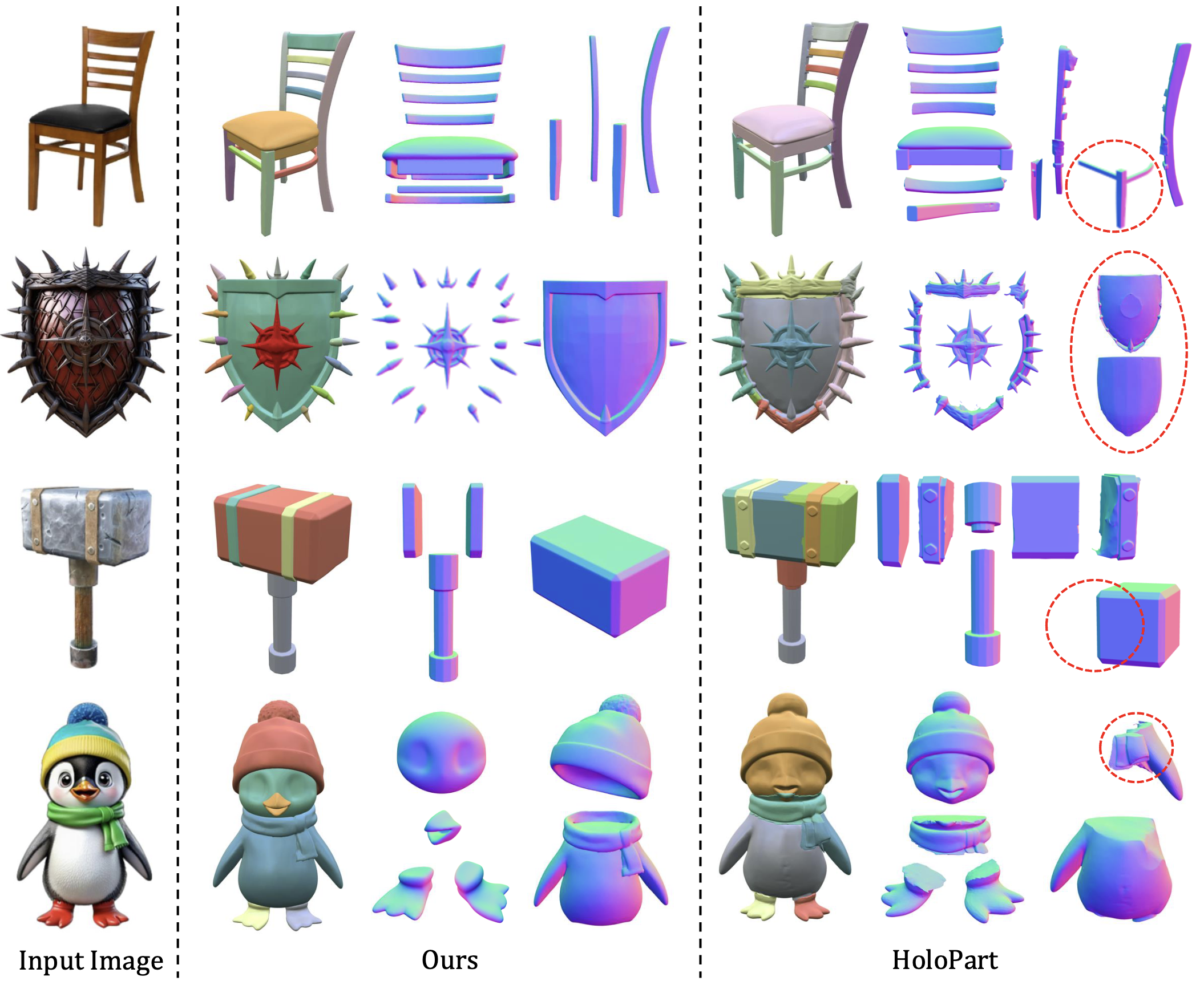

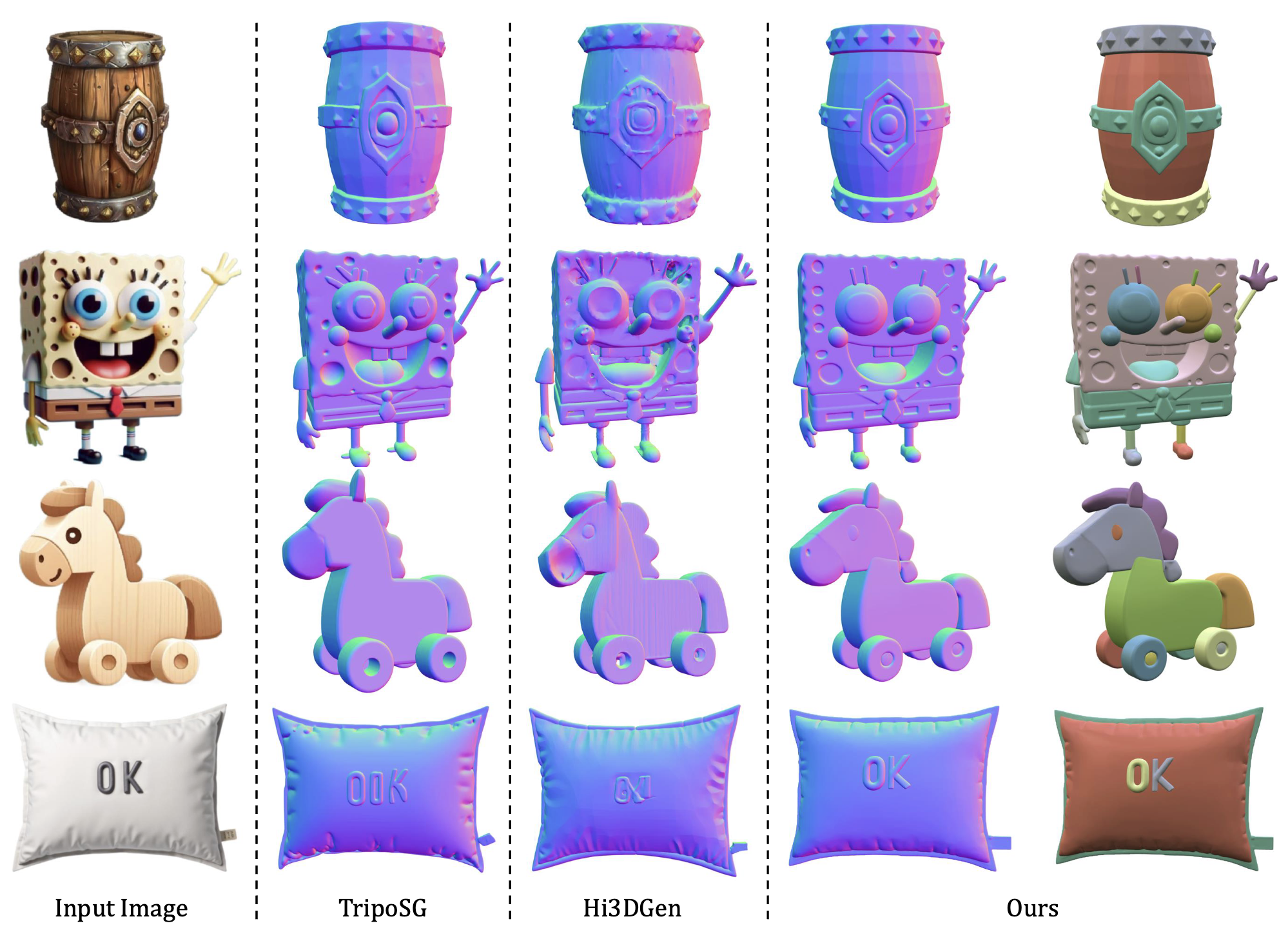

Comparisons

Compared with previous image-based part-level generation methods, our method generates more robust and semantically meaningful parts, and does so faster.

Citation

@inproceedings{tang2025partpacker,
  title={Efficient Part-level 3D Object Generation via Dual Volume Packing},
  author={Tang, Jiaxiang and Lu, Ruijie and Li, Zhaoshuo and Hao, Zekun and Li, Xuan and Wei, Fangyin and Song, Shuran and Zeng, Gang and Liu, Ming-Yu and Lin, Tsung-Yi},
  booktitle={Advances in Neural Information Processing Systems (NeurIPS)},
  year={2025}
}