Diffusion models (DMs) have established themselves as the state-of-the-art generative modeling approach in the visual domain and beyond. A crucial drawback of DMs is their slow sampling speed, relying on many sequential function evaluations through large neural networks. Sampling from DMs can be seen as solving a differential equation through a discretized set of noise levels known as the sampling schedule. While past works primarily focused on deriving efficient solvers, little attention has been given to finding optimal sampling schedules, and the entire literature relies on hand-crafted heuristics. In this work, for the first time, we propose Align Your Steps, a general and principled approach to optimizing the sampling schedules of DMs for high-quality outputs. We leverage methods from stochastic calculus and find optimal schedules specific to different solvers, trained DMs and datasets. We evaluate our novel approach on several image, video as well as 2D toy data synthesis benchmarks, using a variety of different solvers, and observe that our optimized schedules outperform previous handcrafted schedules in almost all experiments. Our method demonstrates the untapped potential of sampling schedule optimization, especially in the few-step synthesis regime.

Our optimized schedules can be used at inference time in a plug-and-play fashion. Please see our quickstart guide to get started with using our schedules in diffusers and the colab notebook for example code with Stable Diffusion 1.5 and SDXL.

Diffusion models (DMs) have proven themselves to be extremely reliable probabilistic generative models that can produce high-quality data. They have been successfully applied to tasks such as image synthesis, image super-resolution, image-to-image translation, image editing, inpainting, video synthesis, text-to-3D generation, and even planning. However, sampling from DMs corresponds to solving a generative Stochastic or Ordinary Differential Equation (SDE/ODE) in reverse time and requires multiple sequential forward passes through a large neural network, limiting their real-time applicability.
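To make the cost of sequential sampling concrete, here is a minimal sketch rather than the implementation used in the paper. It assumes the EDM-style parameterization in which the noise level equals the time \(t\), so the probability-flow ODE reads \(dx/dt = (x - D(x, t))/t\), and it replaces the neural network with the closed-form ideal denoiser of a hypothetical 1D Gaussian toy dataset. The names `DATA_STD`, `toy_denoiser` and `euler_sample` are illustrative only.

```python
import numpy as np

DATA_STD = 0.5  # assumption: toy data distribution x0 ~ N(0, DATA_STD^2)


def toy_denoiser(x, t):
    """Ideal denoiser E[x0 | x_t] for Gaussian data when x_t = x0 + t * noise."""
    return x * DATA_STD**2 / (DATA_STD**2 + t**2)


def euler_sample(schedule, num_samples=10_000, seed=0):
    """Integrate dx/dt = (x - D(x, t)) / t from t_max down to t_min with Euler steps.

    `schedule` is increasing (t_0 = t_min, ..., t_n = t_max) and is traversed in reverse.
    Returns the samples and the number of denoiser evaluations (NFEs) used.
    """
    rng = np.random.default_rng(seed)
    ts = np.asarray(schedule, dtype=float)[::-1]  # high noise -> low noise
    x = ts[0] * rng.standard_normal(num_samples)  # start from (almost) pure noise
    nfe = 0
    for t_cur, t_next in zip(ts[:-1], ts[1:]):
        slope = (x - toy_denoiser(x, t_cur)) / t_cur  # one "network" call per step
        nfe += 1
        x = x + (t_next - t_cur) * slope  # the steps cannot run in parallel
    return x, nfe


if __name__ == "__main__":
    for n in (5, 200):
        samples, nfe = euler_sample(np.geomspace(0.002, 80.0, n + 1))
        print(f"steps={n:4d}  NFEs={nfe:4d}  sample std={samples.std():.3f}  target={DATA_STD}")
```

Each step needs the output of the previous one, so the number of denoiser evaluations grows linearly with the number of steps, and with very few steps the sample statistics drift away from the target. That gap is what a better choice of step locations tries to reduce.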

Solving an SDE/ODE over the interval \([t_{min}, t_{max}]\) works by splitting it into \(n\) smaller sub-intervals at the points \(t_{min} = t_0 < t_1 < \dots < t_{n}=t_{max}\), which together form the sampling schedule, and numerically solving the differential equation between consecutive \(t_i\) values. Most prior works adopt one of a handful of heuristic schedules, such as simple polynomials and cosine functions, and little effort has gone into optimizing this schedule. We attempt to fill this gap by introducing a principled approach for optimizing the schedule in a dataset- and model-specific manner, resulting in improved outputs given the same compute budget.
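As an illustration of what such heuristic schedules look like, the sketch below builds two common ones. The polynomial form and its defaults (`t_min=0.002`, `t_max=80`, `rho=7`) follow the EDM paper by Karras et al. (2022) and are not stated on this page. The second schedule is uniform in \(\log t\), which corresponds to uniform log-SNR spacing under the assumption that the noise level equals \(t\). The function names are hypothetical.

```python
import numpy as np


def edm_schedule(n, t_min=0.002, t_max=80.0, rho=7.0):
    """Polynomial (EDM-style) schedule with n sub-intervals, in increasing order."""
    u = np.linspace(0.0, 1.0, n + 1)
    lo, hi = t_min ** (1.0 / rho), t_max ** (1.0 / rho)
    return (lo + u * (hi - lo)) ** rho


def log_uniform_schedule(n, t_min=0.002, t_max=80.0):
    """Schedule uniform in log t (uniform log-SNR when the noise level equals t)."""
    return np.geomspace(t_min, t_max, n + 1)


if __name__ == "__main__":
    np.set_printoptions(precision=3, suppress=True)
    print("EDM        :", edm_schedule(10))
    print("log-uniform:", log_uniform_schedule(10))
```

Both functions return \(n + 1\) points \(t_0 < \dots < t_n\), matching the notation above. A sampler would traverse them from \(t_n\) down to \(t_0\).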

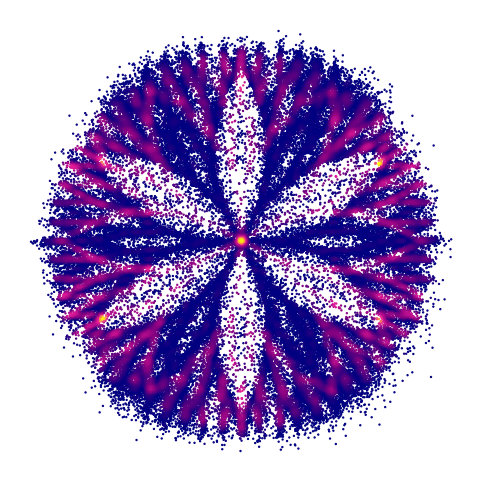

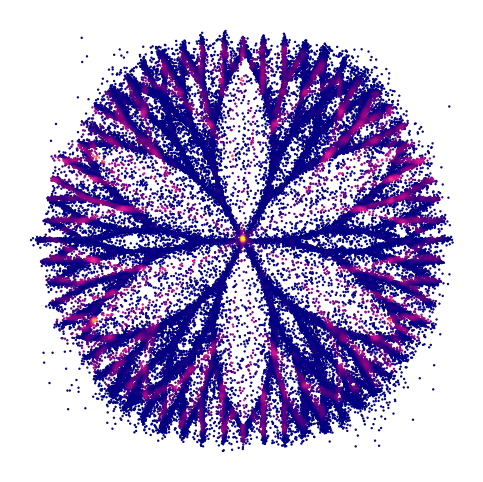

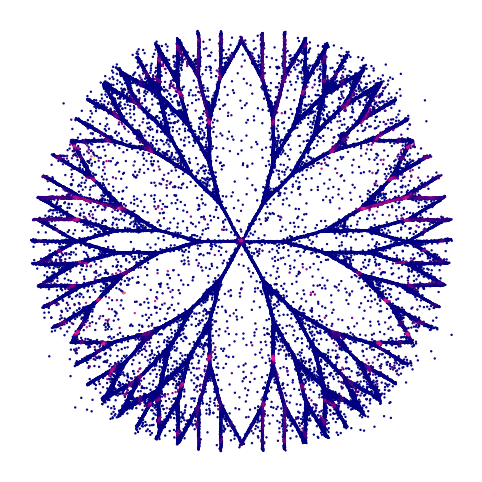

Let \( P_{true} \) denote the distribution obtained by running the reverse-time SDE (defined by the learnt model) exactly, and let \( P_{disc} \) denote the distribution obtained by solving it with Stochastic-DDIM on a given sampling schedule. Using the Girsanov theorem, an upper bound can be derived for the Kullback-Leibler divergence between these two distributions (simplified; see paper for details):

\[ D_{KL}(P_{true} \,\|\, P_{disc}) \leq \underbrace{ \sum_{i=1}^{n} \int_{t_{i-1}}^{t_{i}} \frac{1}{t^3} \, \mathbb{E}_{x_t \sim p_t,\, x_{t_i} \sim p_{t_i | t}} \left\| D_{\theta}(x_t, t) - D_{\theta}(x_{t_i}, t_i) \right\|_2^2 \, dt }_{= \mathrm{KLUB}(t_0, t_1, \dots, t_n)} + \text{constant} \]

Here \( D_{\theta} \) denotes the learnt denoiser. A similar Kullback-Leibler Upper Bound (KLUB) can be found for other stochastic SDE solvers. Given this, we formulate the problem of optimizing the sampling schedule as minimizing the KLUB term with respect to its time discretization, i.e. the sampling schedule. Monte-Carlo integration with importance sampling is used to estimate the expectation values, and the schedule is optimized iteratively. We showcase the benefits of optimizing schedules on a 2D toy distribution (see visualization below).

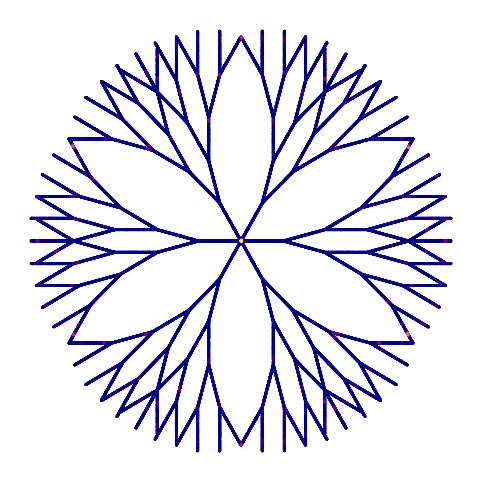

Modeling a 2D toy distribution: Samples in (b), (c), and (d) are generated using 8 steps of SDE-DPM-Solver++(2M) with EDM, LogSNR, and AYS schedules, respectively. Each image consists of 100,000 sampled points.
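The KLUB term can be estimated numerically. The following is a rough sketch under stated assumptions, not the procedure from the paper. It uses a hypothetical 1D Gaussian dataset whose ideal denoiser is known in closed form, assumes the noise level equals \(t\) so that \(x_{t_i}\) given \(x_t\) is \(x_t\) plus Gaussian noise of variance \(t_i^2 - t^2\), and draws \(t\) log-uniformly inside each sub-interval as one simple choice of importance distribution. The names `toy_denoiser` and `estimate_klub` are illustrative.

```python
import numpy as np

DATA_STD = 0.5  # assumption: toy data distribution x0 ~ N(0, DATA_STD^2)


def toy_denoiser(x, t):
    """Ideal denoiser E[x0 | x_t] for Gaussian data when x_t = x0 + t * noise."""
    return x * DATA_STD**2 / (DATA_STD**2 + t**2)


def estimate_klub(schedule, samples_per_interval=20_000, seed=0):
    """Monte-Carlo estimate of KLUB(t_0, ..., t_n) for an increasing schedule."""
    rng = np.random.default_rng(seed)
    ts = np.asarray(schedule, dtype=float)
    m = samples_per_interval
    total = 0.0
    for t_lo, t_hi in zip(ts[:-1], ts[1:]):
        # Proposal: t log-uniform on [t_lo, t_hi], density q(t) = 1 / (t * log_ratio).
        log_ratio = np.log(t_hi / t_lo)
        t = t_lo * np.exp(rng.uniform(0.0, log_ratio, m))
        # x_t ~ p_t, then x_{t_i} ~ p_{t_i | t} by adding the remaining noise.
        x0 = DATA_STD * rng.standard_normal(m)
        x_t = x0 + t * rng.standard_normal(m)
        extra_std = np.sqrt(np.maximum(t_hi**2 - t**2, 0.0))
        x_hi = x_t + extra_std * rng.standard_normal(m)
        sq_err = (toy_denoiser(x_t, t) - toy_denoiser(x_hi, t_hi)) ** 2
        # Importance weight: (sq_err / t^3) / q(t) = (sq_err / t^2) * log_ratio.
        total += np.mean(sq_err / t**2) * log_ratio
    return total


if __name__ == "__main__":
    for n in (10, 40):
        schedule = np.geomspace(0.002, 80.0, n + 1)
        print(f"n={n:3d}  estimated KLUB={estimate_klub(schedule):.3f}")
```

With an estimator of this kind, candidate schedules with the same number of steps can be compared, and the interior points \(t_1, \dots, t_{n-1}\) can be adjusted iteratively to lower the estimate. The optimizer and the importance distribution actually used are described in the paper and are not reproduced here.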

To evaluate the usefulness of optimized schedules, we performed rigorous quantitative experiments on standard image generation benchmarks (CIFAR10, FFHQ, ImageNet), and found that these schedules yield consistent improvements in image quality (measured by FID) for a large variety of popular samplers. We also performed a user study for text-to-image models (specifically Stable Diffusion 1.5), and found that on average images generated with these schedules are preferred twice as often. Please see the paper for these results and evaluations.

Below, we showcase some text-to-image examples that illustrate how using an optimized schedule can generate images with more visual details and better text-alignment given the same number of function evaluations (NFEs). We provide side-by-side comparisons of our optimized schedules against two of the most popular schedules used in practice (EDM and Time-Uniform). All images are generated with a stochastic (casino) or deterministic (lock) version of DPM-Solver++(2M) with 10 steps.

casino Text prompt: "1girl, blue dress, blue hair, ponytail, studying at the library, focused" Model: Dreamshaper 8

casino Text prompt: "An enchanting forest path with sunlight filtering through the dense canopy, highlighting the vibrant greens and the soft, mossy floor"

casino Text prompt: "A digital Illustration of the Babel tower, 4k, detailed, trending in artstation, fantasy vivid colors"

casino Text prompt: "A glass-blown vase with a complex swirl of colors, illuminated by sunlight, casting a mosaic of shadows on a white table"

casino Text prompt: "A delicate glass pendant holding a single, luminous firefly, its glow casting warm, dancing shadows on the wearer's neck"

casino Text prompt: "A wise old owl wearing a velvet smoking jacket and spectacles, with a pipe in its beak, seated in a vintage leather armchair"

casino Text prompt: "A close-up portrait of a baby wearing a tiny spider-man costume, trending on artstation" Model: Dreamshaper 8

casino Text prompt: "Capybara podcast neon sign"

casino Text prompt: "Long-exposure night photography of a starry sky over a mountain range, with light trails"

casino Text prompt: "A tranquil village nestled in a lush valley, with small, cozy houses dotting the landscape, surrounded by towering, snow-capped mountains under a clear blue sky. A gentle river meanders through the village, reflecting the warm glow of the sunrise"

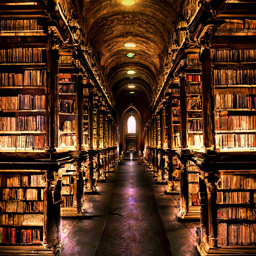

casino Text prompt: "An ancient library buried beneath the earth, its halls lit by glowing crystals, with scrolls and tomes stacked in endless rows"

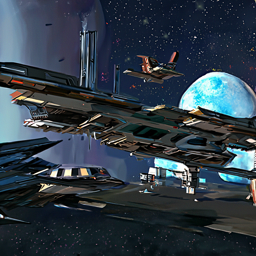

casino Text prompt: "A bustling spaceport on a distant planet, with ships of various designs taking off against a backdrop of twin moons"

casino Text prompt: "A set of ancient armor, standing as if worn by an invisible warrior, in front of a backdrop of medieval banners and weaponry."

casino Text prompt: "An elephant painting a colorful abstract masterpiece with its trunk, in a studio surrounded by amused onlookers."

casino Text prompt: "Tiger in construction gear, perched on aged wooden docks, formidable, curious, tiger on the waterfront, textured, vibrant, atmospheric, sharp focus, lifelike, professional lighting, cinematic, 8K"

casino Text prompt: "Cluttered house in the woods, anime, oil painting, high resolution, cottagecore, ghibli inspired, 4k"

casino Text prompt: "An old, creepy dollhouse in a dusty attic, with dolls posed in unsettling positions. Cobwebs, dim lighting, and the shadows of unseen presences create a chilling scene"

lock Text prompt: "A stunning, intricately detailed painting of a sunset in a forest valley, blending the rich, symmetrical styles of Dan Mumford and Marc Simonetti with astrophotography elements"

lock Text prompt: "Create a photorealistic scene of a powerful storm with swirling, dark clouds and fierce winds approaching a coastal village. Show villagers preparing for the storm, with detailed architecture reflecting a fantasy world"

lock Text prompt: "Cyberpunk cityscape with towering skyscrapers, neon signs, and flying cars"

We also studied the effect of optimized schedules in video generation using the open-source image-to-video model Stable Video Diffusion. We find that using optimized schedules leads to more stable videos with fewer color distortions as the video progresses. Below we show side-by-side comparisons of videos generated with 10 DDIM steps using the two different schedules.

@misc{sabour2024align,
  title={Align Your Steps: Optimizing Sampling Schedules in Diffusion Models},
  author={Amirmojtaba Sabour and Sanja Fidler and Karsten Kreis},
  year={2024},
  eprint={2404.14507},
  archivePrefix={arXiv},
  primaryClass={cs.CV}
}