Denoising diffusion models (DDMs) have shown promising results in 3D point cloud synthesis. To advance 3D DDMs and make them useful for digital artists, we require (i) high generation quality, (ii) flexibility for manipulation and applications such as conditional synthesis and shape interpolation, and (iii) the ability to output smooth surfaces or meshes. To this end, we introduce the hierarchical Latent Point Diffusion Model (LION) for 3D shape generation. LION is set up as a variational autoencoder (VAE) with a hierarchical latent space that combines a global shape latent representation with a point-structured latent space. For generation, we train two hierarchical DDMs in these latent spaces. The hierarchical VAE approach boosts performance compared to DDMs that operate on point clouds directly, while the point-structured latents are still ideally suited for DDM-based modeling. Experimentally, LION achieves state-of-the-art generation performance on multiple ShapeNet benchmarks. Furthermore, our VAE framework allows us to easily use LION for different relevant tasks: LION excels at multimodal shape denoising and voxel-conditioned synthesis, and it can be adapted for text- and image-driven 3D generation. We also demonstrate shape autoencoding and latent shape interpolation, and we augment LION with modern surface reconstruction techniques to generate smooth 3D meshes. We hope that LION provides a powerful tool for artists working with 3D shapes due to its high-quality generation, flexibility, and surface reconstruction.

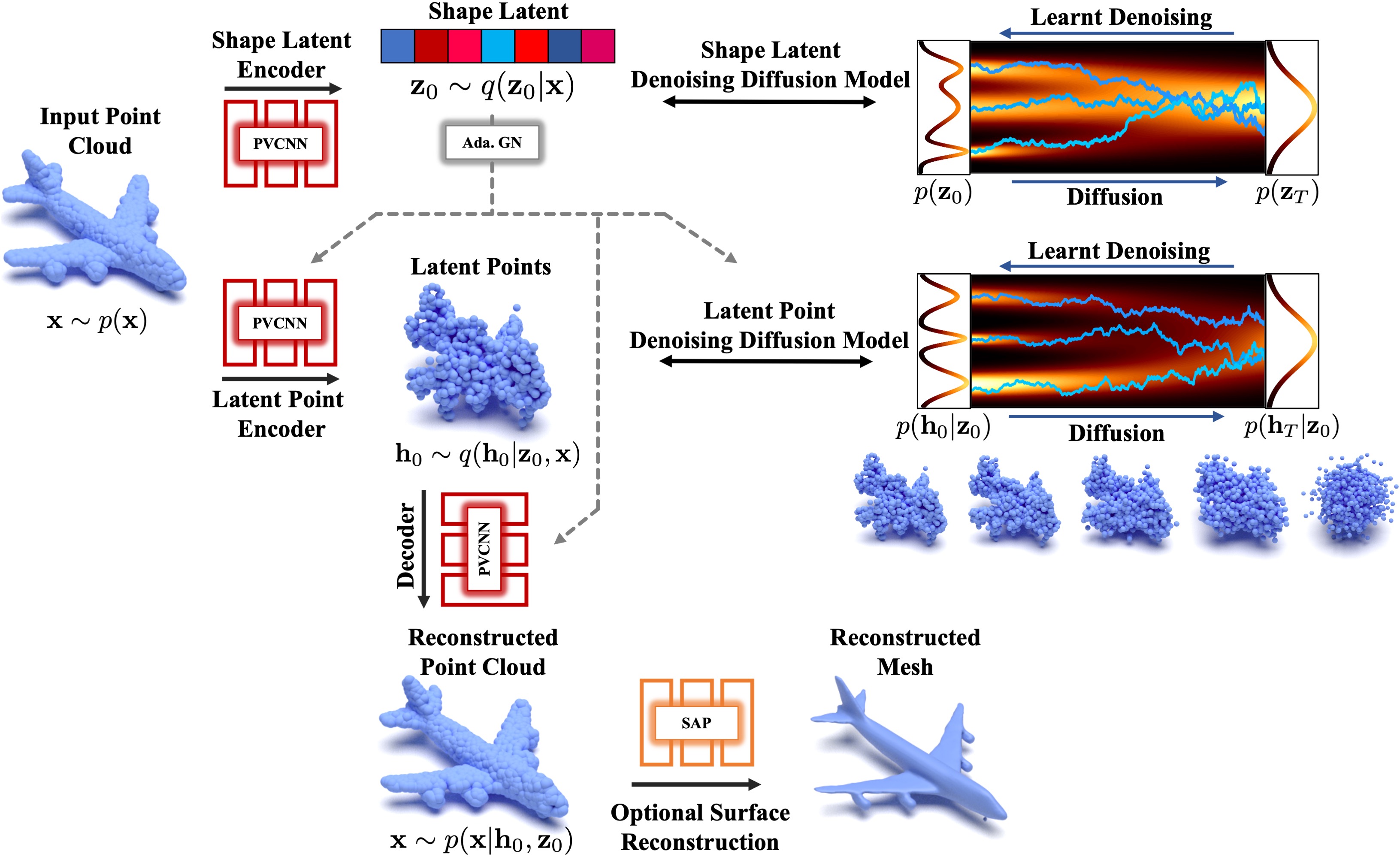

LION is set up as a hierarchical point cloud VAE with denoising diffusion models over the shape latent and latent point distributions.

Point-Voxel CNNs (PVCNN) with adaptive Group Normalization (Ada. GN) are used as neural networks.

The latent points can be interpreted as a smoothed version of the input point cloud.

Shape As Points (SAP) is optionally used for mesh reconstruction.

We introduce the Latent Point Diffusion Model (LION), a DDM for 3D shape generation. LION focuses on learning a 3D generative model directly from geometry data without image-based training. Similar to previous 3D DDMs in this setting, LION operates on point clouds. However, it is constructed as a VAE with DDMs in latent space. LION comprises a hierarchical latent space with a vector-valued global shape latent and another point-structured latent space. The latent representations are predicted with point cloud processing encoders, and two latent DDMs are trained in these latent spaces. Synthesis in LION proceeds by drawing novel latent samples from the hierarchical latent DDMs and decoding back to the original point cloud space. Importantly, we also demonstrate how to augment LION with modern surface reconstruction methods to synthesize smooth shapes as desired by artists. LION has multiple advantages:

Expressivity: By mapping point clouds into regularized latent spaces, the DDMs in latent space are effectively tasked with learning a smoothed distribution. This is easier than training on potentially complex point clouds directly, thereby improving expressivity. However, point clouds are, in principle, an ideal representation for DDMs. Because of that, we use latent points, this is, we keep a point cloud structure for our main latent representation. Augmenting the model with an additional global shape latent variable in a hierarchical manner further boosts expressivity.

Varying Output Types: Extending LION with Shape As Points (SAP) geometry reconstruction allows us to also output smooth meshes. Fine-tuning SAP on data generated by LION's autoencoder reduces synthesis noise and enables us to generate high-quality geometry. LION combines (latent) point cloud-based modeling, ideal for DDMs, with surface reconstruction, desired by artists.

Flexibility: Since LION is set up as a VAE, it can be easily adapted for different tasks without retraining the latent DDMs: We can efficiently fine-tune LION's encoders on voxelized or noisy inputs, which a user can provide for guidance. This enables multimodal voxel-guided synthesis and shape denoising. We also leverage LION's latent spaces for shape interpolation and autoencoding. Optionally training the DDMs conditioned on CLIP embeddings enables image- and text-driven 3D generation.

Generated point clouds and reconstructed meshes of airplanes.

Generated point clouds and reconstructed meshes of chairs.

Generated point clouds and reconstructed meshes of cars.

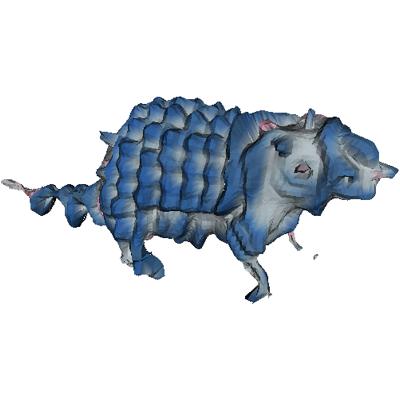

Generated point clouds and reconstructed meshes of animals (model trained on only 553 shapes).

Generated point clouds and reconstructed meshes of bottles (model trained on only 340 shapes).

Generated point clouds and reconstructed meshes of mugs (model trained on only 149 shapes).

Below we show samples from a LION model that was trained on shapes from multiple ShapeNet catgories,

Generated point clouds and reconstructed meshes. The LION model is trained on 13 ShapeNet categories jointly without conditioning.

The hierarchical VAE architecture of LION is crucial for scalability and capturing diverse shape data. It allows the model to learn a multimodal distribution over different categories without the need for class-conditioning. We find that the shape latent variables capture global shape, while the latent points model details. We validate this by fixing the shape variable to different values and only sampling different latent points.

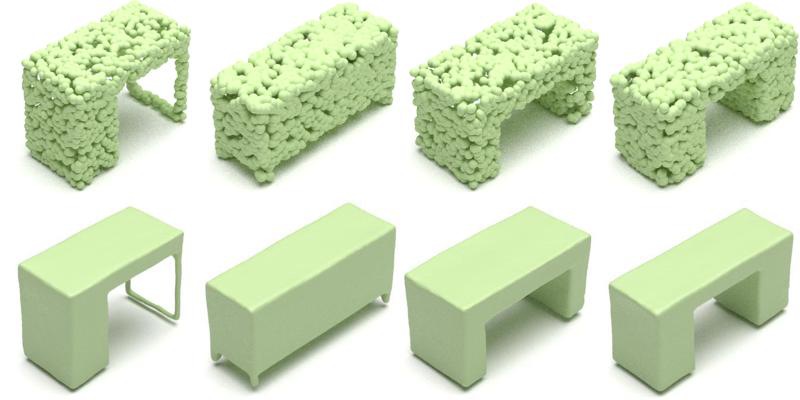

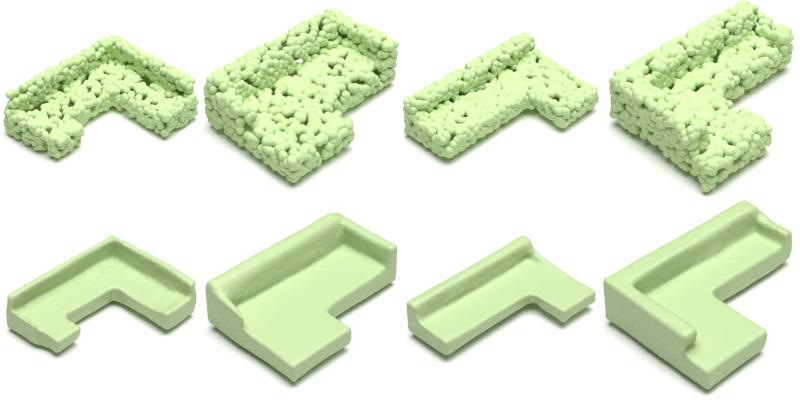

For each group of shapes, we fix the shape latent variables and sample different latent points to generate the different shapes. We use the model that was trained on 13 ShapeNet categories.

Below we show samples from a LION model that was trained on all 55 ShapeNet categories

Generated point clouds from a LION model that was trained on all 55 ShapeNet categories jointly without conditioning.

LION can interpolate shapes by traversing the latent space (interpolation is performed in the latent diffusion models' prior space, using the Probability Flow ODE for deterministic DDM-generation). The generated shapes are clean and semantically plausible along the entire interpolation path.

Leftmost shape: the source shape. Rightmost shape: the target shape. The shapes in the middle are interpolated between source and target shape.

LION traverses the latent space and interpolates many different shapes.

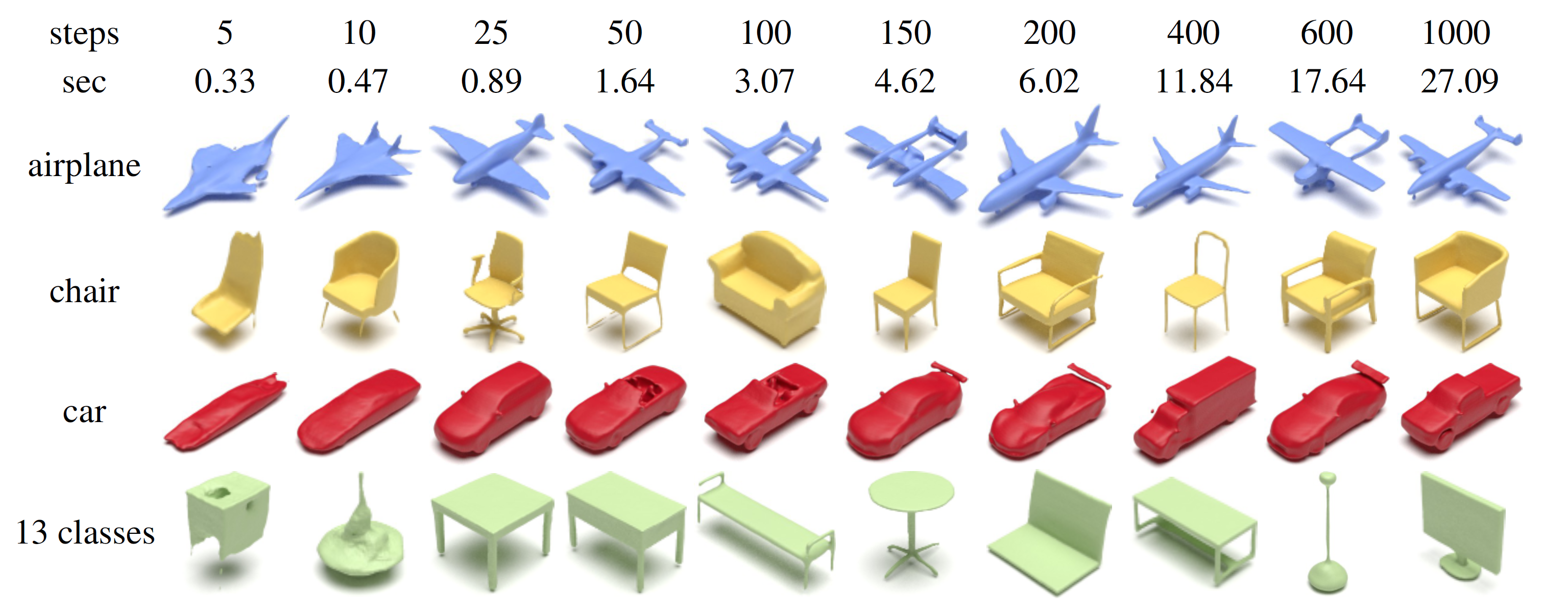

LION's sampling time can be reduced by using fast DDM sampler, such as the DDIM sampler. DDIM sampling with 25 steps can already generate high-quality shapes, which takes less than 1 second. This enables real-time and interactive applications.

DDIM samples from LION trained on different data. The top two rows show the number of steps and the wall-clock time required when drawing one sample. With DDIM sampling, we can reduce the sampling time from 27.09 seconds (1000 steps) to less than 1 second (25 steps) to generate an object.

LION can synthesize different variations of a given shape, enabling multimodal generation in a controlled manner. This is achieved through a diffuse-denoise procedure, where shapes are diffused for only a few steps in the latent DDMs and then denoised again.

Multimodal generation of airplanes.

Multimodal generation of chairs.

Multimodal generation of cars.

Given a coarse voxel grid, LION can generate different plausible detailed shapes. In practice, an artist using a 3D generative model may have a rough idea of the desired shape. For instance, they may be able to quickly construct a coarse voxelized shape, to which the generative model then adds realistic details. We achieve this by fine-tuning our encoder networks on voxelized shapes, and performing a few steps of diffuse-denoise in latent space to generate various plausible detailed shapes.

Left: Input voxel grid. Right: two point clouds generated by LION and the reconstructed mesh.

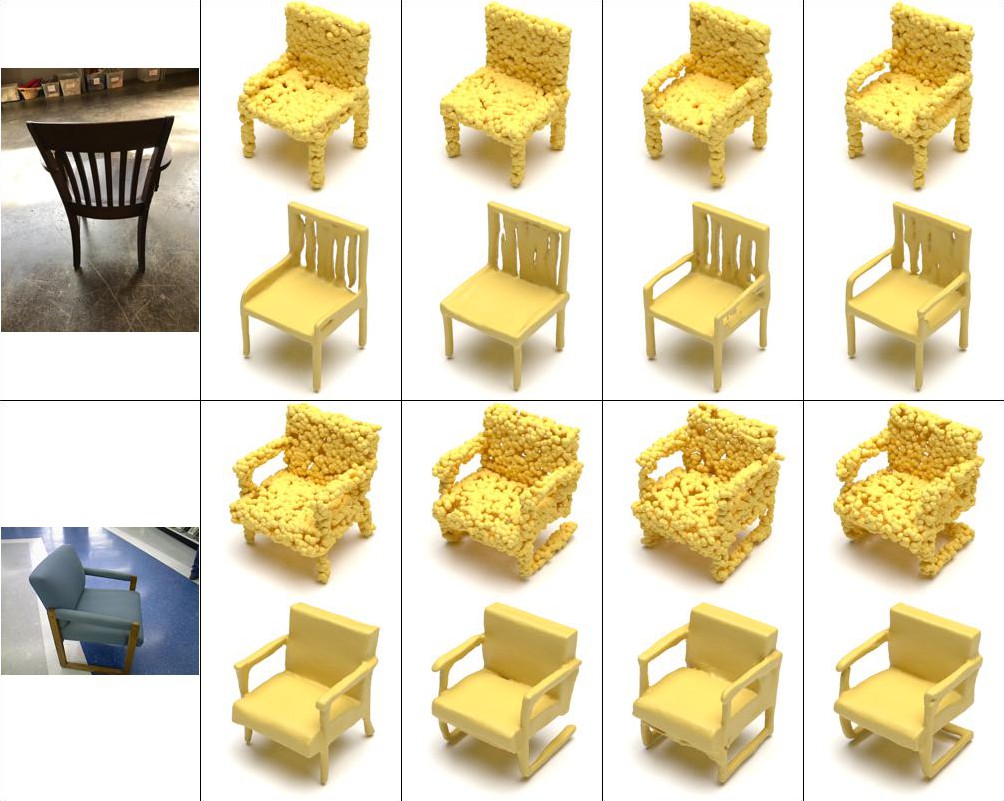

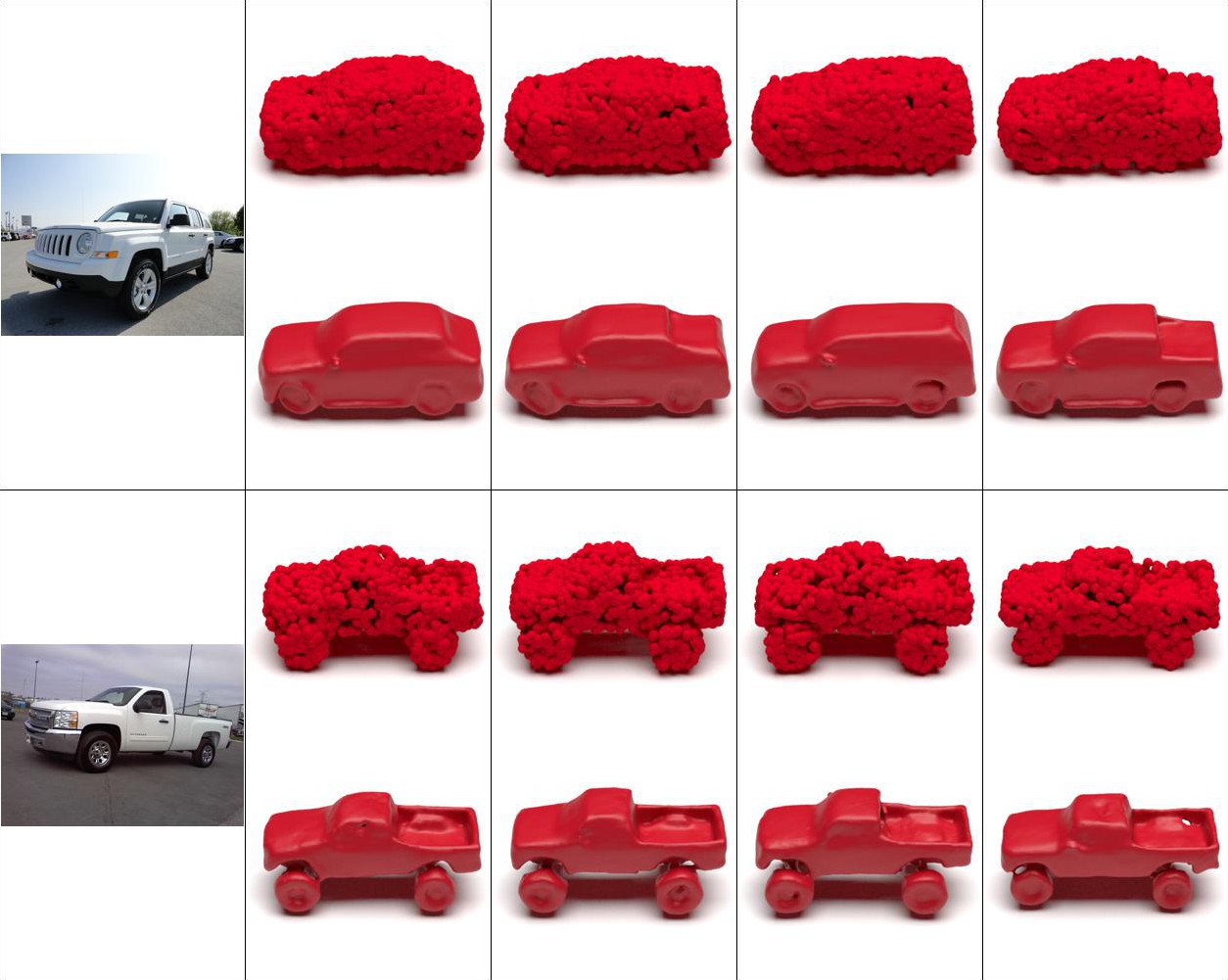

We qualitatively demonstrate how LION can be extended to also allow for single view reconstruction (SVR) from RGB data, using the approach from CLIP-Forge. We render 2D images from the 3D ShapeNet shapes, extract the images' CLIP image embeddings, and train LION's latent diffusion models while conditioning on the shapes' CLIP image embeddings. At test time, we then take a single view 2D image, extract the CLIP image embedding, and generate corresponding 3D shapes, thereby effectively performing SVR. We show SVR results from real RGB data.

Single view reconstruction from RGB images of chairs. For each input image, LION can generate multi-modal outputs.

More single view reconstructions from RGB images of cars.

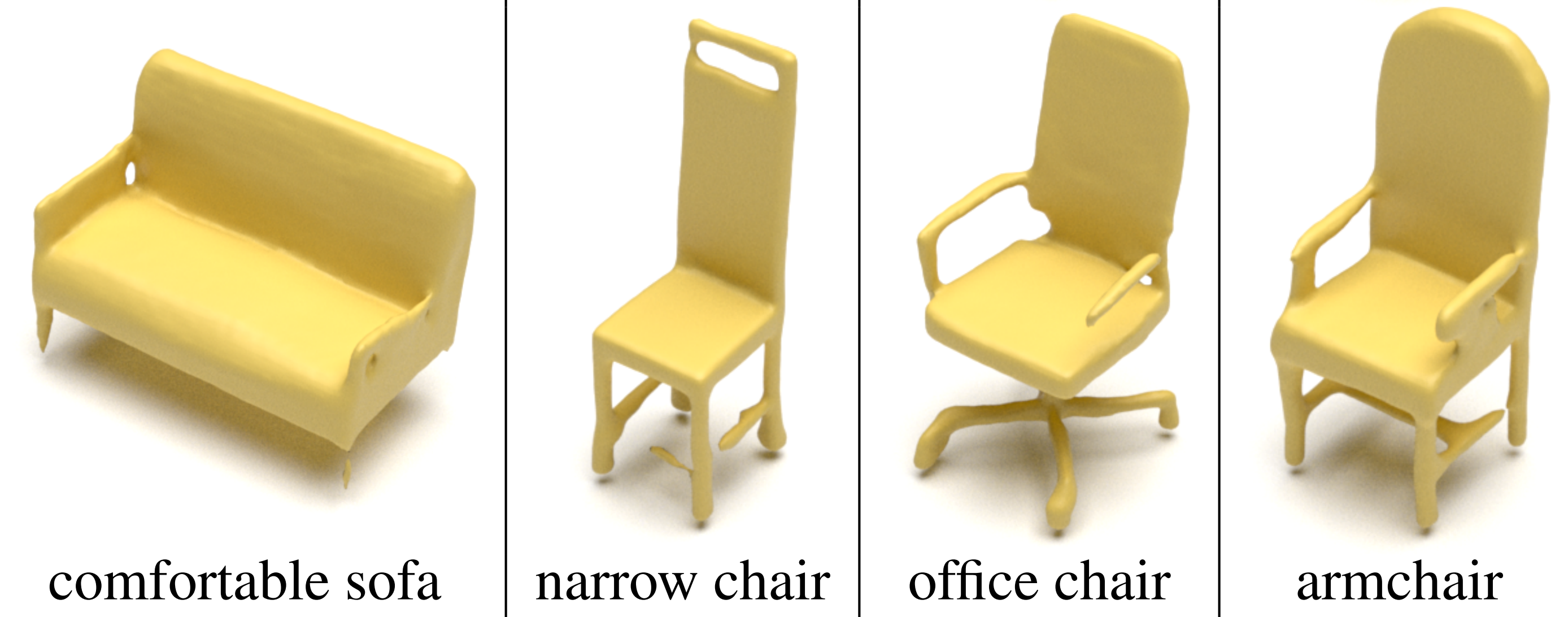

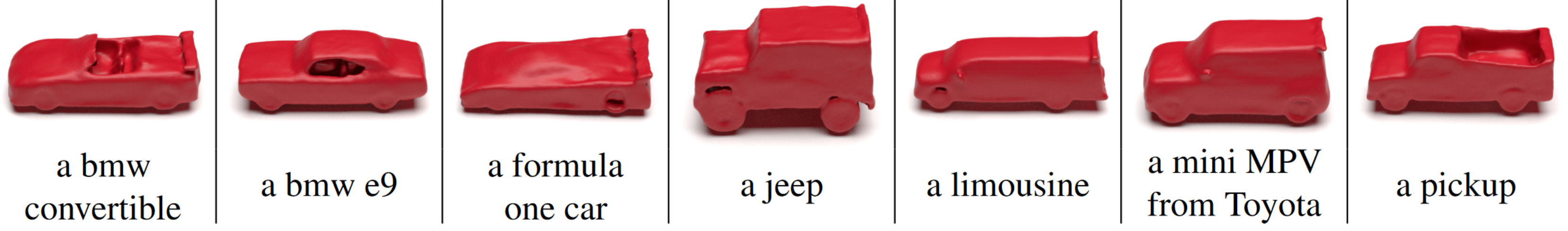

Using CLIP's text encoder, our method additionally allows for text-guided generation.

Text-driven shape generation of chairs with LION. Bottom row is the text input.

We apply Text2Mesh on some generated meshes from LION to additionally synthesize textures in a text-driven manner, leveraging CLIP. The original input meshes are generated by LION. This is only possible because LION can output practically useful meshes with its SAP-based surface reconstruction component (even though the backbone generative modeling component is point cloud-based).

LION: Latent Point Diffusion Models for 3D Shape Generation

Xiaohui Zeng, Arash Vahdat, Francis Williams, Zan Gojcic, Or Litany, Sanja Fidler, Karsten Kreis

Advances in Neural Information Processing Systems (NeurIPS), 2022

@inproceedings{zeng2022lion,

title={LION: Latent Point Diffusion Models for 3D Shape Generation},

author={Xiaohui Zeng and Arash Vahdat and Francis Williams and Zan Gojcic and Or Litany and Sanja Fidler and Karsten Kreis},

booktitle={Advances in Neural Information Processing Systems (NeurIPS)},

year={2022}

}