We introduce the NVFAIR dataset as a part of our work on avatar fingerprinting for authorized use of synthetic talking-head videos (webpage). Traditionally, detecting real vs. synthetic videos has been a popular task in the video forensics research community. With the prevalence of synthetically-generated content, we posit that detecting authorized use of synthetic talking-head videos is becoming an increasingly pertinent problem. To train and test models that detect such authorized use, we desire a dataset that contains: 1) multiple videos from a diverse set of subjects, capturing them in various situations (posed, free-form, with and without prescribed expressions), 2) a subset of data where all subjects speak the same content, and 3) synthetically-generated face-reenactment videos featuring both self-reenactments (where subjects drive an image of their own face) and cross-reenactments (where several subjects drive a given target identity). Existing datasets, which have mainly been designed for deepfake detection, do not capture all the desired properties. We propose the NVIDIA FAcIal Reenactment (NVFAIR) dataset to fill this void: it is the largest collection of facial reenactment videos to date, features both self- and cross-reenactments (exhaustively sampled) across all 161 unique subjects in the dataset, and contains a diverse set of subjects captured in a variety of settings. More details below.

Release Updates

- The NVFAIR dataset version 1 (NVFAIR-v1) is now available for access via the data request form.

Data Capture Details

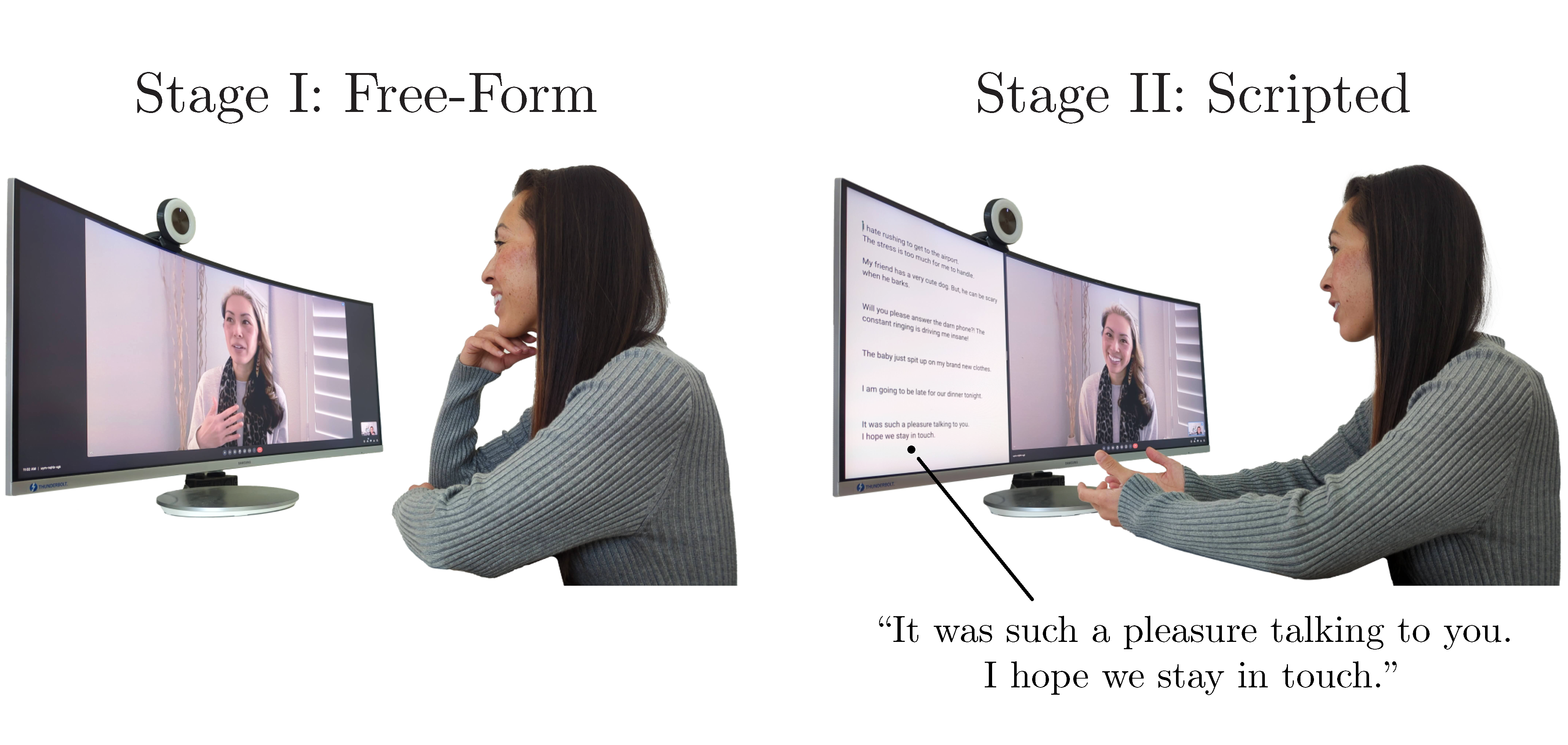

We begin the data capture process by asking 46 subjects to engage in recorded video calls in pairs. Relevant per-subject data is clipped out of these calls and made available as standalone files. This forms the original/real component of our dataset (Figure 1). We ask subjects to interact over recorded video calls, instead of asking them to simply record themselves, since video calls allow for a more natural interaction. Each video call proceeds in two stages. In the first stage, subjects speak on 7 common everyday topics in a free-form monologue when prompted by their recording partner (and the speaker and prompter switch roles for each topic). Next, subjects memorize and recite a predetermined set of scripted monologues to their partners in a manner most natural to them. In Figure 2, we show a subset of the real data.

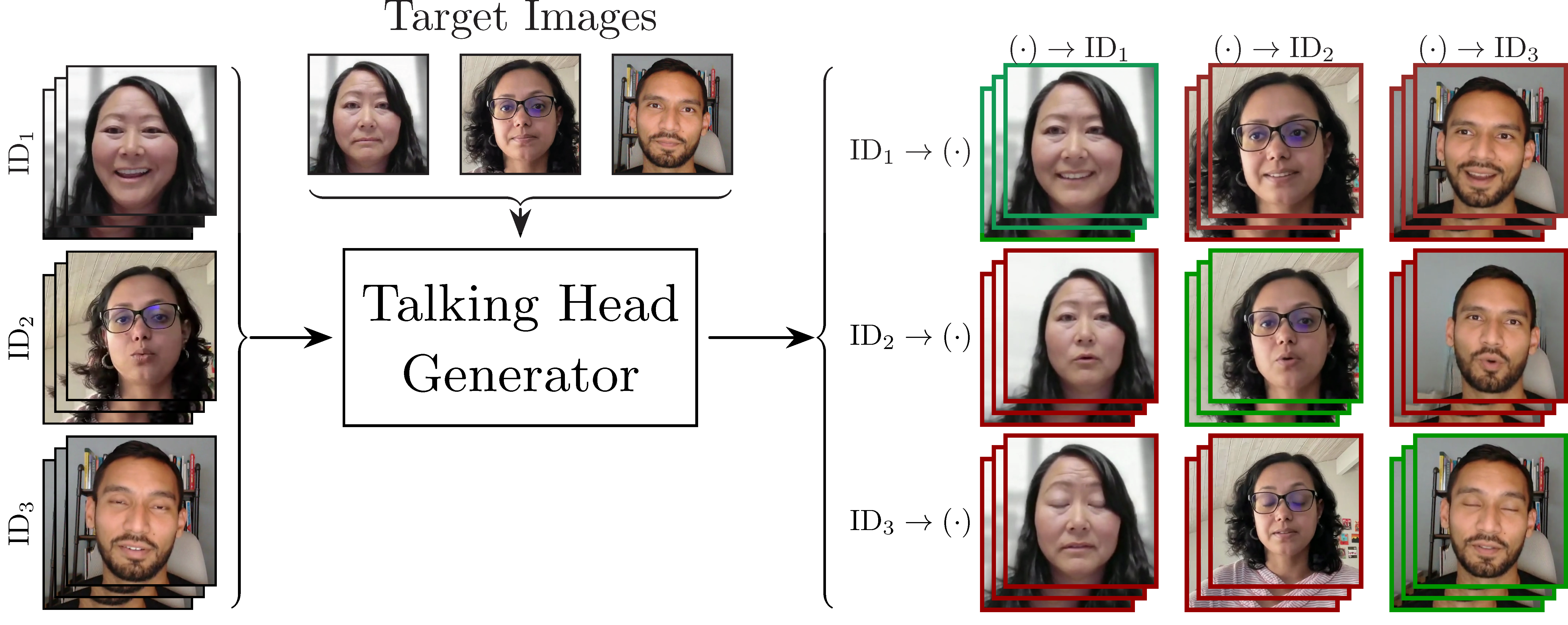

Our dataset also contains a synthetic component (Figure 3): talking-head videos generated using three facial reenactment methods: 1) face-vid2vid, 2) LIA (currently released for the test set only), and 3) TPS (currently released for the test set only). To generate these synthetic videos, we pool the data of the 46 subjects from our video call-based data capture with those from the RAVDESS and CREMA-D datasets, leading to a total of 161 unique identities in the synthetic part of our dataset. For each synthetic talking-head video, a neutral facial image of an identity is driven either by expressions from that identity's own videos (termed "self-reenactment") or by the videos of all other identities (termed "cross-reenactment"). In a self-reenactment, the identity shown in the synthetic video (the "target" identity) matches the identity driving the video (the "driving" identity). In contrast, the target and driving identities differ in a cross-reenactment, a case which could potentially indicate unauthorized use of the target identity. By recording scripted and free-form monologues for the original data of the 46 subjects, and providing synthetically-generated self- and cross-reenactments for all identities (including those from RAVDESS and CREMA-D), we combine many unique properties in our dataset. This makes our dataset well-suited for training and evaluating avatar fingerprinting models, and it can also be beneficial for related tasks such as detection of synthetically-generated content.
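
As a rough illustration of the self- and cross-reenactment pairing described above, the following Python sketch enumerates every ordered (target, driving) identity pair and labels it. The identity labels and the function name `enumerate_reenactment_pairs` are hypothetical and are not part of the dataset or its tooling. The counts are identity pairs only, under the assumption that every identity drives every other identity; they say nothing about how many video clips are released per pair or per reenactment method.

```python
from itertools import product


def enumerate_reenactment_pairs(identities):
    """Return (target, driver, kind) for every ordered pair of identities.

    kind is "self" when the driving identity matches the target identity,
    and "cross" otherwise.
    """
    pairs = []
    for target, driver in product(identities, repeat=2):
        kind = "self" if target == driver else "cross"
        pairs.append((target, driver, kind))
    return pairs


# Hypothetical placeholder labels; the real dataset uses its own naming scheme.
# 161 is the number of unique identities stated in the text.
identities = [f"id{i:03d}" for i in range(161)]
pairs = enumerate_reenactment_pairs(identities)

num_self = sum(1 for _, _, kind in pairs if kind == "self")
num_cross = sum(1 for _, _, kind in pairs if kind == "cross")
print(num_self, num_cross)  # 161 self pairs, 161 * 160 = 25760 cross pairs
```

For an avatar fingerprinting model, the "self" pairs would correspond to authorized use of a target identity, and the "cross" pairs to potentially unauthorized use.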

Additional Considerations

We acknowledge the societal importance of introducing guardrails when it comes to the use of talking-head generation technology. We present this dataset as a step towards trustworthy use of such technologies. Nevertheless, our work could be misconstrued as having solved the problem and inadvertently accelerate the unhindered adoption of talking-head technology. We do not advocate for this. Instead, we emphasize that this is only the first work on this topic and underscore the importance of further research in this area. Since our dataset contains human subjects' facial data, we have taken many steps to ensure proper use and governance: obtaining IRB approval, obtaining informed consent prior to data capture, removing subject identity information, pre-specifying the subject matter that can be discussed in the videos, and allowing subjects the freedom to revoke our access to their provided data at any point in the future (and stipulating that interested third parties maintain current contact information with us so we can convey these changes to them).

Acknowledgments

We would like to thank the participants for contributing their facial audio-visual recordings for our dataset, and Desiree Luong, Woody Luong, and Josh Holland for their help with Figure 1. We thank David Taubenheim for the voiceover in the demo video for our work, and Abhishek Badki for his help with the training infrastructure. We acknowledge Joohwan Kim, Rachel Brown, Anjul Patney, Ruth Rosenholtz, Ben Boudaoud, Josef Spjut, Saori Kaji, Nikki Pope, Daniel Rohrer, Rochelle Pereira, Rajat Vikram Singh, Candice Gerstner, Alex Qi, and Kai Pong for discussions and feedback on the security aspects, data capture and generation steps, informed consent form, photo release form, and agreements for data governance and third-party data sharing. We base this website off of the StyleGAN3 website template. Koki Nagano, Ekta Prashnani, and David Luebke were partially supported by DARPA’s Semantic Forensics (SemaFor) contract (HR0011-20-3-0005). This research was funded, in part, by DARPA’s Semantic Forensics (SemaFor) contract HR0011-20-3-0005. The views and conclusions contained in this document are those of the authors and should not be interpreted as representing the official policies, either expressed or implied, of the U.S. Government. Distribution Statement “A” (Approved for Public Release, Distribution Unlimited).

Citing the NVFAIR dataset

@article{prashnani2023avatar,
  title={Avatar Fingerprinting for Authorized Use of Synthetic Talking-Head Videos},
  author={Ekta Prashnani and Koki Nagano and Shalini De Mello and David Luebke and Orazio Gallo},
  journal={arXiv preprint arXiv:2305.03713},
  year={2023}
}