Lyra 2.0: Explorable Generative 3D Worlds

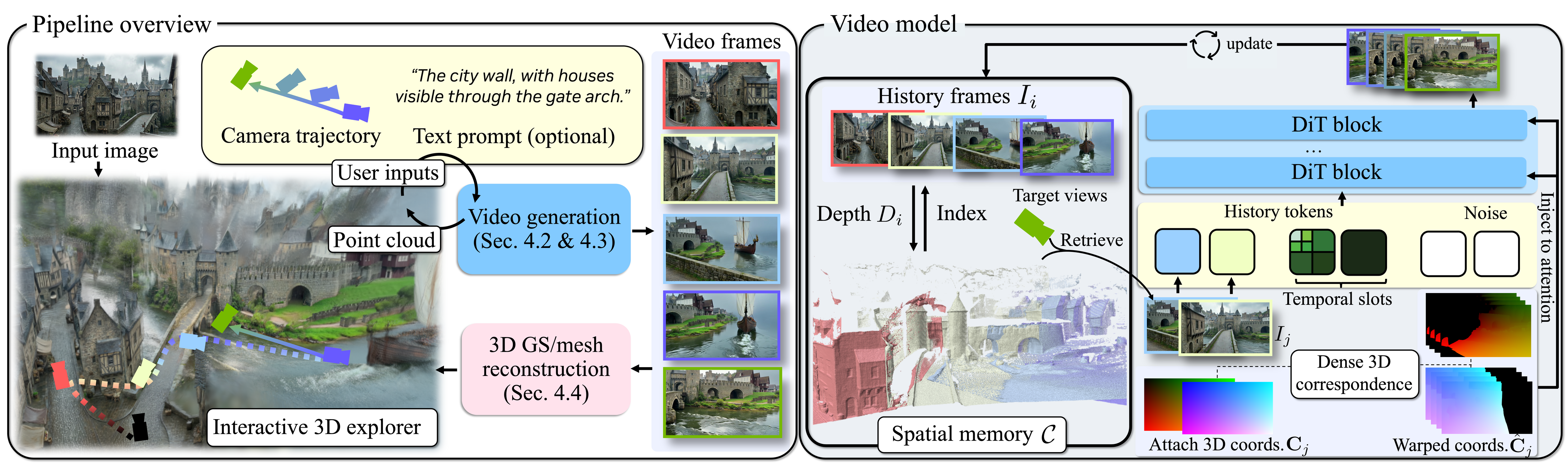

TL;DR: We generate camera-controlled walkthrough videos and lift them to 3D via feed-forward reconstruction. To enable long-horizon, 3D-consistent generation, we address spatial forgetting with per-frame geometry for information routing and temporal drifting with self-augmented training that teaches the model to correct drift.

News

🚀 [April 15, 2026] Paper, model weights, and inference code are now publicly available!

🔒 [May 8, 2026] Page temporarily anonymized. Please Google for code, model, and citation.

Gallery

Creating a simulation-ready 3D environment with an interactive GUI

We build an interactive GUI that visualizes the accumulated point clouds and lets users plan camera trajectories that revisit previously explored regions or venture into unobserved areas. Lyra 2.0 progressively generates the scene as the user moves through it.

The generated video can be further lifted into 3DGS and meshes, which can be directly exported to physics engines for downstream applications. We provide examples of exporting the scene into Isaac Sim for physically grounded robot navigation and interaction, highlighting the potential for scalable embodied AI simulation.

Scene Exploration


Diverse Scene Generation

Click Show GS to switch from the directly generated video to a video rendered from the generated Gaussian splats.

Abstract

Recent advances in video generation enable a new paradigm for 3D scene creation: generating camera-controlled videos that simulate scene walkthroughs, then lifting them to 3D via feed-forward reconstruction techniques. This generative reconstruction approach combines the visual fidelity and creative capacity of video models with 3D outputs ready for real-time rendering and simulation.

Scaling to large, complex environments requires 3D-consistent video generation over long camera trajectories with large viewpoint changes and location revisits, a setting where current video models degrade quickly. Existing methods for long-horizon generation are fundamentally limited by two forms of degradation: spatial forgetting and temporal drifting. As exploration proceeds, previously observed regions fall outside the model's temporal context, forcing the model to hallucinate structures when those regions are revisited. Meanwhile, autoregressive generation accumulates small synthesis errors over time, gradually distorting scene appearance and geometry.

We present Lyra 2.0, a framework for generating persistent, explorable 3D worlds at scale. To address spatial forgetting, we maintain per-frame 3D geometry and use it solely for information routing—retrieving relevant past frames and establishing dense correspondences with the target viewpoints—while relying on the generative prior for appearance synthesis. To address temporal drifting, we train with self-augmented histories that expose the model to its own degraded outputs, teaching it to correct drift rather than propagate it. Together, these enable substantially longer and 3D-consistent video trajectories, which we leverage to fine-tune feed-forward reconstruction models that reliably recover high-quality 3D scenes.
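To make the idea of self-augmented histories more concrete, here is a minimal sketch of how a training history might be built. It is an illustration under stated assumptions, not the released training code: the names `self_augmented_history`, `rollout`, and `p_augment`, the per-frame mixing probability, and the toy model are all hypothetical. The only point it tries to capture is that some history frames are replaced by the model's own imperfect predictions while the training target stays clean.

```python
import random
from typing import Callable, List, Sequence

Frame = List[float]  # stand-in for an image or latent (assumption)


def self_augmented_history(
    clean_history: Sequence[Frame],
    rollout: Callable[[Sequence[Frame]], Frame],
    p_augment: float,
    rng: random.Random,
) -> List[Frame]:
    """Replace some ground-truth history frames with model predictions.

    Each frame after the first is re-generated from the (possibly already
    augmented) frames before it with probability p_augment, so errors can
    compound the way they do at inference time. The first frame is always
    kept clean. clean_history must be non-empty.
    """
    history = [list(clean_history[0])]
    for frame in clean_history[1:]:
        if rng.random() < p_augment:
            history.append(rollout(history))  # degraded, self-generated frame
        else:
            history.append(list(frame))  # ground-truth frame
    return history


def toy_rollout(history: Sequence[Frame]) -> Frame:
    # Hypothetical imperfect model: repeats the last frame with a small bias,
    # which mimics slow drift when applied repeatedly.
    return [x + 0.1 for x in history[-1]]


clean = [[float(i)] for i in range(5)]
augmented = self_augmented_history(clean, toy_rollout, 1.0, random.Random(0))
print(augmented)
```

In a training loop built this way, the model would be conditioned on `augmented` but supervised with the clean next frame, so the loss rewards pulling a drifted history back toward the ground truth.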

Method

Method overview. (Left) Given an input image, Lyra 2.0 iteratively generates video segments guided by a user-defined camera trajectory from an interactive 3D explorer and an optional text prompt, lifting each segment into 3D point clouds that are fed back for continued navigation. Generated video frames are finally reconstructed and exported as 3D Gaussians or meshes. (Right) At each step, history frames with maximal visibility of the target views are retrieved from the spatial memory. Their canonical coordinates are warped to establish dense 3D correspondences and injected into the diffusion transformer (DiT) via attention, together with compressed temporal history.
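The retrieval step on the right can be sketched roughly as follows. This is a simplified illustration and not the actual implementation: it assumes a pinhole camera with the convention x_cam = R x_world + t, scores each history frame by the fraction of coarse target-image cells that its point cloud lands in, and ignores occlusion. The function names, the `cell` size, and the scoring rule are all assumptions made for the example.

```python
from typing import List, Optional, Sequence, Tuple

Vec3 = Tuple[float, float, float]
Mat3 = Sequence[Sequence[float]]  # row-major 3x3 rotation


def project(p: Vec3, R: Mat3, t: Vec3, f: float,
            cx: float, cy: float) -> Optional[Tuple[float, float]]:
    """Pinhole projection of a world point; None if it is behind the camera."""
    xc = [sum(R[i][j] * p[j] for j in range(3)) + t[i] for i in range(3)]
    if xc[2] <= 1e-6:
        return None
    return f * xc[0] / xc[2] + cx, f * xc[1] / xc[2] + cy


def visibility(points: Sequence[Vec3], R: Mat3, t: Vec3, f: float,
               width: int, height: int, cell: int = 8) -> float:
    """Fraction of target-image cells hit by at least one projected point."""
    cols, rows = -(-width // cell), -(-height // cell)  # ceiling division
    hit = set()
    for p in points:
        uv = project(p, R, t, f, width / 2, height / 2)
        if uv is None:
            continue
        u, v = uv
        if 0 <= u < width and 0 <= v < height:
            hit.add((int(u) // cell, int(v) // cell))
    return len(hit) / (cols * rows)


def retrieve(memory: Sequence[Sequence[Vec3]], R: Mat3, t: Vec3, f: float,
             width: int, height: int, k: int = 2) -> Tuple[List[int], List[float]]:
    """Indices of the k history frames with the highest visibility score."""
    scores = [visibility(pts, R, t, f, width, height) for pts in memory]
    order = sorted(range(len(memory)), key=lambda i: scores[i], reverse=True)
    return order[:k], scores


def grid(xs: Sequence[int], z: float) -> List[Vec3]:
    # Toy per-frame point cloud: a planar grid of points at depth z.
    return [(float(x), float(y), z) for x in xs for y in range(-3, 4)]


identity = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
memory = [
    grid(range(-3, 4), 5.0),   # frame 0: fully in front of the target camera
    grid(range(-3, 4), -5.0),  # frame 1: behind the target camera
    grid(range(0, 4), 5.0),    # frame 2: covers only part of the view
]
top, scores = retrieve(memory, identity, (0.0, 0.0, 0.0), 32.0, 64, 64, k=2)
print(top)  # [0, 2]
```

Counting coarse cells instead of raw points keeps a dense but narrow point cloud from outranking a sparser one that covers more of the target view. A fuller version would also need depth testing so that occluded points do not count as visible.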