Falling Things: A Synthetic Dataset for 3D Object Detection and Pose Estimation

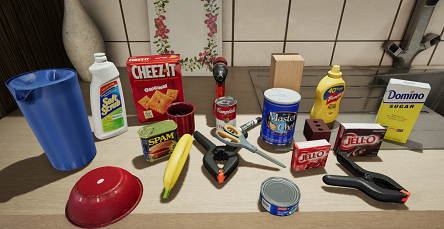

We present a new dataset, called Falling Things (FAT), for advancing the state of the art in object detection and 3D pose estimation in the context of robotics. By synthetically combining object models and backgrounds of complex composition and high graphical quality, we are able to generate photorealistic images with accurate 3D pose annotations for all objects in all images. Our dataset contains 60k annotated photos of 21 household objects taken from the YCB dataset. For each image, we provide the 3D poses, per-pixel class segmentation, and 2D/3D bounding box coordinates for all objects. To facilitate testing different input modalities, we provide mono and stereo RGB images, along with registered dense depth images. We describe in detail the generation process and statistical analysis of the data. Note: The dataset is available via the link below. See `readme.txt` below for a detailed description.

Copyright

This material is posted here with permission of the IEEE. Internal or personal use of this material is permitted. However, permission to reprint/republish this material for advertising or promotional purposes or for creating new collective works for resale or redistribution must be obtained from the IEEE by writing to pubs-permissions@ieee.org.