EOE: Expected Overlap Estimation over Unstructured Point Cloud Data

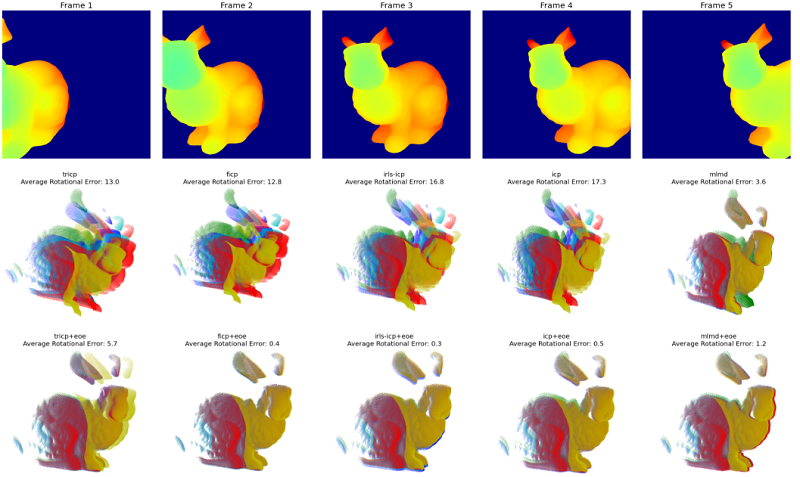

We present an iterative overlap estimation technique that augments existing point cloud registration algorithms, allowing them to achieve high performance in difficult real-world situations where large pose displacement and non-overlapping geometry would otherwise cause traditional methods to fail. Our approach estimates overlapping regions through an iterative Expectation Maximization procedure that encodes the sensor field of view into the registration process. The proposed technique, Expected Overlap Estimation (EOE), is derived from the observation that differences in field of view violate the i.i.d. assumption implicitly held by all maximum-likelihood-based registration techniques. We demonstrate how our approach can augment many popular registration methods with minimal computational overhead. Through experiments on both synthetic and real-world datasets, we find that adding an explicit overlap estimation step aids robust outlier handling and increases the accuracy of both ICP-based and GMM-based methods, especially in large unstructured domains and where the overlap between point clouds is very small.
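To make the idea of alternating between overlap estimation and registration concrete, the following is a minimal sketch and not the EOE formulation from the paper. It assumes a hard (binary) overlap decision rather than a probabilistic expectation, a cone-shaped field of view about the fixed sensor's +z axis, brute-force nearest neighbours, and a point-to-point least-squares update. The names `in_fov`, `kabsch`, and `register_with_overlap`, along with the parameters `half_angle_rad` and `max_range`, are hypothetical and chosen only for illustration.

```python
import numpy as np

def in_fov(points, half_angle_rad, max_range):
    """Mask of points inside a cone about +z with apex at the origin.

    This cone is an assumed, simplified field-of-view model.
    """
    r = np.linalg.norm(points, axis=1)
    cos_angle = points[:, 2] / np.maximum(r, 1e-12)
    return (r <= max_range) & (cos_angle >= np.cos(half_angle_rad))

def kabsch(src, dst):
    """Least-squares rigid transform (R, t) such that dst ~= src @ R.T + t."""
    src_c, dst_c = src.mean(axis=0), dst.mean(axis=0)
    H = (src - src_c).T @ (dst - dst_c)
    U, _, Vt = np.linalg.svd(H)
    # Guard against reflections so that R is a proper rotation.
    d = np.sign(np.linalg.det(Vt.T @ U.T))
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    t = dst_c - R @ src_c
    return R, t

def register_with_overlap(moving, fixed, half_angle_rad, max_range, iters=30):
    """Alternate a hard overlap estimate with a rigid alignment update.

    `fixed` is expressed in the fixed sensor's frame; `moving` is the cloud
    to be aligned to it. Requires iters >= 1.
    """
    R, t = np.eye(3), np.zeros(3)
    for _ in range(iters):
        moved = moving @ R.T + t
        # Overlap step: keep only moving points that, under the current pose,
        # fall inside the fixed sensor's assumed field of view.
        mask = in_fov(moved, half_angle_rad, max_range)
        if mask.sum() < 3:
            break  # too few points to estimate a rigid transform
        # Correspondence step: brute-force nearest neighbour in `fixed`.
        d2 = ((moved[mask][:, None, :] - fixed[None, :, :]) ** 2).sum(axis=2)
        nearest = fixed[d2.argmin(axis=1)]
        # Alignment step: refit the pose using only the overlapping subset.
        R, t = kabsch(moving[mask], nearest)
    return R, t, mask
```

Points that leave the assumed field of view under the current pose simply drop out of the alignment step, which is the sense in which non-overlapping geometry stops biasing the estimate. A soft variant would replace the boolean mask with per-point weights in the least-squares fit.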

Copyright

This material is posted here with permission of the IEEE. Internal or personal use of this material is permitted. However, permission to reprint/republish this material for advertising or promotional purposes or for creating new collective works for resale or redistribution must be obtained from the IEEE by writing to pubs-permissions@ieee.org.