Domain Stylization: A Fast Covariance Matching Framework towards Domain Adaptation

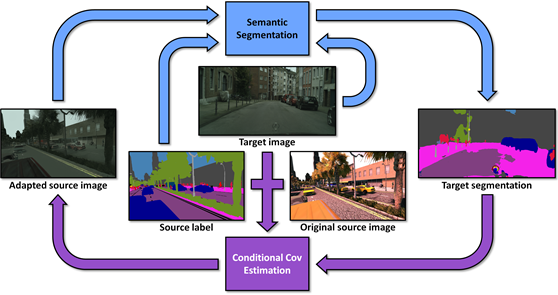

Synthetic images rendered with computer graphics (CG) have been widely used to create simulation environments for robotics and autonomous driving and to generate labeled data. Yet training models purely on synthetic data remains challenging because of the considerable domain gap caused by current limitations in rendering. In this paper, we propose a simple yet effective domain adaptation framework for closing this gap at the image level. Unlike many GAN-based approaches, our method matches the covariance of universal feature embeddings across domains. This makes adaptation a fast, convenient "on-the-fly" step and avoids potentially difficult GAN training. To align domains more precisely, we further propose a conditional covariance matching framework that iteratively estimates semantic segmentation regions and conditionally matches the class-wise feature covariance given those regions. We demonstrate that the two tasks mutually refine and considerably improve each other, leading to state-of-the-art domain adaptation results. Extensive experiments under multiple synthetic-to-real settings show that our approach exceeds the performance of the latest domain adaptation approaches. In addition, we offer a quantitative analysis in which our framework considerably reduces the Fréchet Inception Distance (FID) between source and target domains, demonstrating its effectiveness in bridging the synthetic-to-real domain gap.
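The covariance matching idea described above can be illustrated with a small whitening-and-coloring sketch in plain Python. This is not the authors' implementation. It assumes features arrive as one vector per pixel (lists of floats), and it uses a Cholesky factorization for whitening and coloring. That is a swapped-in choice, since WCT-style stylization methods commonly use an eigendecomposition instead. The function names `match_covariance` and `classwise_match` are hypothetical. The class-wise variant imitates conditional matching by restricting statistics to pixels that share a segmentation label. The iterative re-estimation of the segmentation is not shown.

```python
# Hypothetical sketch of (class-wise) feature covariance matching.
# Not the paper's implementation; names and the Cholesky choice are assumptions.
import math
from collections import defaultdict
from typing import Dict, List, Sequence

Matrix = List[List[float]]


def mean(vecs: Sequence[Sequence[float]]) -> List[float]:
    """Per-channel mean of a set of feature vectors (one vector per pixel)."""
    n, d = len(vecs), len(vecs[0])
    return [sum(v[i] for v in vecs) / n for i in range(d)]


def covariance(vecs, mu, eps=1e-5) -> Matrix:
    """Population covariance, plus eps * I so the Cholesky step never fails."""
    d = len(mu)
    cov = [[0.0] * d for _ in range(d)]
    for v in vecs:
        c = [v[i] - mu[i] for i in range(d)]
        for i in range(d):
            for j in range(d):
                cov[i][j] += c[i] * c[j]
    for i in range(d):
        for j in range(d):
            cov[i][j] /= len(vecs)
        cov[i][i] += eps
    return cov


def cholesky(a: Matrix) -> Matrix:
    """Lower-triangular L with L @ L^T == a (a must be symmetric positive definite)."""
    d = len(a)
    L = [[0.0] * d for _ in range(d)]
    for i in range(d):
        for j in range(i + 1):
            s = sum(L[i][k] * L[j][k] for k in range(j))
            if i == j:
                L[i][i] = math.sqrt(a[i][i] - s)
            else:
                L[i][j] = (a[i][j] - s) / L[j][j]
    return L


def match_covariance(src, tgt, eps=1e-5) -> Matrix:
    """Whiten src with its own statistics, then color it with tgt's statistics."""
    d = len(src[0])
    mu_s, mu_t = mean(src), mean(tgt)
    L_s = cholesky(covariance(src, mu_s, eps))
    L_t = cholesky(covariance(tgt, mu_t, eps))
    out = []
    for v in src:
        # Whitening: solve L_s z = (v - mu_s) by forward substitution.
        z: List[float] = []
        for i in range(d):
            s = sum(L_s[i][k] * z[k] for k in range(i))
            z.append((v[i] - mu_s[i] - s) / L_s[i][i])
        # Coloring: x = L_t z + mu_t has (approximately) the target mean and covariance.
        out.append([sum(L_t[i][k] * z[k] for k in range(i + 1)) + mu_t[i]
                    for i in range(d)])
    return out


def classwise_match(src, src_labels, tgt, tgt_labels, eps=1e-5) -> Matrix:
    """Match covariance separately inside each segmentation class."""
    src_idx: Dict[int, List[int]] = defaultdict(list)
    tgt_vecs: Dict[int, Matrix] = defaultdict(list)
    for i, c in enumerate(src_labels):
        src_idx[c].append(i)
    for v, c in zip(tgt, tgt_labels):
        tgt_vecs[c].append(list(v))
    out = [list(v) for v in src]  # classes missing from the target are left as-is
    for c, idx in src_idx.items():
        if c not in tgt_vecs:
            continue
        matched = match_covariance([src[i] for i in idx], tgt_vecs[c], eps)
        for i, m in zip(idx, matched):
            out[i] = m
    return out
```

Any whitening matrix W with W Σ_s Wᵀ = I matches second-order statistics. Cholesky and ZCA (eigendecomposition) whitening differ only by an orthogonal rotation of the whitened features, which changes how channels are mixed but not the resulting covariance. The small `eps` regularizer keeps per-class covariances invertible when a class has few pixels, at the cost of a slight bias of about `eps` in the matched covariance. In a full stylization pipeline the vectors would typically come from a pretrained encoder and be decoded back into an image. That part is omitted here.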

Copyright

This material is posted here with permission of the IEEE. Internal or personal use of this material is permitted. However, permission to reprint/republish this material for advertising or promotional purposes or for creating new collective works for resale or redistribution must be obtained from the IEEE by writing to pubs-permissions@ieee.org.