Visualizing and Communicating Errors in Rendered Images

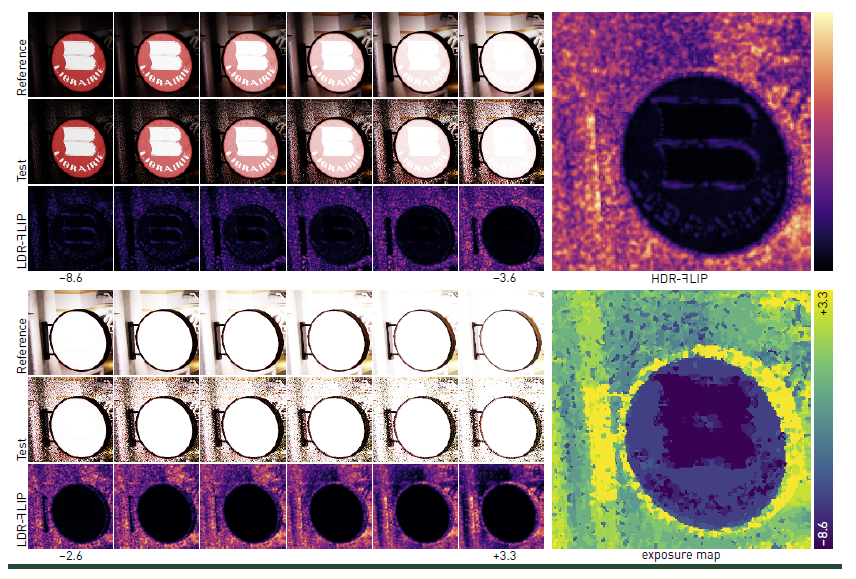

In rendering research and development, it is important to have a formalized way of visualizing and communicating how
and where errors occur when rendering with a given algorithm. Such evaluation is often done by comparing the test
image to a ground-truth reference image. We present a tool for doing this for both low and high dynamic range images.
Our tool is based on a perception-motivated error metric, which computes an error map image. For high dynamic range
images, it also computes a visualization of the exposures that may generate large errors.