Modeling and Analysis of Power Supply Noise Tolerance with Fine-grained GALS Adaptive Clocks

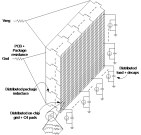

Power supply noise can significantly degrade circuit performance in modern high-performance SoCs. Recently proposed adaptive clocking schemes tolerate this noise by adjusting the clock frequency in response to fast-changing voltage variations. In this paper, we model and quantify power supply noise tolerance in a fine-grained globally asynchronous locally synchronous (GALS) design style combined with an adaptive clocking scheme. Using an experimental setup built from SPICE and Verilog-A models, we quantify how clock-tree insertion delay and spatial workload variations affect power supply noise tolerance in both traditional synchronous adaptive clocking and fine-grained GALS adaptive clocking. Compared to the traditional scheme, fine-grained GALS adaptive clocking significantly reduces both effects and, with them, the margins required to tolerate power supply noise. We quantify the gain with the uncompensated voltage noise metric, defined as the additional voltage margin required for failure-free operation of circuits at the frequency dictated by the adaptive clocking scheme. In our experimental setup for a typical high-performance SoC, fine-grained GALS adaptive clocking reduces uncompensated voltage noise by 78 mV, which is equivalent to a 15% power saving.
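To make the uncompensated voltage noise idea concrete, here is a minimal Python sketch under stated assumptions; it is not the paper's SPICE/Verilog-A methodology. It assumes a sampled supply-voltage trace and an adaptive clock that sizes each cycle for the voltage at its sensor, while the resulting edge reaches the flip-flops one clock-tree insertion delay later, possibly at a location with a different supply (spatial workload variation). The worst-case shortfall between the two voltages is treated as the extra margin needed. The function names `uncompensated_noise` and `power_saving`, the droop waveform, the 1.0 V nominal supply, and the quadratic power model are all hypothetical illustrations.

```python
# Hypothetical sketch: NOT the paper's SPICE/Verilog-A model.
# Assumes a sampled supply-voltage trace and an adaptive clock that sizes each
# cycle for the voltage it senses, while the edge reaches the flops one
# clock-tree insertion delay later.
from typing import Sequence


def uncompensated_noise(v_sensed: Sequence[float],
                        v_logic: Sequence[float],
                        delay_steps: int) -> float:
    """Worst-case shortfall (V) between the voltage the clock was sized for and
    the voltage the clocked logic sees when that edge arrives.

    v_sensed    -- supply voltage at the clock generator's sensor, per time step
    v_logic     -- supply voltage at the clocked logic, same time base
    delay_steps -- clock-tree insertion delay, in time steps (must be >= 0)
    """
    if delay_steps < 0:
        raise ValueError("delay_steps must be non-negative")
    worst = 0.0
    for t in range(len(v_sensed) - delay_steps):
        # Edge generated at t is sized for v_sensed[t] but lands at t + delay_steps.
        shortfall = v_sensed[t] - v_logic[t + delay_steps]
        worst = max(worst, shortfall)
    return worst


def power_saving(v_nom: float, dv: float, exponent: float = 2.0) -> float:
    """Fractional power saved by lowering the supply from v_nom to v_nom - dv,
    assuming power scales as V**exponent (2 = dynamic power at fixed frequency)."""
    return 1.0 - ((v_nom - dv) / v_nom) ** exponent


if __name__ == "__main__":
    # Hypothetical droop waveform (V): nominal 1.0 V with a short dip.
    droop = [1.0] * 5 + [0.95, 0.92, 0.93, 0.96] + [1.0] * 5
    flat = [1.0] * len(droop)

    # Co-located sensor and zero insertion delay: the clock tracks the droop.
    print(uncompensated_noise(droop, droop, 0))            # 0.0
    # Same supply but a 2-step insertion delay: the clock reacts too late.
    print(round(uncompensated_noise(droop, droop, 2), 3))  # 0.08
    # Sensor sees a quiet supply while the clocked logic droops (spatial mismatch).
    print(round(uncompensated_noise(flat, droop, 0), 3))   # 0.08
    # 78 mV margin at an assumed 1.0 V nominal supply with P ~ V**2.
    print(round(power_saving(1.0, 0.078), 3))              # 0.15
```

Under these assumptions the 78 mV figure maps to roughly 15% because 1 - 0.922² ≈ 0.15; the paper's own conversion may rely on a different nominal voltage or power model.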

Copyright

This material is posted here with permission of the IEEE. Internal or personal use of this material is permitted. However, permission to reprint/republish this material for advertising or promotional purposes or for creating new collective works for resale or redistribution must be obtained from the IEEE by writing to pubs-permissions@ieee.org.