Tackling 3D ToF Artifacts Through Learning and the FLAT Dataset

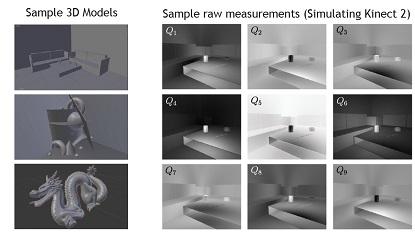

Scene motion, multiple reflections, and sensor noise introduce artifacts into the depth reconstruction performed by time-of-flight (ToF) cameras. We propose a two-stage deep-learning approach that addresses all of these sources of artifacts simultaneously. We also introduce FLAT, a synthetic dataset of 2000 ToF measurements that captures all of these nonidealities and can be used to simulate different hardware. Using the Kinect camera as a baseline, we show lower reconstruction errors on simulated and real data compared with state-of-the-art methods.
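To make the multiple-reflection artifact concrete, here is a minimal sketch assuming an idealized continuous-wave ToF pixel at a single modulation frequency (50 MHz, an arbitrary choice), with no noise and no motion. Depth is recovered from the phase of the summed return, using the standard relation d = c·φ / (4πf). When a second, weaker reflection arrives along a longer path, the phasors add and the recovered depth is biased. The function names are hypothetical, and this is a textbook illustration of multipath interference, not the paper's two-stage network or the FLAT simulator.

```python
import cmath
import math
from typing import Sequence, Tuple

C = 299_792_458.0  # speed of light in m/s


def phase_from_depth(depth_m: float, freq_hz: float) -> float:
    """Round-trip phase shift (radians, wrapped to [0, 2*pi)) for one return path."""
    return (4.0 * math.pi * freq_hz * depth_m / C) % (2.0 * math.pi)


def depth_from_phase(phase_rad: float, freq_hz: float) -> float:
    """Invert phase to depth; unique only within the unambiguous range c / (2f)."""
    return C * (phase_rad % (2.0 * math.pi)) / (4.0 * math.pi * freq_hz)


def measure(returns: Sequence[Tuple[float, float]], freq_hz: float) -> complex:
    """Hypothetical idealized pixel: sum the phasors of all (amplitude, path depth) returns."""
    return sum(a * cmath.exp(1j * phase_from_depth(d, freq_hz)) for a, d in returns)


def reconstruct_depth(returns: Sequence[Tuple[float, float]], freq_hz: float) -> float:
    """Naive reconstruction: take the phase of the measured phasor and convert it to depth."""
    return depth_from_phase(cmath.phase(measure(returns, freq_hz)), freq_hz)


if __name__ == "__main__":
    f = 50e6
    # A single direct return at 1.5 m is recovered exactly.
    print(reconstruct_depth([(1.0, 1.5)], f))
    # Adding a half-strength indirect return along a 2.2 m path biases the estimate.
    print(reconstruct_depth([(1.0, 1.5), (0.5, 2.2)], f))
```

In this toy setup the direct-only case returns 1.5 m, while the two-path case lands noticeably farther away, between the two path lengths. A per-pixel phase inversion has no way to separate the two returns, which is the kind of error a learned correction can target.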
